
🧠 AI · 🟢 Bullish · Importance 7/10

Moonshot AI Releases Attention Residuals to Replace Fixed Residual Mixing with Depth-Wise Attention for Better Scaling in Transformers

2 images via MarkTechPost

🤖 AI Summary

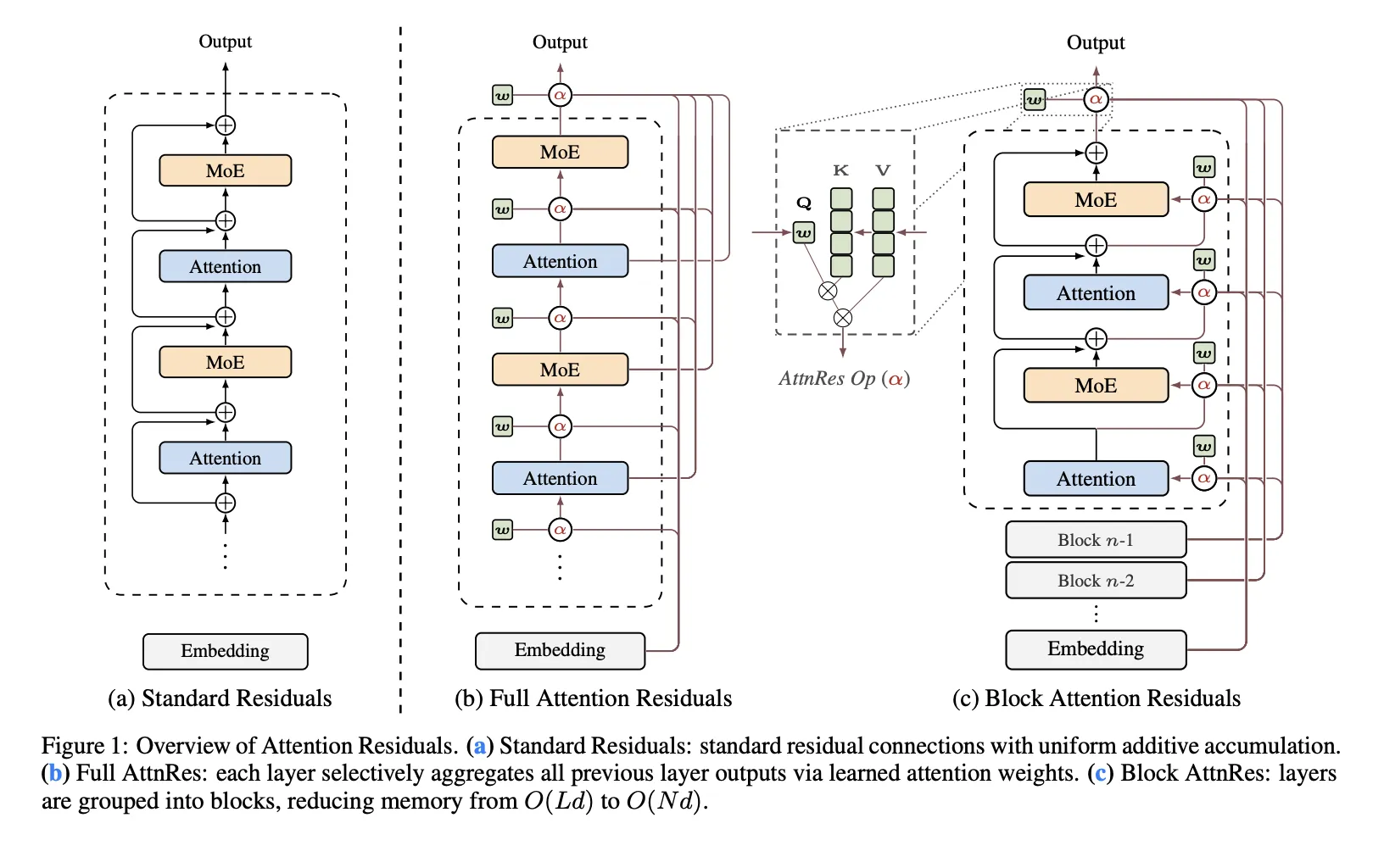

Moonshot AI has released Attention Residuals, an approach that replaces the fixed residual connections in Transformer architectures with attention over depth, that is, over the outputs of earlier layers. It targets a structural issue in PreNorm architectures: the residual stream adds every prior layer's output with the same fixed weight, so a layer cannot emphasize or down-weight earlier representations. Replacing that fixed mixing with learned, depth-wise weights is intended to improve how models scale.
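To make the "fixed mixing" concrete, the contrast can be written out in generic notation. The symbols below are introduced here for illustration and are not taken from the release: $v_0$ is the token embedding, $v_i$ is the output of layer $i$, and $h_l$ is the hidden state passed on after layer $l$. The attention form is a general sketch of depth-wise mixing, not necessarily Moonshot AI's exact formulation.

```latex
% Standard PreNorm residual stream: each layer adds its output to the stream.
h_l = h_{l-1} + v_l, \qquad v_l = f_l\big(\mathrm{Norm}(h_{l-1})\big), \qquad h_0 = v_0

% Unrolled over depth: every prior output enters with the same fixed weight of 1.
h_l = \sum_{i=0}^{l} v_i

% Depth-wise attention (generic form): the weights are computed, not fixed.
h_l = \sum_{i=0}^{l} \alpha_{l,i}\, v_i, \qquad \alpha_{l,i} \ge 0, \qquad \sum_{i=0}^{l} \alpha_{l,i} = 1
```

In the second line no coefficient can change, which is the equal mixing the summary refers to. In the third, the coefficients $\alpha_{l,i}$ can differ per layer and per input.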

Key Takeaways

- Moonshot AI introduces Attention Residuals as an alternative to the traditional residual connections in Transformers.

- The approach uses depth-wise attention, meaning attention over earlier layers' outputs, instead of the fixed residual mixing found in PreNorm architectures.

- Traditional residual connections sum all prior layer outputs with equal, fixed weights, which the release identifies as a structural problem.

- The change aims to improve how Transformer models scale.

- It rethinks one of the core components of modern Transformer design.
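The difference between fixed mixing and depth-wise attention described in these takeaways can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not Moonshot AI's implementation: the function names, the single `query` vector, and the scaled dot-product scoring are all hypothetical choices made for the example.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def fixed_residual_mix(layer_outputs):
    """Standard residual stream: every prior output is added with weight 1."""
    return [sum(column) for column in zip(*layer_outputs)]

def depthwise_attention_mix(layer_outputs, query):
    """Hypothetical depth-wise attention for one token position.

    layer_outputs: list of per-layer output vectors, each of length d_model
    query: assumed learned vector of length d_model (an illustration only)
    Returns the weighted mix and the softmax weights over layers.
    """
    d_model = len(query)
    # Score each prior layer's output against the query (scaled dot product).
    scores = [
        sum(q * h for q, h in zip(query, out)) / math.sqrt(d_model)
        for out in layer_outputs
    ]
    weights = softmax(scores)
    # Weighted sum over depth instead of a plain sum.
    mixed = [
        sum(w * out[j] for w, out in zip(weights, layer_outputs))
        for j in range(d_model)
    ]
    return mixed, weights

# Three toy "layer outputs" for a single token with d_model = 2.
layer_outputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
query = [2.0, 0.0]

fixed = fixed_residual_mix(layer_outputs)  # [2.0, 2.0]
mixed, weights = depthwise_attention_mix(layer_outputs, query)
print("fixed sum:", fixed)
print("attention weights over depth:", weights)
print("attention mix:", mixed)
```

With this toy query, the first and third outputs score higher than the second and so receive larger weights, whereas the fixed sum treats all three identically. A zero query gives uniform weights, so the mix reduces to the average of the layer outputs.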

#moonshot-ai #attention-residuals #transformer-architecture #deep-learning #neural-networks #ai-research #model-scaling #prenorm #residual-connections

