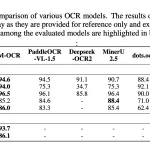

Zhipu AI Introduces GLM-OCR: A 0.9B Multimodal OCR Model for Document Parsing and Key Information Extraction (KIE)

🤖AI Summary

Zhipu AI has released GLM-OCR, a compact 0.9B-parameter multimodal model for real-world document parsing: OCR, table extraction, formula recognition, and key information extraction (KIE). It targets the engineering difficulties of processing actual documents rather than clean demo images, while staying resource-efficient.

Key Takeaways

- →Zhipu AI launched GLM-OCR, a 0.9B-parameter multimodal model for document parsing and OCR tasks.

- →The model is designed to handle real-world document challenges including tables, formulas, and structured data extraction.

- →GLM-OCR focuses on resource efficiency while maintaining performance for practical document processing applications.

- →The model supports key information extraction (KIE), going beyond traditional OCR text recognition.

- →The release targets the gap between clean demo OCR performance and messy real-world document parsing needs.

#zhipu-ai #glm-ocr #multimodal #ocr #document-parsing #kie #ai-model #text-extraction #table-recognition #formula-parsing

Read Original →via MarkTechPost
