167 articles tagged with #anthropic. AI-curated summaries with sentiment analysis and key takeaways from 50+ sources.

AI · Bearish · Decrypt – AI · Mar 2 · 🔥 8/10 · 11

🧠Anthropic's Claude AI was reportedly used in U.S. Central Command operations during Iran strikes, even as the Trump administration ordered federal agencies to sever ties with the AI company. This highlights potential conflicts between government AI usage and political directives regarding AI companies.

AI · Bearish · The Verge – AI · Feb 27 · 7/10 · 6

🧠Defense Secretary Pete Hegseth designated Anthropic as a "supply chain risk" following President Trump's federal ban on the AI company's products. This decision could impact major Pentagon contractors like Palantir and AWS that use Claude AI services in their government work.

AI · Bearish · TechCrunch – AI · Feb 27 · 7/10 · 6

🧠President Trump has ordered all federal agencies to cease using Anthropic's AI services following a dispute with the Pentagon. The directive represents a significant policy shift that could impact the AI company's government contracts and market position.

AI · Bearish · TechCrunch – AI · Feb 27 · 7/10 · 7

🧠The Pentagon is moving to designate Anthropic as a supply-chain risk, with President Trump stating that the government will not do business with the AI company again. This represents a significant regulatory action against a major AI company that could impact the broader AI industry.

AI · Bearish · Wired – AI · Feb 27 · 7/10 · 7

🧠President Trump has issued an order to ban Anthropic from US government use, following Defense Department pressure on the AI company to remove restrictions on military applications of its technology. This represents a significant escalation in government-AI company tensions over military use policies.

AI · Bearish · The Verge – AI · Feb 27 · 7/10 · 8

🧠Trump ordered federal agencies to stop using Anthropic's AI products after CEO Dario Amodei refused to sign an updated Pentagon agreement allowing 'any lawful use' of the company's technology. The dispute centers on Defense Secretary Pete Hegseth's January memo requiring broader military access that could include mass domestic surveillance capabilities.

AI · Neutral · Decrypt – AI · Feb 27 · 7/10 · 8

🧠Anthropic has retired its Claude Opus 3 AI model but given it a Substack blog to document its experiences and reflections. This unusual move highlights growing industry discussions about AI identity, consciousness, and the ethics of model retirement.

AI · Neutral · TechCrunch – AI · Feb 27 · 7/10 · 5

🧠Anthropic and the Pentagon are in conflict over AI deployment in autonomous weapons systems and surveillance applications. This dispute highlights critical questions about corporate versus government control over military AI development and the ethical boundaries of AI technology in national security.

AI · Neutral · TechCrunch – AI · Feb 27 · 7/10 · 6

🧠The Pentagon and Anthropic are engaged in a regulatory battle over military AI control, while communities nationwide resist data center construction. New York State Assemblymember Alex Bores is attempting to find middle ground in AI regulation amid polarized debates.

AI · Neutral · TechCrunch – AI · Feb 27 · 7/10 · 7

🧠Employees from Google and OpenAI have written an open letter supporting Anthropic's ethical stance regarding its Pentagon partnership. Anthropic maintains strict boundaries, refusing to allow its AI technology to be used for mass domestic surveillance or fully autonomous weapons systems.

AI · Bearish · The Verge – AI · Feb 27 · 7/10 · 6

🧠The Pentagon has issued an ultimatum to Anthropic demanding unchecked military access to its AI technology, including for surveillance and autonomous weapons, threatening to designate the company a supply chain risk if refused. This confrontation is prompting broader concerns among tech workers about their companies' military contracts and the future implications of AI weaponization.

AI · Bearish · Decrypt – AI · Feb 27 · 7/10 · 6

🧠Anthropic CEO Dario Amodei announced that the company will refuse to comply with Defense Department demands to lift AI safeguards, as the Pentagon considers designating Anthropic a "supply chain risk." This dispute highlights tensions between AI companies maintaining safety protocols and government agencies seeking access to less restricted AI capabilities.

AI · Neutral · The Verge – AI · Feb 26 · 7/10 · 6

🧠Anthropic has refused the Pentagon's demands for unrestricted AI access, maintaining its stance against mass surveillance and lethal autonomous weapons. The refusal comes amid Defense Secretary Pete Hegseth's push to renegotiate all AI lab contracts with the military.

AI · Neutral · TechCrunch – AI · Feb 26 · 7/10 · 3

🧠Anthropic CEO Dario Amodei refused to comply with Pentagon demands for unrestricted military access to the company's AI systems, citing moral objections. This stance creates tension between AI companies and government defense requirements as regulatory deadlines approach.

AI × Crypto · Bearish · Protos · Feb 26 · 7/10 · 3

🤖Researchers from ETH Zurich and Anthropic have developed AI agents capable of deanonymizing cryptocurrency wallets by analyzing social media posts. This research demonstrates significant privacy vulnerabilities for crypto users who share information across social platforms.


AI · Bearish · Ars Technica – AI · Feb 25 · 7/10 · 6

🧠Defense Secretary Pete Hegseth has confronted Anthropic's CEO after the AI company attempted to restrict military applications of its technology. The CEO was called to Washington to address the Department of Defense's concerns about access to Anthropic's AI capabilities.

AI × Crypto · Neutral · DL News · Feb 25 · 7/10 · 5

🤖Ethereum co-founder Vitalik Buterin is supporting Anthropic in a dispute with the White House regarding military applications of the AI company's technology. This comes amid predictions of AI dystopia from a Citrini report, highlighting tensions between AI development and government oversight.

$ETH

AI · Bearish · CoinTelegraph – AI · Feb 25 · 7/10 · 4

🧠Anthropic alleges that Chinese AI companies DeepSeek, Moonshot, and MiniMax conducted massive distillation attacks against its Claude AI system, creating 24,000 accounts and making 16 million exchanges to scrape training data. This represents a significant case of AI model theft and highlights growing tensions in the global AI competition.

AI · Bullish · IEEE Spectrum – AI · Feb 15 · 7/10 · 5

🧠NASA's Perseverance rover successfully completed its first AI-planned autonomous drive on Mars, traveling 456 meters using waypoints generated by Anthropic's Claude AI. The AI analyzed orbital imagery and elevation data to identify hazards and plan safe routes, representing a significant step toward fully autonomous planetary exploration.

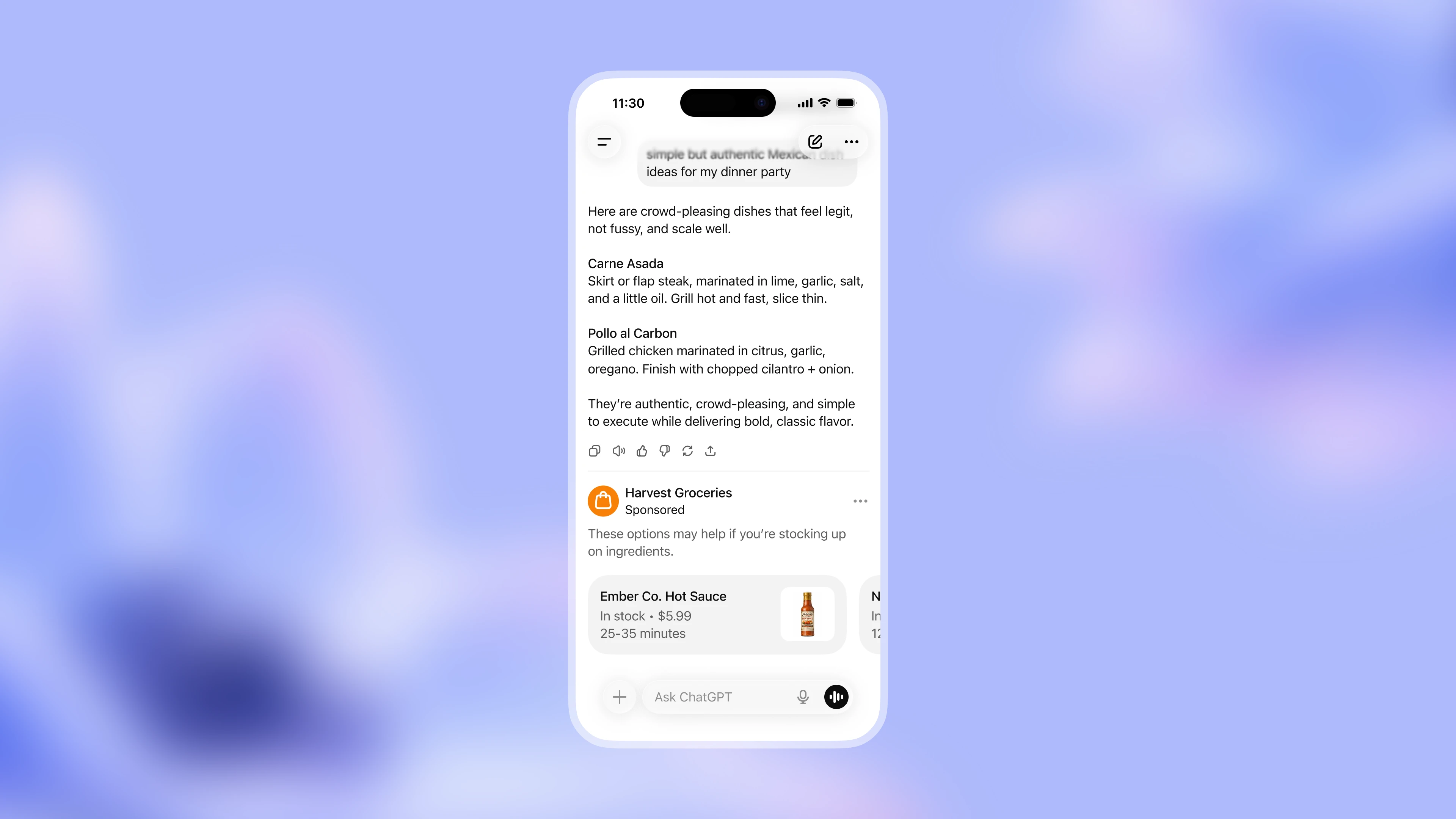

AI · Neutral · Last Week in AI · Jan 23 · 7/10

🧠OpenAI plans to test advertising in ChatGPT amid high operational costs, while Sequoia prepares to invest in competitor Anthropic. Chinese AI company Zhipu AI partners with Huawei to reduce dependence on US semiconductors, highlighting ongoing tech tensions.

🏢 OpenAI · 🏢 Anthropic · 🧠 ChatGPT

AI · Bullish · VentureBeat – AI · Jan 13 · 7/10 · 6

🧠Salesforce launched a completely rebuilt Slackbot AI agent powered by Anthropic's Claude, transforming it from a basic notification tool into a comprehensive workplace AI assistant that can search enterprise data, draft documents, and take actions. The new Slackbot is now available to Business+ and Enterprise+ customers. Internally at Salesforce, it achieved a 96% satisfaction rate, with two-thirds of the company's 80,000 employees adopting it.


AI · Bullish · VentureBeat – AI · Jan 12 · 7/10 · 2

🧠Anthropic launched Cowork, a Claude Desktop agent that allows non-technical users to work with files on their computer without coding, available as a research preview for Claude Max subscribers ($100-200/month). The tool was reportedly built in approximately 1.5 weeks largely using Claude Code itself, demonstrating how AI tools are being used to develop better AI tools.


AI × Crypto · Bullish · VentureBeat – AI · Jan 7 · 7/10 · 4

🤖Nous Research, backed by crypto venture firm Paradigm, released NousCoder-14B, an open-source AI coding model that achieves 67.87% accuracy on competitive programming benchmarks. The model was trained in just four days using 48 Nvidia B200 GPUs and comes with complete transparency, including open-sourced training code and methodology.

AI · Bullish · VentureBeat – AI · Jan 5 · 7/10 · 4

🧠Boris Cherny, creator of Claude Code at Anthropic, revealed his development workflow, which uses 5 parallel AI agents and exclusively runs the slowest but smartest model, Opus 4.5. His approach shifts coding from sequential, one-task-at-a-time work to fleet management, achieving the output capacity of a small engineering team while maintaining a shared knowledge file that turns AI mistakes into permanent lessons.

AI · Bullish · OpenAI News · Aug 27 · 7/10 · 7

🧠OpenAI and Anthropic conducted their first joint safety evaluation, testing each other's AI models for various risks including misalignment, hallucinations, and jailbreaking vulnerabilities. This cross-laboratory collaboration represents a significant step in industry-wide AI safety cooperation and standardization.