98 articles tagged with #foundation-models. AI-curated summaries with sentiment analysis and key takeaways from 50+ sources.

AI · Bearish · arXiv – CS AI · Apr 7 · 6/10

🧠A new research study reveals that major large language models exhibit systematic bias toward American English over British English across training data, tokenization, and outputs. The research introduces DiAlign, a method for measuring dialectal alignment, and finds evidence of linguistic homogenization that could impact global AI equity.

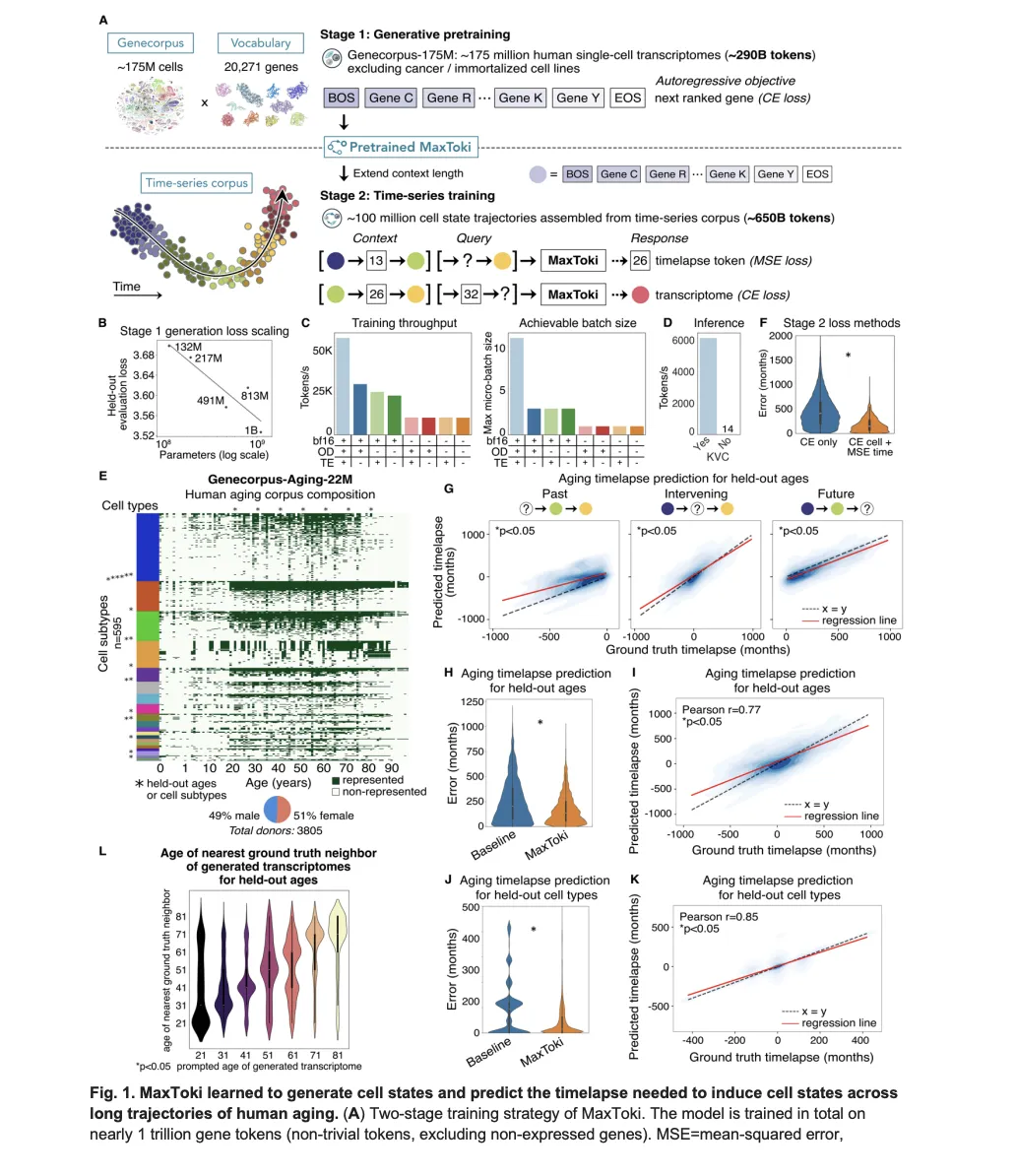

AI · Bullish · MarkTechPost · Apr 5 · 6/10

🧠MaxToki is a new AI foundation model that can predict cellular aging patterns and trajectories, addressing a key limitation in existing biological models that only analyze cells as static snapshots. The technology represents a significant advancement in computational biology by incorporating temporal dynamics into cellular analysis.

AI · Bullish · arXiv – CS AI · Mar 17 · 6/10

🧠Researchers propose ES-Merging, a new framework for combining specialized biological multimodal large language models (MLLMs) by using embedding space signals rather than traditional parameter-based methods. The approach estimates merging coefficients at both layer-wise and element-wise granularities, outperforming existing merging techniques and even task-specific fine-tuned models on cross-modal scientific problems.

AI · Bullish · arXiv – CS AI · Mar 17 · 6/10

🧠Researchers introduce MVHOI, a new AI framework that significantly improves human-object interaction video generation by handling complex 3D manipulations through a two-stage process using 3D foundation models. The system can create realistic long-duration videos showing intricate object manipulations from multiple viewpoints, addressing limitations of existing approaches that struggle with non-planar movements.

AI · Neutral · arXiv – CS AI · Mar 17 · 6/10

🧠Research shows that synthetic data designed to enhance in-context learning capabilities in AI models doesn't necessarily improve performance. The study found that while targeted training can increase specific neural mechanisms, it doesn't make them more functionally important compared to natural training approaches.

AI · Neutral · arXiv – CS AI · Mar 16 · 6/10

🧠Researchers propose integrating causal methods into machine learning systems to balance competing objectives like fairness, privacy, robustness, accuracy, and explainability. The paper argues that addressing these principles in isolation leads to conflicts and suboptimal solutions, while causal approaches can help navigate trade-offs in both trustworthy ML and foundation models.

AI · Neutral · arXiv – CS AI · Mar 12 · 6/10

🧠Researchers propose RandMark, a new method for watermarking visual foundation models to protect intellectual property rights. The approach uses a small encoder-decoder network to embed random digital watermarks into internal representations, enabling ownership verification with low false detection rates.

AI · Bullish · arXiv – CS AI · Mar 11 · 6/10

🧠FALCON introduces a novel vision-language-action model that bridges the spatial reasoning gap by injecting 3D spatial tokens into action heads while preserving language reasoning capabilities. The system achieves state-of-the-art performance across simulation benchmarks and real-world tasks by leveraging spatial foundation models to provide geometric priors from RGB input alone.

AI · Bullish · arXiv – CS AI · Mar 9 · 6/10

🧠Researchers developed a new training method to improve the robustness of AI foundation models like SAM3 for medical image segmentation by reducing sensitivity to prompt variations. The approach groups semantically similar prompts together and uses consistency constraints to ensure more reliable predictions across different prompt formulations.

AI · Bullish · arXiv – CS AI · Mar 9 · 6/10

🧠Researchers introduce 3DThinker, a new framework that enables vision-language models to perform 3D spatial reasoning from limited 2D views without requiring 3D training data. The system uses a two-stage training approach to align 3D representations with foundation models and demonstrates superior performance across multiple benchmarks.

AI · Bullish · arXiv – CS AI · Mar 3 · 6/10 · 7

🧠Researchers have developed RGLM, a new approach to improve how large language models understand and process graph data by incorporating explicit graph supervision alongside text instructions. The method addresses limitations in existing Graph-Tokenizing LLMs that rely too heavily on text supervision, leading to underutilization of graph context.

AI · Bullish · arXiv – CS AI · Mar 3 · 7/10 · 4

🧠Researchers propose combining In-Weight Learning (IWL) and In-Context Learning (ICL) through modular memory architectures to solve continual learning challenges in AI. The framework aims to enable AI agents to continuously adapt and accumulate knowledge without catastrophic forgetting, addressing key limitations of current foundation models.

AI · Bullish · arXiv – CS AI · Mar 3 · 6/10 · 4

🧠Researchers introduce Intention-Conditioned Flow Occupancy Models (InFOM), a new reinforcement learning approach that uses flow matching to predict future states and incorporates user intention as a latent variable. The method reports a 1.8x improvement in median return and 36% higher success rates across 40 benchmark tasks.

AI · Bullish · arXiv – CS AI · Mar 3 · 6/10 · 4

🧠Researchers introduce TTOM (Test-Time Optimization and Memorization), a training-free framework that improves compositional video generation in Video Foundation Models during inference. The system uses layout-attention optimization and parametric memory to better align text prompts with generated video outputs, showing strong transferability across different scenarios.

AI · Bullish · arXiv – CS AI · Mar 3 · 6/10 · 8

🧠Researchers have developed FCN-LLM, a framework that enables Large Language Models to understand brain functional connectivity networks from fMRI scans through multi-task instruction tuning. The system uses a multi-scale encoder to capture brain features and demonstrates strong zero-shot generalization across unseen datasets, outperforming conventional supervised models.

AI · Bullish · arXiv – CS AI · Mar 3 · 6/10 · 7

🧠Researchers developed a dual-pipeline framework for bird image segmentation using foundation models including Grounding DINO 1.5, YOLOv11, and SAM 2.1. The supervised pipeline achieved state-of-the-art results with 0.912 IoU on the CUB-200-2011 dataset, while the zero-shot pipeline achieved 0.831 IoU using only text prompts.

AI · Bullish · arXiv – CS AI · Mar 3 · 6/10 · 9

🧠Researchers propose TARA (Taxonomy-Aware Representation Alignment), a new method to improve Large Multimodal Models' ability to recognize visual categories in hierarchical taxonomies. The approach aligns visual features with biology foundation models to enable better recognition of both known and novel biological categories.

AI · Bearish · arXiv – CS AI · Mar 3 · 6/10 · 6

🧠Research reveals that leading foundation models (LLMs) perform poorly on real-world educational tasks despite excelling on AI benchmarks. The study found that 50% of misalignment errors are shared across models due to common pretraining approaches, with model ensembles actually worsening performance on learning outcomes.

AI · Bullish · arXiv – CS AI · Mar 2 · 6/10 · 14

🧠Researchers have developed SleepLM, a family of AI foundation models that combine natural language processing with sleep analysis using polysomnography data. The system can interpret and describe sleep patterns in natural language, trained on over 100K hours of sleep data from 10,000+ individuals, enabling new capabilities like language-guided sleep event detection and zero-shot generalization to novel sleep analysis tasks.

AI · Bullish · arXiv – CS AI · Mar 2 · 7/10 · 13

🧠Researchers have developed Brain-OF, the first omnifunctional brain foundation model that can process fMRI, EEG, and MEG data simultaneously within a unified framework. Trained on 40 datasets, the model introduces techniques such as the Any-Resolution Neural Signal Sampler and Masked Temporal-Frequency Modeling, and achieves superior performance across diverse neuroscience tasks.

AI · Bullish · arXiv – CS AI · Mar 2 · 7/10 · 12

🧠Researchers introduce HDFLIM, a new framework that aligns vision and language AI models without requiring computationally expensive fine-tuning by using hyperdimensional computing to create cross-modal mappings while keeping foundation models frozen. The approach achieves comparable performance to traditional training methods while being significantly more resource-efficient.

AI · Bullish · arXiv – CS AI · Mar 2 · 7/10 · 16

🧠Researchers introduced TradeFM, a 524M-parameter generative AI model that learns from billions of trade events across 9,000+ equities to understand market microstructure. The model can generate synthetic market data and generalizes across different markets without asset-specific calibration, potentially enabling new applications in trading and market simulation.

AI · Bullish · arXiv – CS AI · Mar 2 · 7/10 · 12

🧠Researchers developed a new framework for selecting optimal medical AI foundation models without costly fine-tuning, achieving 31% better performance than existing methods. The topology-driven approach evaluates manifold tractability rather than statistical overlap to better assess model transferability for medical image segmentation tasks.

AI · Bullish · arXiv – CS AI · Mar 2 · 7/10 · 11

🧠Researchers propose a new framework for foundation world models that enables autonomous agents to learn, verify, and adapt reliably in dynamic environments. The approach combines reinforcement learning with formal verification and adaptive abstraction to create agents that can synthesize verifiable programs and maintain correctness while adapting to novel conditions.

AI · Bullish · arXiv – CS AI · Mar 2 · 7/10 · 19

🧠Researchers developed SocialNav, a foundation model for socially-aware robot navigation that uses a hierarchical architecture to understand social norms and generate compliant movement paths. The model was trained on 7 million samples and achieved 38% better success rates and 46% improved social compliance compared to existing methods.