AI · Bullish · Fortune Crypto · 4d ago · 7/10

🧠Intel's new CEO Lip-Bu Tan has implemented significant operational restructuring, cutting management layers in half while securing major capital infusions from Nvidia and SoftBank within 13 months. The company's stock has surged nearly 500%, reflecting investor confidence in the turnaround strategy as Intel works to regain competitiveness in AI chip manufacturing.

🏢 Nvidia

AI · Bullish · Crypto Briefing · 6d ago · 7/10

🧠Nvidia is entering the personal computer market with a new AI chip designed to compete against processors from Intel and AMD. This move could shift AI processing from cloud servers to individual devices, potentially improving user data privacy and creating a significant competitive challenge for established PC chipmakers.

🏢 Nvidia

AI · Bearish · Blockonomi · 6d ago · 7/10

🧠Intel's stock fell approximately 5% in premarket trading after NVIDIA announced a competing PC chip on the same day Intel launched its Crescent Island AI GPU. The simultaneous product announcements highlight intensifying competition in the PC processor market, with NVIDIA leveraging its AI momentum to challenge Intel's traditional computing dominance.

🏢 Nvidia

AI · Neutral · Crypto Briefing · 6d ago · 7/10

🧠Nvidia announced a new Arm-based PC superchip that triggered market volatility, with Intel and AMD shares declining while Nvidia's stock rose. The move represents a significant architectural shift that could challenge the x86 dominance long held by Intel and AMD in the PC processor market.

🏢 Nvidia

AI · Bullish · Crypto Briefing · Jun 1 · 7/10

🧠Nvidia has unveiled the GB10 Grace Blackwell Superchip, a new processor designed to democratize AI computing by reducing costs and enabling broader access to powerful AI capabilities. The chip positions Nvidia to compete directly with Apple and Intel in the personal AI computing market, representing a significant shift toward making advanced AI technology more accessible to businesses and developers.

🏢 Nvidia

General · Bullish · Crypto Briefing · May 29 · 7/10

📰Intel and 3DGS are establishing a $3.3 billion substrate manufacturing plant in India, a move designed to strengthen India's semiconductor ecosystem and reduce the country's reliance on imported substrates. This investment reflects broader efforts to diversify and localize global chip supply chains amid geopolitical tensions and supply chain vulnerabilities.

AI · Bullish · Crypto Briefing · May 11 · 7/10

🧠Intel, Micron, and Qualcomm shares reached record highs at market open, driven by robust demand for AI-related semiconductor chips. The surge underscores how artificial intelligence adoption is reshaping the technology sector, though experts warn that volatility could emerge if AI growth trajectories slow or supply chain dynamics shift.

General · Bullish · Blockonomi · May 11 · 7/10

📰Intel's stock surged 14% following announcements of preliminary negotiations with Apple for chip manufacturing and talks with SK Hynix. The developments signal growing confidence in Intel's foundry business expansion as it seeks to diversify revenue beyond its traditional processor manufacturing.

General · Bullish · Crypto Briefing · Apr 18 · 7/10

📰Intel's CEO has announced strategic partnerships with Terafab and Google while acquiring a 49% stake in an Irish semiconductor fabrication facility. These moves aim to strengthen U.S. semiconductor supply chain independence and reduce reliance on concentrated global manufacturing, with potential long-term implications for chip market structure and geopolitical tech competition.

AI · Bullish · Blockonomi · Apr 6 · 7/10

🧠KeyBanc raised Intel's price target to $70 and maintained Micron's target at $600, citing AI server demand driving chip shortages. The analysis anticipates price surges by Q2 2026 as supply constraints continue in the AI chip market.

AI · Bullish · Wired – AI · Apr 6 · 7/10

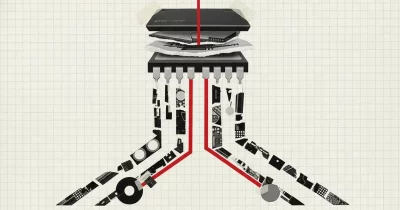

🧠Intel is making a major strategic bet on advanced chip packaging technology, positioning itself at the center of the AI boom. The packaging business could generate billions in revenue as AI demand drives the need for more sophisticated chip assembly and interconnection solutions.

AI · Neutral · Blockonomi · Mar 26 · 7/10

🧠AMD reported strong performance with $34.6B revenue driven by AI growth, while Intel faces challenges with flat sales. Market analysts have upgraded AMD to 'Moderate Buy' while downgrading Intel to 'Reduce', highlighting the diverging trajectories of the two chip giants.

AI · Bullish · Blockonomi · Mar 16 · 7/10

🧠Intel stock surged 4.4% following news of potential collaboration with Nvidia, along with AI partnerships with Ericsson and Infosys. The rally was also driven by progress reports on Intel's advanced 18A manufacturing process.

🏢 Nvidia

AI · Bullish · Hugging Face Blog · Oct 16 · 7/10 · 8

🧠Google Cloud announced its C4 compute instances deliver 70% total cost of ownership (TCO) improvement for GPT open-source models through collaboration with Intel and Hugging Face. This development represents a significant cost reduction for AI model deployment and training workloads.

AI · Bullish · Crypto Briefing · 3d ago · 6/10

🧠Foxconn and Intel announced a partnership to jointly develop AI infrastructure, with details to be revealed at Computex 2026. The collaboration aims to strengthen both companies' positions in the rapidly expanding AI hardware ecosystem and accelerate innovation in AI computing solutions.

AI · Bearish · Blockonomi · 5d ago · 6/10

🧠Intel unveiled new products at Computex 2026, including Xeon 6+ processors and AI infrastructure systems, yet its stock declined. The market's negative reaction suggests investors remain concerned about Intel's competitive positioning and execution challenges in the AI and data center markets.

AI · Neutral · Ars Technica – AI · 6d ago · 6/10

🧠Intel has announced Crescent Island, an upcoming AI chip designed to compete with Nvidia and AMD offerings by delivering superior cost-efficiency and thermal performance through air-cooling and LPDDR5 memory integration. The move represents Intel's aggressive push to recapture market share in the competitive AI accelerator segment dominated by Nvidia's GPUs.

🏢 Nvidia

AI · Bullish · Crypto Briefing · Jun 1 · 6/10

🧠Intel plans to launch a lower-cost AI chip by year-end, aiming to democratize access to AI hardware and challenge market leaders like NVIDIA. The move could reshape the competitive landscape of AI accelerators by offering more affordable alternatives to enterprises and developers.

AI · Bullish · Crypto Briefing · May 29 · 6/10

🧠MediaTek is adopting Intel's advanced chip packaging technology alongside TSMC's manufacturing services, signaling a strategic shift toward supply chain diversification. This dual-sourcing approach strengthens resilience in AI hardware production by reducing dependence on single suppliers.

AI · Bullish · Blockonomi · May 28 · 6/10

🧠Wolfe Research has identified AMD as the primary beneficiary of the AI CPU market, projecting $44B in revenue by 2028. The analysis suggests Intel is losing market share while Nvidia and Arm also capitalize on growth driven by agentic AI adoption.

🏢 Nvidia

AI · Bullish · Crypto Briefing · May 11 · 6/10

🧠SK Hynix and Intel have partnered on 2.5D packaging technology to integrate HBM (High Bandwidth Memory) with logic chips, a development expected to reduce GPU production costs and improve computational efficiency. This collaboration has significant implications for high-performance computing sectors, including AI infrastructure and data center operations.

General · Neutral · Blockonomi · May 11 · 6/10

📰Intel stock reached record highs following news of an Apple chip partnership deal. Bank of America maintained its Underperform rating despite setting a $96 price target, cautioning that the positive sentiment is already reflected in current valuations and that upside potential is limited.

AI · Bullish · Crypto Briefing · May 8 · 6/10

🧠AMD and Intel reached all-time highs driven by surging demand for agentic AI applications and CPU growth, with Intel's US-backed manufacturing turnaround providing additional momentum. The rally reflects broader strength in semiconductor stocks as the AI infrastructure buildout accelerates across the computing landscape.

AI · Bullish · Blockonomi · Apr 20 · 6/10

🧠Morgan Stanley has raised its Intel price target while signaling a preference for memory chip manufacturers Micron and Sandisk as superior AI exposure plays. Sandisk's addition to the Nasdaq 100, followed by Wells Fargo raising its price target to $975, reflects growing institutional confidence in memory makers over traditional processors in the AI infrastructure boom.

General · Neutral · Blockonomi · Apr 19 · 6/10

📰U.S. equity markets reached new highs for the third consecutive week, with major indices showing sustained momentum. Key catalysts this week include Tesla earnings, Iran diplomatic developments, retail sales data, and Intel results, any of which could drive significant volatility across sectors.