#llm News & Analysis

This page aggregates coverage related to #llm, with 962 articles indexed overall and 23 published in the past 30 days. Recent reporting is predominantly neutral in sentiment (65.2%), while the bullish share has fallen 26.3 percentage points compared with the prior 90 days. Most indexed content comes from arXiv's computer science and AI sections, supplemented by coverage from Apple Machine Learning and MIT News.
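As a rough illustration of the arithmetic behind these figures, the sketch below computes a sentiment share and a percentage-point change. The function names are hypothetical, the count of 15 neutral articles is an assumption inferred from 65.2% of 23, and the two bullish shares are invented purely to illustrate a 26.3-point drop; none of these values are stated on the page.

```python
def share_pct(count: int, total: int) -> float:
    """Return count as a percentage of total, rounded to one decimal place."""
    if total <= 0:
        raise ValueError("total must be positive")
    return round(100.0 * count / total, 1)


def pp_change(current_pct: float, prior_pct: float) -> float:
    """Return the change in percentage points (not a relative percent change)."""
    return round(current_pct - prior_pct, 1)


# Assumption: 15 neutral articles out of 23 reproduces the reported 65.2%.
neutral_share = share_pct(15, 23)

# Hypothetical bullish shares, chosen only to illustrate a -26.3pp move.
bullish_delta = pp_change(30.4, 56.7)

print(neutral_share, bullish_delta)
```

A percentage-point change is a plain difference between two shares, so a move from 56.7% to 30.4% is a drop of 26.3pp even though the relative decline is much larger.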

Discussion frequently centers on models including Llama, Claude, and GPT-4. Related coverage typically touches on #machine-learning, #research, and #ai-research, with significant overlap in #arxiv submissions. Scan the article list below to explore recent developments and analysis.

Sentiment · last 30d (23 articles) · -26.3pp bullish vs prior 90d

Top sources: arXiv – CS AI · 813; Apple Machine Learning · 8; MIT News – AI · 4; MarkTechPost · 4; Import AI (Jack Clark) · 3

Most-discussed entities: Llama · 17; Claude · 17; GPT-4 · 16; Gemini · 14; ChatGPT · 10

AI · Bullish · Crypto Briefing · 8h ago · 7/10

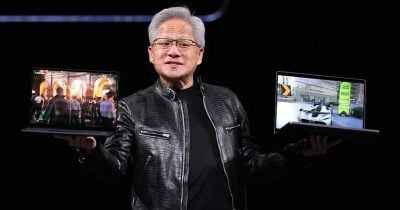

🧠Nvidia has launched a new AI chip designed for personal computers that can run 120 billion parameter models locally, marking the company's strategic entry into the consumer PC market. This development prioritizes on-device AI processing, potentially shifting how users interact with AI applications while addressing data privacy concerns by reducing reliance on cloud computing.

🏢 Nvidia

AI · Bullish · Fortune Crypto · 4d ago · 🔥 8/10

🧠Anthropic raised $65 billion in Series H funding, more than doubling its valuation in three months and approaching a $1 trillion private company valuation—an unprecedented milestone in startup history. The round was led by Altimeter, Dragoneer, Greenoaks, and Sequoia, reflecting massive investor confidence in AI infrastructure and large language models.

🏢 Anthropic

AI · Bullish · arXiv – CS AI · 4d ago · 7/10

🧠Researchers propose a text-only framework for synthesizing vision-language model training data, eliminating the need for costly image-text pairs. The method generates two datasets (Unicorn-1.2M and Unicorn-471K-Instruction) through a three-stage process that converts text captions into synthetic visual representations, potentially reducing training costs and accelerating VLM development.

AI × Crypto · Bullish · Crypto Briefing · 4d ago · 7/10

🤖Ethereum co-founder Vitalik Buterin has outlined his vision for self-sovereign large language models (LLMs) and advocated for AI systems specifically designed for Ethereum's ecosystem. His proposals aim to enhance privacy, security, and operational efficiency within decentralized networks by reducing reliance on centralized AI providers.

$ETH

AI · Bullish · Decrypt – AI · 6d ago · 7/10

🧠StepFun, a Shanghai-based AI lab known for developing efficient large language models, has achieved top benchmark results in voice AI technology with notable sensitivity to acoustic nuances like sighs. The breakthrough demonstrates the lab's capability to extend its LLM expertise into multimodal AI, potentially reshaping voice recognition and AI assistant markets.

AI · Bullish · Hugging Face Blog · May 23 · 7/10

🧠NVIDIA's Nemotron-Labs team has developed diffusion-based language models that significantly accelerate text generation speeds, approaching real-time inference capabilities. This advancement combines diffusion model efficiency with language understanding, potentially reshaping how AI systems balance quality and computational cost.

AI · Bullish · AI News · May 20 · 7/10

🧠Alibaba has unveiled the Zhenwu M890 AI processor specifically designed for AI agents, coupled with a multi-year silicon roadmap and a new large language model. This integrated approach signals that Alibaba is building a comprehensive AI stack rather than simply compensating for US export restrictions, fundamentally reshaping the competitive landscape in AI chip development.

AI · Bearish · The Verge – AI · May 11 · 7/10

🧠Google's Threat Intelligence Group discovered and blocked the first known zero-day exploit developed with AI assistance, which cybercriminals planned to use for mass exploitation of an open-source web administration tool to bypass two-factor authentication. Google identified AI involvement through telltale signs in the Python script, including hallucinated CVSS scores and LLM-style formatting, marking a significant escalation in AI-enabled cyber threats.

AI · Bullish · arXiv – CS AI · May 11 · 7/10

🧠Researchers have developed an automated framework to generate a large-scale dataset of 163,000 molecule-description pairs by combining rule-based chemical nomenclature parsing with LLM guidance, achieving 98.6% precision in aligning molecular structures with natural language descriptions. This addresses a critical bottleneck in training language models for chemistry applications where manual annotation is prohibitively expensive.

🏢 Hugging Face

AI · Bullish · arXiv – CS AI · May 11 · 7/10

🧠Researchers introduce Implicit Compression Regularization (ICR), a novel training method that reduces unnecessary verbosity in AI reasoning models without sacrificing accuracy. By leveraging the shortest correct responses within training batches as natural compression targets, ICR maintains performance while producing more concise outputs—addressing a key limitation of existing length-penalty approaches.

AI × Crypto · Bullish · arXiv – CS AI · May 9 · 7/10

🤖Researchers demonstrated quantum-enhanced large language models by integrating Cayley-parameterised unitary adapters into pre-trained LLMs and executing them on IBM's 156-qubit quantum processor. The approach improved Llama 3.1 8B's perplexity by 1.4% using only 6,000 additional parameters, marking the first practical validation of quantum-classical hybrid AI on real quantum hardware at scale.

🏢 Perplexity · 🧠 Llama

AI · Bullish · arXiv – CS AI · May 7 · 7/10

🧠Researchers propose Stream of Revision, a new paradigm for LLM-based code generation that allows models to revise and correct their output during generation rather than producing code in a strictly linear fashion. By introducing special action tokens enabling backtracking and editing within a single forward pass, the approach significantly reduces security vulnerabilities in generated code with minimal computational overhead.

AI · Neutral · arXiv – CS AI · May 4 · 7/10

🧠Researchers propose that information retrieval for LLMs requires a fundamental shift toward denoising—prioritizing signal quality over quantity—because unlike humans, language models are vulnerable to hallucinations when processing noisy or irrelevant data within limited context windows. The paper introduces a four-stage framework addressing IR challenges from inaccessibility to unverifiability, with practical applications across RAG systems, coding agents, and multimodal understanding.

AI × Crypto · Neutral · arXiv – CS AI · May 1 · 7/10

🤖Researchers introduce Intent2Tx, a benchmark dataset of nearly 32,000 real-world Ethereum transactions designed to evaluate how well large language models can translate natural language instructions into executable blockchain transactions. Testing 16 state-of-the-art LLMs reveals a critical gap: while models generate syntactically valid code, they frequently fail to achieve intended on-chain state transitions, exposing fundamental limitations in current AI's ability to reliably bridge user intent and blockchain execution.

$ETH

AI · Bullish · arXiv – CS AI · May 1 · 7/10

🧠Researchers have introduced VeriTaS, a dynamic benchmark for evaluating automated fact-checking systems across 25,000 real-world claims in 54 languages and multiple media formats. Unlike static benchmarks vulnerable to data leakage from LLM pretraining, VeriTaS updates quarterly with claims from 104 professional fact-checkers, maintaining relevance as foundation models evolve.

AI · Neutral · arXiv – CS AI · Apr 20 · 7/10

🧠Researchers conducted a comprehensive empirical study on scaling laws for large language models during reinforcement learning post-training, using Qwen2.5 models ranging from 0.5B to 72B parameters. The study reveals that larger models demonstrate superior learning efficiency, performance can be predicted via power-law models, and data reuse proves highly effective in constrained environments, providing practical guidelines for optimizing LLM reasoning capabilities.

AI · Bearish · arXiv – CS AI · Apr 15 · 7/10

🧠Researchers have identified a critical privacy vulnerability in LLM-based multi-agent systems, demonstrating that communication topologies can be reverse-engineered through black-box attacks. The Communication Inference Attack (CIA) achieves up to 99% accuracy in inferring how agents communicate, exposing significant intellectual property and security risks in AI systems.

AI · Bullish · arXiv – CS AI · Apr 15 · 7/10

🧠Researchers propose Schema-Adaptive Tabular Representation Learning, which uses LLMs to convert structured clinical data into semantic embeddings that transfer across different electronic health record schemas without retraining. When combined with imaging data for dementia diagnosis, the method achieves state-of-the-art results and outperforms board-certified neurologists on retrospective diagnostic tasks.

AI · Bullish · arXiv – CS AI · Apr 14 · 7/10

🧠Researchers propose Generative Actor-Critic (GenAC), a new approach to value modeling in large language model reinforcement learning that uses chain-of-thought reasoning instead of one-shot scalar predictions. The method addresses a longstanding challenge in credit assignment by improving value approximation and downstream RL performance compared to existing value-based and value-free baselines.

AI · Bullish · arXiv – CS AI · Apr 14 · 7/10

🧠Researchers introduce ReflectiChain, an AI framework combining large language models with generative world models to improve semiconductor supply chain resilience against geopolitical disruptions. The system demonstrates 250% performance improvements over standard LLM approaches by integrating physical environmental constraints and autonomous policy learning, restoring operational capacity from 13.3% to 88.5% under extreme scenarios.

AI · Bullish · OpenAI News · Apr 10 · 7/10

🧠OpenAI's suite of products—including ChatGPT, Codex, and developer APIs—demonstrates practical applications of artificial intelligence across work, software development, and consumer tasks. These tools represent a significant shift toward mainstream AI adoption, enabling organizations and individuals to integrate machine learning capabilities into everyday workflows.

🏢 OpenAI · 🧠 ChatGPT

AI × Crypto · Neutral · arXiv – CS AI · Apr 7 · 7/10

🤖Researchers introduced CREBench, a benchmark for evaluating large language models' capabilities in cryptographic binary reverse engineering. The best-performing model (GPT-5.4) achieved a 64.03% success rate, while human experts scored 92.19%, showing that AI still lags behind human expertise in cryptographic analysis tasks.

🧠 GPT-5

AI · Bullish · arXiv – CS AI · Apr 7 · 7/10

🧠Researchers developed LightThinker++, a new framework that enables large language models to compress intermediate reasoning thoughts and manage memory more efficiently. The system reduces peak token usage by up to 70% while improving accuracy by 2.42% and maintaining performance over extended reasoning tasks.

AI · Bearish · arXiv – CS AI · Apr 7 · 7/10

🧠Research reveals that large language models like DeepSeek-V3.2, Gemini-3, and GPT-5.2 show rigid adaptation patterns when learning from changing environments, particularly struggling with loss-based learning compared to humans. The study found LLMs demonstrate asymmetric responses to positive versus negative feedback, with some models showing extreme perseveration after environmental changes.

🧠 GPT-5 · 🧠 Gemini

AI · Neutral · arXiv – CS AI · Apr 7 · 7/10

🧠A new research study reveals that truth directions in large language models are less universal than previously believed, with significant variations across different model layers, task types, and prompt instructions. The findings show truth directions emerge earlier for factual tasks but later for reasoning tasks, and are heavily influenced by model instructions and task complexity.