2501 articles tagged with #machine-learning. AI-curated summaries with sentiment analysis and key takeaways from 50+ sources.

AI · Bullish · arXiv – CS AI · Apr 6 · 6/10

🧠 Researchers have developed OPRIDE, a new algorithm for offline preference-based reinforcement learning that significantly improves query efficiency. The algorithm addresses key challenges of inefficient exploration and overoptimization through principled exploration strategies and discount scheduling mechanisms.

AI · Bullish · arXiv – CS AI · Apr 6 · 6/10

🧠 This survey paper examines AI's role in developing 6G wireless networks, covering key technologies like deep learning, reinforcement learning, and federated learning. The research addresses how AI will enable 6G's promise of high data rates and low latency for applications like smart cities and autonomous systems, while identifying challenges in scalability, security, and energy efficiency.

AI · Bullish · arXiv – CS AI · Apr 6 · 6/10

🧠 Researchers developed enhanced techniques using Few-Shot Learning, Chain-of-Thought reasoning, and Retrieval Augmented Generation to improve large language models' ability to detect and repair errors in MPI programs. The approach raised error detection accuracy to 77%, up from 44% when using ChatGPT directly, addressing challenges in maintaining high-performance computing applications used in machine learning frameworks.

🧠 ChatGPT

AI · Bullish · arXiv – CS AI · Apr 6 · 6/10

🧠 Researchers have developed HIL-CBM, a new hierarchical interpretable AI model that enhances explainability by mimicking human cognitive processes across multiple semantic levels. The model outperforms existing Concept Bottleneck Models in classification accuracy while providing more interpretable explanations without requiring manual concept annotations.

AI · Bearish · arXiv – CS AI · Apr 6 · 6/10

🧠 Research comparing large language models (LLMs) to humans in group coordination tasks reveals that LLMs exhibit excessive volatility and switching behavior that impairs collective performance. Unlike humans, who adapt and stabilize over time, LLMs fail to improve across repeated coordination games and do not benefit from richer feedback mechanisms.

AI · Neutral · arXiv – CS AI · Apr 6 · 6/10

🧠 Researchers introduce DocShield, a new AI framework that uses evidence-based reasoning to detect text-based image forgeries in documents. The system combines visual and logical analysis to identify, locate, and explain document manipulations, showing significant improvements over existing detection methods.

🧠 GPT-4

AI · Neutral · arXiv – CS AI · Apr 6 · 6/10

🧠 An arXiv study shows that Active Preference Learning (APL) provides minimal improvements over random sampling when training modern LLMs with Direct Preference Optimization. The study found that random sampling performs nearly as well as sophisticated active selection methods while being computationally cheaper and avoiding capability degradation.

AI · Bullish · arXiv – CS AI · Apr 6 · 6/10

🧠 Researchers propose a fully end-to-end training paradigm for temporal sentence grounding in videos, introducing the Sentence Conditioned Adapter (SCADA) to better align video understanding with natural language queries. The method outperforms existing approaches by jointly optimizing video backbones and localization components rather than using frozen pre-trained encoders.

AI · Neutral · arXiv – CS AI · Apr 6 · 6/10

🧠 Researchers developed a new AI framework for detecting partial deepfake speech by splitting the problem into boundary detection and segment classification stages. The method achieves state-of-the-art performance on benchmark datasets, significantly improving detection and localization of manipulated audio regions within otherwise authentic speech.

AI · Bearish · arXiv – CS AI · Apr 6 · 6/10

🧠 Researchers have discovered LogicPoison, a new attack method that exploits vulnerabilities in Graph-based Retrieval-Augmented Generation (GraphRAG) systems by corrupting logical connections in knowledge graphs without altering text semantics. The attack successfully bypasses GraphRAG's existing defenses by targeting the topological integrity of underlying graphs, significantly degrading AI system performance.

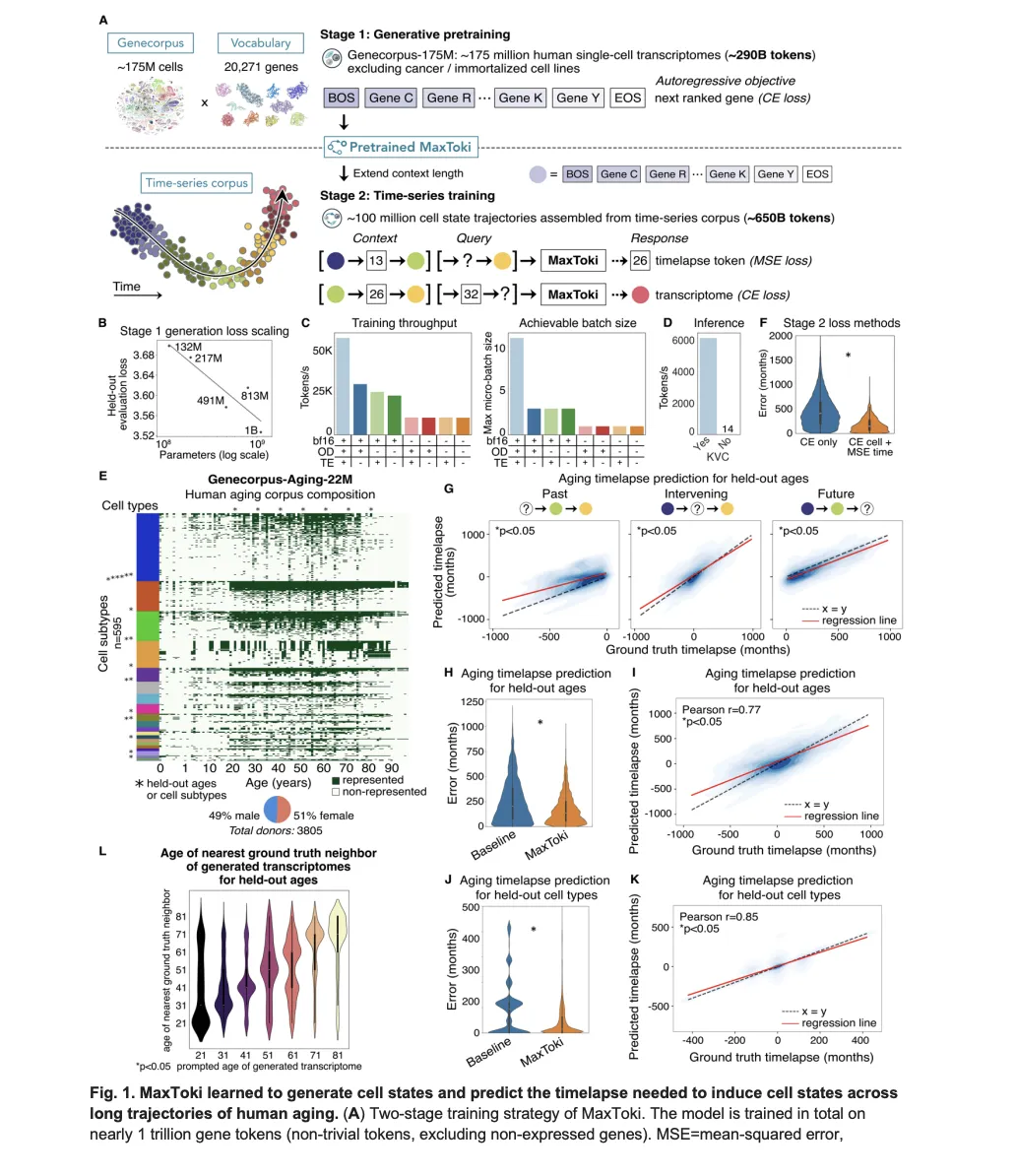

AI · Bullish · MarkTechPost · Apr 5 · 6/10

🧠 MaxToki is a new AI foundation model that can predict cellular aging patterns and trajectories, addressing a key limitation in existing biological models that only analyze cells as static snapshots. The technology represents a significant advancement in computational biology by incorporating temporal dynamics into cellular analysis.

AI · Bullish · arXiv – CS AI · Mar 31 · 5/10

AI · Neutral · arXiv – CS AI · Mar 27 · 6/10

🧠 Researchers introduce ReLope, a new routing method for multimodal large language models that uses KL-regularized LoRA probes and attention mechanisms to improve cost-performance balance. The method addresses the challenge of degraded probe performance when visual inputs are added to text-only LLMs.

AI · Bullish · arXiv – CS AI · Mar 27 · 6/10

🧠 Researchers introduce RC2, a reinforcement learning framework that improves multimodal AI reasoning by enforcing consistency between visual and textual representations. The system uses cycle-consistent training to resolve internal conflicts between modalities, achieving improvements of up to 7.6 points in reasoning accuracy without requiring additional labeled data.

AI · Bullish · arXiv – CS AI · Mar 27 · 6/10

🧠 Researchers introduce TRAJEVAL, a diagnostic framework that breaks down AI code agent performance into three stages (search, read, edit) to identify specific failure points rather than just binary pass/fail outcomes. The framework analyzed 16,758 trajectories and found that real-time feedback based on trajectory signals improved state-of-the-art models by 2.2-4.6 percentage points while reducing costs by 20-31%.

🧠 GPT-5

AI · Bullish · arXiv – CS AI · Mar 27 · 6/10

🧠 Researchers introduce Experiential Reflective Learning (ERL), a framework that enables AI agents to improve performance by learning from past experiences and generating transferable heuristics. The method shows a 7.8% improvement in success rates on the Gaia2 benchmark compared to baseline approaches.

AI · Bullish · arXiv – CS AI · Mar 27 · 6/10

🧠 Researchers introduce QuatRoPE, a novel positional embedding method that improves 3D spatial reasoning in Large Language Models by encoding object relations more efficiently. The method maintains linear scalability with the number of objects and preserves LLMs' original capabilities through the Isolated Gated RoPE Extension.

AI · Neutral · arXiv – CS AI · Mar 27 · 6/10

🧠 Researchers benchmarked 20 multimodal AI models on neuroimaging tasks using MRI and CT scans, finding that while identifying technical attributes such as imaging modality is nearly solved, diagnostic reasoning remains challenging. Gemini-2.5-Pro and GPT-5-Chat showed the strongest diagnostic performance, while the open-source MedGemma-1.5-4B demonstrated promising results under few-shot prompting.

🏢 Meta · 🧠 GPT-5 · 🧠 Gemini

AI · Neutral · arXiv – CS AI · Mar 27 · 6/10

🧠 Researchers introduce a new nonparametric method called signed isotonic R² for efficiently detecting problematic items in AI benchmarks and assessments. The method outperforms traditional diagnostic techniques across major AI datasets including GSM8K and MMLU, offering a lightweight solution for improving evaluation quality.

AI · Bullish · arXiv – CS AI · Mar 27 · 6/10

🧠 Researchers developed a framework using large language models (LLMs) as adaptive controllers for SIMP topology optimization, replacing fixed-schedule continuation with real-time parameter adjustments. The LLM agent achieved 5.7% to 18.1% better performance than baseline methods across multiple 2D and 3D engineering problems.

AI · Bullish · arXiv – CS AI · Mar 27 · 6/10

🧠 Researchers developed SAVe, a self-supervised AI framework that detects audio-visual deepfakes by learning from authentic videos rather than synthetic ones. The system identifies visual artifacts and audio-visual misalignment patterns to detect manipulated content, showing strong cross-dataset generalization capabilities.

AI · Bullish · arXiv – CS AI · Mar 27 · 6/10

🧠 Researchers developed lightweight generative AI models for creating synthetic network traffic data to address privacy concerns and data scarcity in network traffic classification. The models achieved up to 87% F1-score when classifiers were trained solely on synthetic data, with transformer-based approaches providing the best balance of accuracy and computational efficiency.

AI · Neutral · arXiv – CS AI · Mar 27 · 6/10

🧠 Researchers have developed TAAC, a framework for trustable audio-based depression diagnosis that protects user identity information while maintaining diagnostic accuracy. The system uses adversarial loss-based subspace decomposition to separate depression features from sensitive identity data, enabling secure AI-powered mental health screening.

AI · Neutral · arXiv – CS AI · Mar 27 · 6/10

🧠 A benchmarking study reveals demographic bias in multimodal large language models used for face verification, testing nine models across different ethnicity and gender groups. The research found that face-specialized models outperform general-purpose MLLMs, but accuracy doesn't correlate with fairness, and bias patterns differ from traditional face recognition systems.

🏢 Meta

AI · Neutral · arXiv – CS AI · Mar 27 · 6/10

🧠 Researchers evaluated whether large language models follow the Occam's Razor principle when performing inductive and abductive reasoning. They found that while LLMs can handle simple scenarios, they struggle with complex world models and with producing high-quality, simplified hypotheses. The study introduces a new framework for generating reasoning questions and an automated metric to assess hypothesis quality based on correctness and simplicity.