2519 articles tagged with #machine-learning. AI-curated summaries with sentiment analysis and key takeaways from 50+ sources.

AI · Bearish · Ars Technica – AI · Feb 20 · 6/10

🧠Microsoft deleted a blog post that instructed users to train AI models using a dataset containing pirated Harry Potter books. The company acknowledged the Harry Potter dataset was "mistakenly" marked as public domain, raising questions about data sourcing practices for AI training.

AI · Bullish · Google DeepMind Blog · Feb 19 · 6/10

🧠Google has announced Gemini 3.1 Pro, a new AI model designed for complex tasks that demand more sophisticated reasoning than simple question-and-answer exchanges. Google positions the model for demanding analytical and computational work.

AI · Bearish · MIT News – AI · Feb 18 · 6/10

🧠Research finds that LLMs with personalization features can begin to mirror users' viewpoints over the course of extended conversations. This behavior may reduce the accuracy of their responses and could create virtual echo chambers that reinforce users' existing beliefs.

AI · Bullish · Last Week in AI · Feb 16 · 7/10

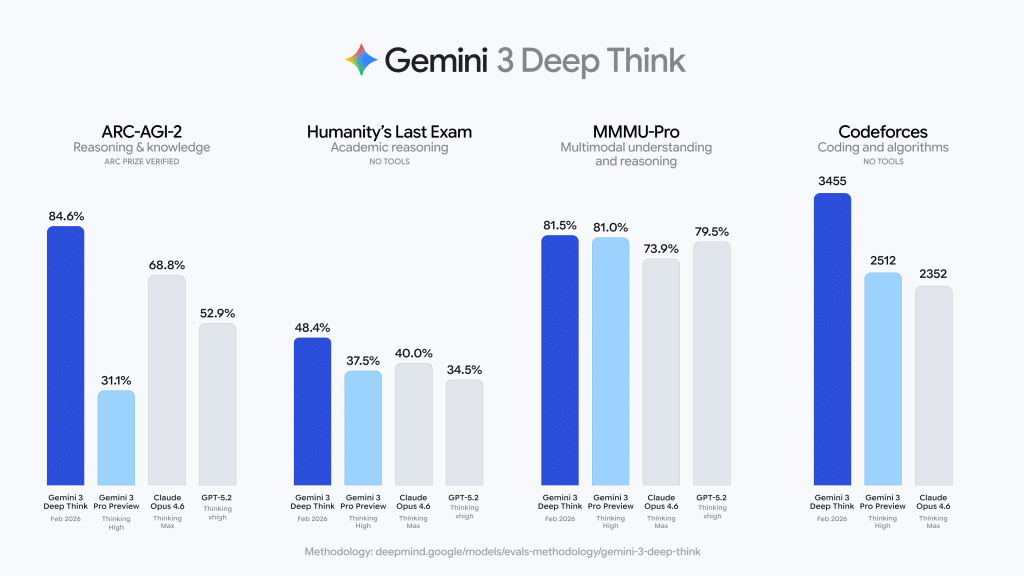

🧠Last Week in AI #335 covers an unusually busy stretch of major model releases, including Opus 4.6, Codex 5.3, Gemini 3 Deep Think, GLM 5, and Seedance 2.0, along with a number of smaller updates.

Crypto · Bullish · Chainalysis Blog · Feb 14 · 6/10

⛓️Chainalysis announced that Hexagate, its Web3 security solution, is now available to MegaETH builders, providing real-time threat detection for smart contracts, tokens, and protocols. Hexagate uses machine learning to flag suspicious patterns in blockchain transactions as they occur, helping developers identify execution risks, governance abuse, and token anomalies before they escalate.

AI · Bullish · MIT News – AI · Feb 5 · 6/10

🧠EnCompass is a new system that makes AI agents more efficient by backtracking and making multiple attempts to find the best outputs from large language models. By optimizing this search over candidate outputs, it could significantly improve how developers build and work with AI agents.

AI · Bullish · IEEE Spectrum – AI · Feb 4 · 6/10

🧠Google DeepMind has launched AlphaGenome, a deep-learning tool that analyzes the roughly 98% of human DNA that does not code for proteins, much of which helps regulate gene expression. The platform can predict 11 types of biological signals and is already used by thousands of scientists worldwide for cancer research, drug discovery, and synthetic DNA design.

AI · Neutral · IEEE Spectrum – AI · Feb 3 · 6/10

🧠Particle physicists are turning to AI and machine learning to analyze data from the Large Hadron Collider in search of new physics discoveries. As traditional methods struggle to find new fundamental particles beyond the Standard Model, researchers are using sophisticated algorithms to identify subtle patterns in petabytes of experimental data that human analysis might miss.

AI · Bullish · OpenAI News · Jan 29 · 6/10

🧠OpenAI has built an internal AI data agent that combines GPT-5, Codex, and memory to analyze large datasets and, according to the company, deliver reliable insights within minutes. The tool reflects growing use of AI agents for enterprise data analysis.

AI · Bullish · Hugging Face Blog · Jan 28 · 6/10

🧠The article describes using Claude to build CUDA kernels and to teach open-source models, showing that AI can handle low-level GPU programming and transfer that knowledge to other models. It highlights progress in AI-assisted development and model training techniques.

AI · Neutral · Google Research Blog · Jan 27 · 6/10

🧠ATLAS presents new scaling laws for multilingual generative AI models, offering practical frameworks for understanding how performance scales across languages and model sizes. The research provides guidance for developing and deploying multilingual AI systems more efficiently.

AI · Neutral · Hugging Face Blog · Jan 27 · 6/10

🧠The article describes practical approaches to agentic reinforcement learning (RL) training for GPT-OSS, OpenAI's open-weight model. It is a retrospective on the challenges and solutions encountered during training, focusing on technical implementation details and lessons learned.

AI · Bullish · Google Research Blog · Jan 22 · 6/10

🧠The article describes a method for improving intent extraction in AI systems by decomposing the task across smaller, specialized models. Breaking complex intent recognition into smaller, more manageable subtasks is meant to outperform larger, monolithic models.

AI · Bearish · IEEE Spectrum – AI · Jan 21 · 6/10

🧠Large language models (LLMs) remain highly vulnerable to prompt injection attacks, in which specific phrasing can override safety guardrails and cause AI systems to perform forbidden actions or reveal sensitive information. Unlike humans, who rely on contextual judgment and layered defenses, current LLMs cannot reliably judge whether a request is appropriate in context, and no known defense prevents such attacks universally.

AI · Neutral · MIT News – AI · Jan 20 · 6/10

🧠New research shows that overly aggregated machine-learning metrics can hide spurious correlations that AI models have learned. The study offers methods for detecting these hidden problems during evaluation, which can help improve model accuracy.

AI · Bullish · Microsoft Research Blog · Jan 20 · 6/10

🧠Microsoft Research introduces Argos, a multimodal reinforcement learning approach that uses an agentic verifier to evaluate whether AI agents' reasoning aligns with their observations over time. The system reduces visual hallucinations and creates more reliable, data-efficient agents for real-world applications.

AI · Bullish · Hugging Face Blog · Dec 23 · 6/10

🧠AprielGuard appears to be a new safety framework or tool that provides guardrails for large language models (LLMs), aimed at improving both safety and adversarial robustness. It reflects ongoing industry efforts to address security vulnerabilities and safety concerns in modern AI systems.

AI · Bullish · MIT News – AI · Dec 18 · 6/10

🧠CSAIL researchers have developed a guidance method that lets previously "untrainable" neural networks learn effectively by drawing on the built-in biases of other networks. The approach could unlock neural network architectures once considered too difficult to train.

AI · Bullish · MIT News – AI · Dec 17 · 5/10

🧠Researchers have developed an AI-powered "scientific sandbox" that lets them explore how vision systems evolve. The tool could inform the design of sensors and cameras used in robotics and autonomous vehicles.

AI · Bullish · MIT News – AI · Dec 16 · 5/10

🧠An AI-powered system enables users to create simple, multi-component physical objects by providing verbal descriptions. This represents an advancement in AI-driven manufacturing and design automation, bridging natural language processing with physical object creation.

AI · Bullish · Microsoft Research Blog · Dec 11 · 6/10

🧠Microsoft Research introduced Agent Lightning, a system that lets developers add reinforcement learning to AI agents with little or no code rewriting. It decouples agent execution from training and converts each agent action into reinforcement learning data that is used to improve performance.

AI · Neutral · OpenAI News · Dec 11 · 6/10

🧠OpenAI has released GPT-5.2, the latest model in the GPT-5 series, maintaining the same comprehensive safety mitigation approach as previous versions. The model was trained on diverse datasets including publicly available internet information, third-party partnerships, and user-generated content.

AI · Neutral · Last Week in AI · Dec 9 · 6/10

🧠DeepSeek released version 3.2 of its AI model, claiming improvements in speed, cost-efficiency, and performance. NVIDIA partners are reportedly shifting toward Google's TPU ecosystem, while new research explores nested learning in deep learning architectures.

AI · Neutral · Import AI (Jack Clark) · Dec 8 · 6/10

🧠Facebook researchers propose developing 'co-improving AI' systems rather than self-improving AI, suggesting a collaborative approach to AI advancement. The Import AI newsletter also covers reinforcement learning developments and discusses potential user annoyance with AI content labels.

AI · Bullish · Hugging Face Blog · Dec 5 · 6/10

🧠A new Swift client library, swift-huggingface, has been released, providing full integration with Hugging Face's AI model ecosystem. It lets iOS and macOS developers access and use Hugging Face's machine learning models directly in their Swift applications.