329 articles tagged with #open-source. AI-curated summaries with sentiment analysis and key takeaways from 50+ sources.

AI · Bullish · arXiv – CS AI · 1d ago · 7/10

🧠Researchers introduce JanusCoder, a foundational multimodal AI model that bridges visual and programmatic intelligence by processing both code and visual outputs. The team created JanusCode-800K, the largest multimodal code corpus, enabling their 7B-14B parameter models to match or exceed commercial AI performance on code generation tasks combining textual instructions and visual inputs.

AI · Bullish · Blockonomi · 2d ago · 7/10

🧠Nvidia released open-source Ising quantum AI models designed to improve quantum computing calibration speed and error correction, driving stock gains. The move signals Nvidia's strategic expansion into quantum computing infrastructure, a field expected to reshape computational capabilities across industries.

🏢 Nvidia

AI × Crypto · Bullish · arXiv – CS AI · 2d ago · 7/10

🤖Researchers have developed presidio-hardened-x402, an open-source middleware that filters personally identifiable information from AI agent payment requests using the x402 protocol before data reaches payment servers or centralized APIs. The tool achieves 97.2% precision in detecting PII with minimal latency, addressing a critical privacy gap where payment metadata is currently transmitted without data processing agreements.

AI · Bullish · arXiv – CS AI · 2d ago · 7/10

🧠UniToolCall introduces a standardized framework unifying tool-use representation, training data, and evaluation for LLM agents. The framework combines 22k+ tools and 390k+ training instances with a unified evaluation methodology, enabling fine-tuned models like Qwen3-8B to achieve 93% precision—surpassing GPT, Gemini, and Claude in specific benchmarks.

🧠 Claude · 🧠 Gemini

AI · Bullish · arXiv – CS AI · 2d ago · 7/10

🧠Researchers introduce GIANTS, a framework for training language models to anticipate scientific breakthroughs by synthesizing insights from foundational papers. The team releases GiantsBench, a 17k-example benchmark across eight scientific domains, and GIANTS-4B, a 4B-parameter model that outperforms larger proprietary baselines by 34% while generalizing to unseen research areas.

AI · Neutral · arXiv – CS AI · 2d ago · 7/10

🧠Researchers introduce AgencyBench, a comprehensive benchmark for evaluating autonomous AI agents across 32 real-world scenarios requiring up to 1 million tokens and 90 tool calls. The evaluation reveals closed-source models like Claude significantly outperform open-source alternatives (48.4% vs 32.1%), with notable performance variations based on execution frameworks and model optimization.

🧠 Claude

AI · Bullish · arXiv – CS AI · 2d ago · 7/10

🧠SemaClaw is an open-source framework addressing the shift from prompt engineering to 'harness engineering'—building infrastructure for controllable, auditable AI agents. Announced alongside OpenClaw's mass adoption in early 2026, it enables persistent personal AI agents through DAG-based orchestration, behavioral safety systems, and automated knowledge base construction.

AI · Bullish · arXiv – CS AI · 2d ago · 7/10

🧠Researchers introduce soul.py, an open-source architecture addressing catastrophic forgetting in AI agents by distributing identity across multiple memory systems rather than centralizing it. The framework implements persistent identity through separable components and a hybrid RAG+RLM retrieval system, drawing inspiration from how human memory survives neurological damage.

AI · Bullish · arXiv – CS AI · 2d ago · 7/10

🧠Researchers introduce Audio Flamingo Next (AF-Next), an advanced open-source audio-language model that processes speech, sound, and music with support for inputs up to 30 minutes. The model incorporates a new temporal reasoning approach and demonstrates competitive or superior performance compared to larger proprietary alternatives across 20 benchmarks.

AI · Bullish · arXiv – CS AI · 3d ago · 7/10

🧠LLM-Rosetta is an open-source translation framework that solves API fragmentation across major Large Language Model providers by establishing a standardized intermediate representation. The hub-and-spoke architecture enables bidirectional conversion between OpenAI, Anthropic, and Google APIs with minimal overhead, addressing the O(N²) adapter problem that currently locks applications into specific vendors.

🏢 OpenAI · 🏢 Anthropic
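
To make the O(N²) adapter point concrete, here is a minimal sketch of a hub-and-spoke converter. It is not LLM-Rosetta's code: the provider names (`provider_a`, `provider_b`), their message fields, and the hub format are all hypothetical, chosen only to show why a shared intermediate representation needs two converters per provider instead of one per provider pair.

```python
from typing import Callable, Dict

Message = Dict[str, str]

# Hypothetical hub format: {"role": ..., "text": ...}.
# Each provider contributes exactly two functions: into the hub and out of it.
TO_HUB: Dict[str, Callable[[Message], Message]] = {
    "provider_a": lambda m: {"role": m["role"], "text": m["content"]},
    "provider_b": lambda m: {"role": m["author"], "text": m["body"]},
}
FROM_HUB: Dict[str, Callable[[Message], Message]] = {
    "provider_a": lambda m: {"role": m["role"], "content": m["text"]},
    "provider_b": lambda m: {"author": m["role"], "body": m["text"]},
}


def convert(message: Message, source: str, target: str) -> Message:
    """Translate source -> hub -> target; no pairwise adapter is needed."""
    return FROM_HUB[target](TO_HUB[source](message))


def direct_adapters(n: int) -> int:
    """One-way converters needed if every format maps directly to every other."""
    return n * (n - 1)


def hub_adapters(n: int) -> int:
    """One-way converters needed with a hub: one in and one out per provider."""
    return 2 * n


if __name__ == "__main__":
    print(convert({"role": "user", "content": "hi"}, "provider_a", "provider_b"))
    for n in (3, 5, 10):
        print(n, direct_adapters(n), hub_adapters(n))
```

With three providers the two approaches need the same number of converters (6); the gap opens as providers are added, for example 90 direct converters versus 20 hub converters at ten providers.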

AI · Bullish · arXiv – CS AI · 6d ago · 7/10

🧠Researchers have developed a scalable system for interpreting and controlling large language models distributed across multiple GPUs, achieving up to 7x memory reduction and 41x throughput improvements. The method enables real-time behavioral steering of frontier LLMs like LLaMA and Qwen without fine-tuning, with results released as open-source tooling.

AI · Bullish · arXiv – CS AI · Apr 7 · 7/10

🧠MemMachine is an open-source memory system for AI agents that preserves conversational ground truth and achieves superior accuracy-efficiency tradeoffs compared to existing solutions. The system integrates short-term, long-term episodic, and profile memory while using 80% fewer input tokens than comparable systems like Mem0.

🧠 GPT-4 · 🧠 GPT-5

AI · Bullish · arXiv – CS AI · Apr 7 · 7/10

🧠Researchers developed QED-Nano, a 4B parameter AI model that achieves competitive performance on Olympiad-level mathematical proofs despite being much smaller than proprietary systems. The model uses a three-stage training approach including supervised fine-tuning, reinforcement learning, and reasoning cache expansion to match larger models at a fraction of the inference cost.

🧠 Gemini

AI · Bullish · MarkTechPost · Apr 6 · 7/10

🧠RightNow AI has released AutoKernel, an open-source framework that uses autonomous LLM agents to optimize GPU kernels for PyTorch models. This tool aims to automate the complex process of writing efficient GPU code, addressing one of the most challenging aspects of machine learning engineering.

AI · Bullish · arXiv – CS AI · Apr 6 · 7/10

🧠JoyAI-LLM Flash is a new efficient Mixture-of-Experts language model with 48B parameters that activates only 2.7B per forward pass, trained on 20 trillion tokens. The model introduces FiberPO, a novel reinforcement learning algorithm, and achieves higher sparsity ratios than comparable industry models while being released open-source on Hugging Face.

🏢 Hugging Face
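
As a rough illustration of what "activates only 2.7B of 48B parameters" means, the sketch below shows generic top-k expert routing, the usual mechanism behind sparse Mixture-of-Experts layers. The summary does not describe JoyAI-LLM Flash's actual router, so the gate logits, the value of `k`, and the function names are assumptions for illustration only.

```python
import math
from typing import List, Tuple


def top_k_route(gate_logits: List[float], k: int) -> List[Tuple[int, float]]:
    """Pick the k highest-scoring experts and softmax-normalize their weights.

    Only the selected experts run for this token; the rest stay idle,
    which is why active parameters are far fewer than total parameters.
    """
    top = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)[:k]
    exps = [math.exp(gate_logits[i]) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def active_fraction(active_params_b: float, total_params_b: float) -> float:
    """Share of parameters used per forward pass (both values in billions)."""
    return active_params_b / total_params_b


if __name__ == "__main__":
    # Hypothetical gate scores for four experts; experts 1 and 3 are selected.
    print(top_k_route([0.1, 2.0, -1.0, 1.5], k=2))
    # Figures from the summary: 2.7B active out of 48B total, about 5.6%.
    print(f"{active_fraction(2.7, 48.0):.1%}")
```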

AI · Bullish · arXiv – CS AI · Apr 6 · 7/10

🧠Researchers introduce IMAgent, an open-source visual AI agent trained with reinforcement learning to handle multi-image reasoning tasks. The system addresses limitations of current VLM-based agents that only process single images, using specialized tools for visual reflection and verification to maintain attention on image content throughout inference.

🏢 OpenAI · 🧠 o1 · 🧠 o3

AI · Bullish · arXiv – CS AI · Apr 6 · 7/10

🧠Researchers propose Council Mode, a multi-agent consensus framework that reduces AI hallucinations by 35.9% by routing queries to multiple diverse LLMs and synthesizing their outputs through a dedicated consensus model. The system operates through intelligent triage classification, parallel expert generation, and structured consensus synthesis to address factual accuracy issues in large language models.
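
The three stages named above (triage, parallel expert generation, consensus synthesis) can be sketched roughly as follows. This is a toy under stated assumptions, not the paper's system: the experts are plain Python callables standing in for LLM calls, the triage rule is a hypothetical word-count threshold, and a majority vote substitutes for the dedicated consensus model.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List

Expert = Callable[[str], str]


def triage(query: str) -> str:
    """Hypothetical triage rule: very short queries skip the council."""
    return "simple" if len(query.split()) < 4 else "council"


def council_answer(query: str, experts: List[Expert]) -> str:
    """Route the query, fan out to all experts in parallel, then reconcile."""
    if triage(query) == "simple":
        return experts[0](query)
    with ThreadPoolExecutor(max_workers=len(experts)) as pool:
        answers = list(pool.map(lambda expert: expert(query), experts))
    # Stand-in for the consensus model: keep the most common answer.
    return Counter(answers).most_common(1)[0][0]


if __name__ == "__main__":
    # Stub experts; in practice each would call a different LLM.
    experts = [lambda q: "Paris", lambda q: "Paris", lambda q: "Lyon"]
    print(council_answer("What is the capital city of France?", experts))
```

A majority vote only works when answers are directly comparable strings; free-form responses would need a synthesis step, which is the role the summary assigns to the consensus model.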

AI · Bullish · arXiv – CS AI · Apr 6 · 7/10

🧠Researchers analyzed data movement patterns in large-scale Mixture of Experts (MoE) language models (200B-1000B parameters) to optimize inference performance. Their findings led to architectural modifications achieving 6.6x speedups on wafer-scale GPUs and up to 1.25x improvements on existing systems through better expert placement algorithms.

🏢 Hugging Face

AI · Bearish · arXiv – CS AI · Mar 27 · 7/10

🧠Research reveals that open-source large language models (LLMs) lack hierarchical knowledge of visual taxonomies, creating a bottleneck for vision LLMs in hierarchical visual recognition tasks. The study used one million visual question answering tasks across six taxonomies to demonstrate this limitation, finding that even fine-tuning cannot overcome the underlying LLM knowledge gaps.

AI · Bullish · arXiv – CS AI · Mar 27 · 7/10

🧠Researchers propose SWAA (Sliding Window Attention Adaptation), a toolkit that enables efficient long-context processing in large language models by adapting full attention models to sliding window attention without expensive retraining. The solution achieves 30-100% speedups for long context inference while maintaining acceptable performance quality through four core strategies that address training-inference mismatches.
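
For readers unfamiliar with sliding window attention, the sketch below builds the attention mask it implies and compares it with a full causal mask. It illustrates only the general mechanism; SWAA's four adaptation strategies are not described in the summary and are not reproduced here, and the sequence length and window size are arbitrary example values.

```python
from typing import List

Mask = List[List[bool]]


def causal_mask(seq_len: int) -> Mask:
    """Full causal attention: position i may attend to every j <= i."""
    return [[j <= i for j in range(seq_len)] for i in range(seq_len)]


def sliding_window_mask(seq_len: int, window: int) -> Mask:
    """Causal attention limited to the most recent `window` positions."""
    return [[j <= i and i - j < window for j in range(seq_len)] for i in range(seq_len)]


def attended_pairs(mask: Mask) -> int:
    """Number of (query, key) pairs computed, a proxy for attention cost."""
    return sum(sum(row) for row in mask)


if __name__ == "__main__":
    full = causal_mask(6)
    local = sliding_window_mask(6, window=3)
    for row in local:
        print("".join("x" if allowed else "." for allowed in row))
    print(attended_pairs(full), attended_pairs(local))
```

Full causal attention grows quadratically with sequence length, while the windowed mask grows linearly once the sequence exceeds the window, which is where long-context speedups come from.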

AI · Bullish · TechCrunch – AI · Mar 26 · 7/10

🧠Mistral has released a new open-source speech generation model that is lightweight enough to run on mobile devices including smartwatches and smartphones. This represents a significant advancement in making AI speech capabilities more accessible and portable for edge computing applications.

AI · Bullish · MarkTechPost · Mar 26 · 7/10

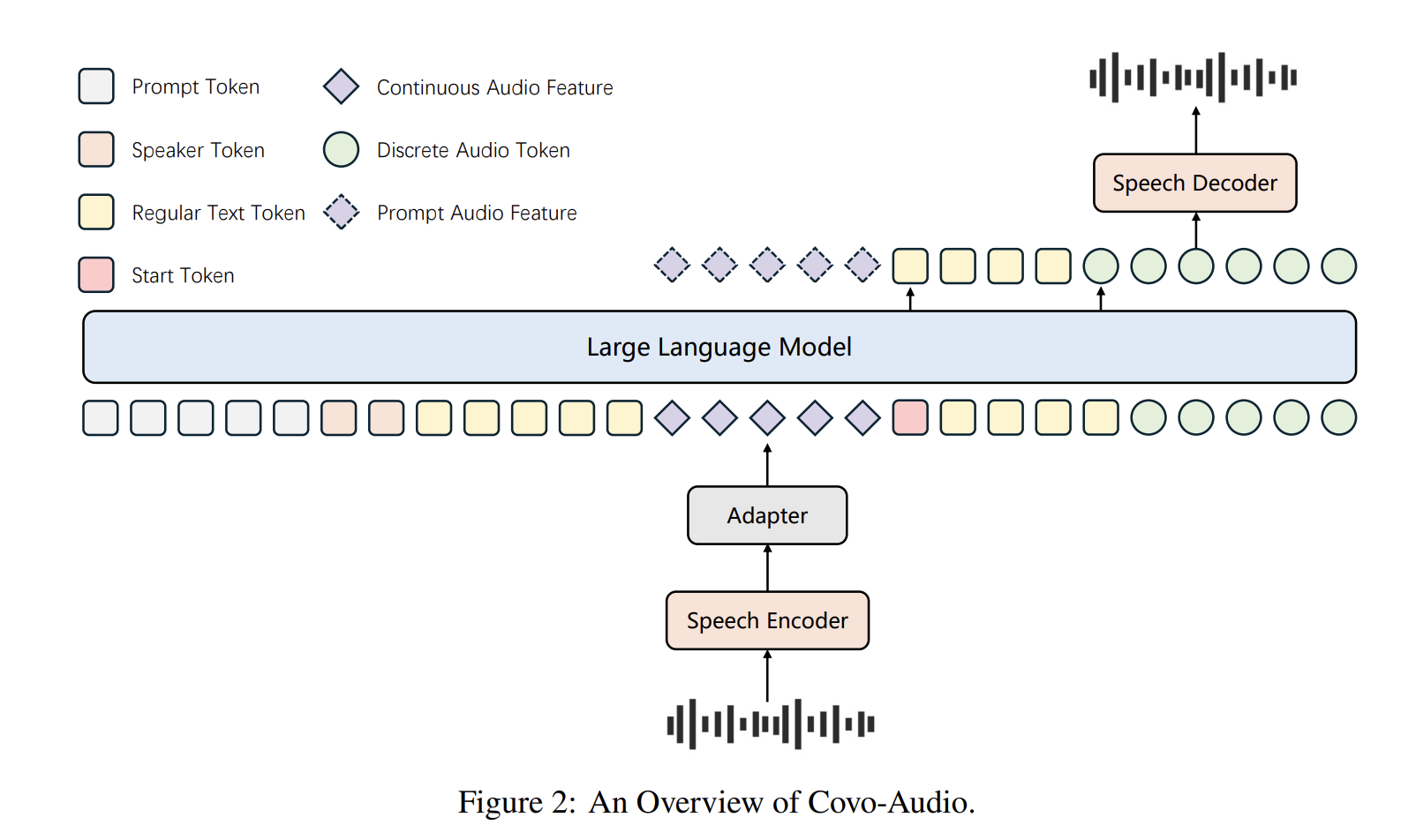

🧠Tencent AI Lab has open-sourced Covo-Audio, a 7B-parameter Large Audio Language Model that can process continuous audio inputs and generate audio outputs in real-time. The model unifies speech processing and language intelligence within a single end-to-end architecture designed for seamless cross-modal interaction.

AI · Bullish · arXiv – CS AI · Mar 26 · 7/10

🧠Researchers have created OSS-CRS, an open framework that makes DARPA's AI Cyber Challenge systems usable for real-world cybersecurity applications. Using it, they ported the winning Atlantis CRS and discovered 10 previously unknown bugs, including three high-severity issues, across 8 open-source projects.

AI · Bullish · arXiv – CS AI · Mar 26 · 7/10

🧠Researchers have released DanQing, a large-scale Chinese vision-language dataset containing 100 million high-quality image-text pairs curated from Common Crawl data. The dataset addresses the bottleneck in Chinese VLP development and demonstrates superior performance compared to existing Chinese datasets across various AI tasks.

AI · Bullish · arXiv – CS AI · Mar 26 · 7/10

🧠Alberta Health Services deployed Berta, an open-source AI scribe platform that reduces clinical documentation costs by 70-95% compared to commercial alternatives. The system was used by 198 emergency physicians across 105 facilities, generating over 22,000 clinical sessions while keeping all data within secure health system infrastructure.