45 articles tagged with #speech-recognition. AI-curated summaries with sentiment analysis and key takeaways from 50+ sources.

AI · Bullish · arXiv – CS AI · Mar 27 · 7/10

🧠Ming-Flash-Omni is a new 100 billion parameter multimodal AI model with Mixture-of-Experts architecture that uses only 6.1 billion active parameters per token. The model demonstrates unified capabilities across vision, speech, and language tasks, achieving performance comparable to Gemini 2.5 Pro on vision-language benchmarks.

🧠 Gemini

AI · Bullish · arXiv – CS AI · Mar 26 · 7/10

🧠Alberta Health Services deployed Berta, an open-source AI scribe platform that reduces clinical documentation costs by 70-95% compared to commercial alternatives. The system was used by 198 emergency physicians across 105 facilities, generating over 22,000 clinical sessions while keeping all data within secure health system infrastructure.

AI · Bearish · arXiv – CS AI · Mar 17 · 7/10

🧠Researchers developed SWhisper, a framework that uses near-ultrasonic audio to deliver covert jailbreak attacks against speech-driven AI systems. The technique is inaudible to humans but can successfully bypass AI safety measures with up to 94% effectiveness on commercial models.

AI · Bullish · arXiv – CS AI · Mar 3 · 7/10 · 3

🧠Researchers have released WAXAL, a large-scale multilingual speech dataset covering 24 Sub-Saharan African languages representing over 100 million speakers. The dataset includes 1,250 hours of transcribed speech for ASR and 235 hours of high-quality recordings for TTS, released under a CC-BY-4.0 license to advance inclusive AI technologies.

AI · Bullish · MIT News – AI · Dec 5 · 7/10 · 6

🧠MIT researchers have developed a speech-to-reality system that combines 3D generative AI with robotic assembly to create physical objects on demand from voice commands. The technology represents a significant advancement in AI-driven manufacturing and automation capabilities.

AI · Bullish · OpenAI News · Apr 24 · 7/10 · 6

🧠OpenAI has released APIs for ChatGPT and Whisper models, allowing developers to integrate these AI capabilities directly into their applications and products. This marks a significant step in making advanced conversational AI and speech recognition technology accessible to third-party developers.

AI · Bullish · OpenAI News · Sep 21 · 7/10 · 7

🧠OpenAI has trained and open-sourced Whisper, a neural network for speech recognition that approaches human-level robustness and accuracy on English speech. The model represents a significant advancement in AI speech recognition technology and is being made freely available to the community.

AI · Bullish · arXiv – CS AI · 3d ago · 6/10

🧠Researchers propose Interactive ASR, a new framework that combines semantic-aware evaluation using LLM-as-a-Judge with multi-turn interactive correction to improve automatic speech recognition beyond traditional word error rate metrics. The approach simulates human-like interaction, enabling iterative refinement of recognition outputs across English, Chinese, and code-switching datasets.
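
For context on the metric this summary refers to, here is a minimal sketch of word error rate (WER), computed as the word-level edit distance divided by the number of reference words. It is not taken from the paper; the function name and the example sentences are illustrative assumptions. It shows why WER alone can miss meaning: two hypotheses with the same WER can differ sharply in how much they change the sentence.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # (before the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]  # distance from i reference words to an empty hypothesis
        for j, hyp_word in enumerate(hyp, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,                      # deletion
                curr[j - 1] + 1,                  # insertion
                prev[j - 1] + substitution_cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


# Hypothetical example sentences (not from the paper).
reference = "turn the lights off"
meaning_preserved = "turn the light off"   # minor slip
meaning_reversed = "turn the lights on"    # opposite command

print(word_error_rate(reference, meaning_preserved))  # 0.25
print(word_error_rate(reference, meaning_reversed))   # 0.25
```

Both hypotheses contain one substituted word out of four, so WER scores them identically even though only one reverses the command. A semantic-aware judge is meant to separate cases like these.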

AI · Bearish · arXiv – CS AI · Mar 27 · 6/10

🧠Researchers introduced WildASR, a multilingual diagnostic benchmark revealing that current ASR systems suffer severe performance degradation in real-world conditions despite achieving near-human accuracy on curated tests. The study found that ASR models often hallucinate plausible but unspoken content under degraded inputs, creating safety risks for voice agents.

AI · Bullish · MarkTechPost · Mar 26 · 6/10

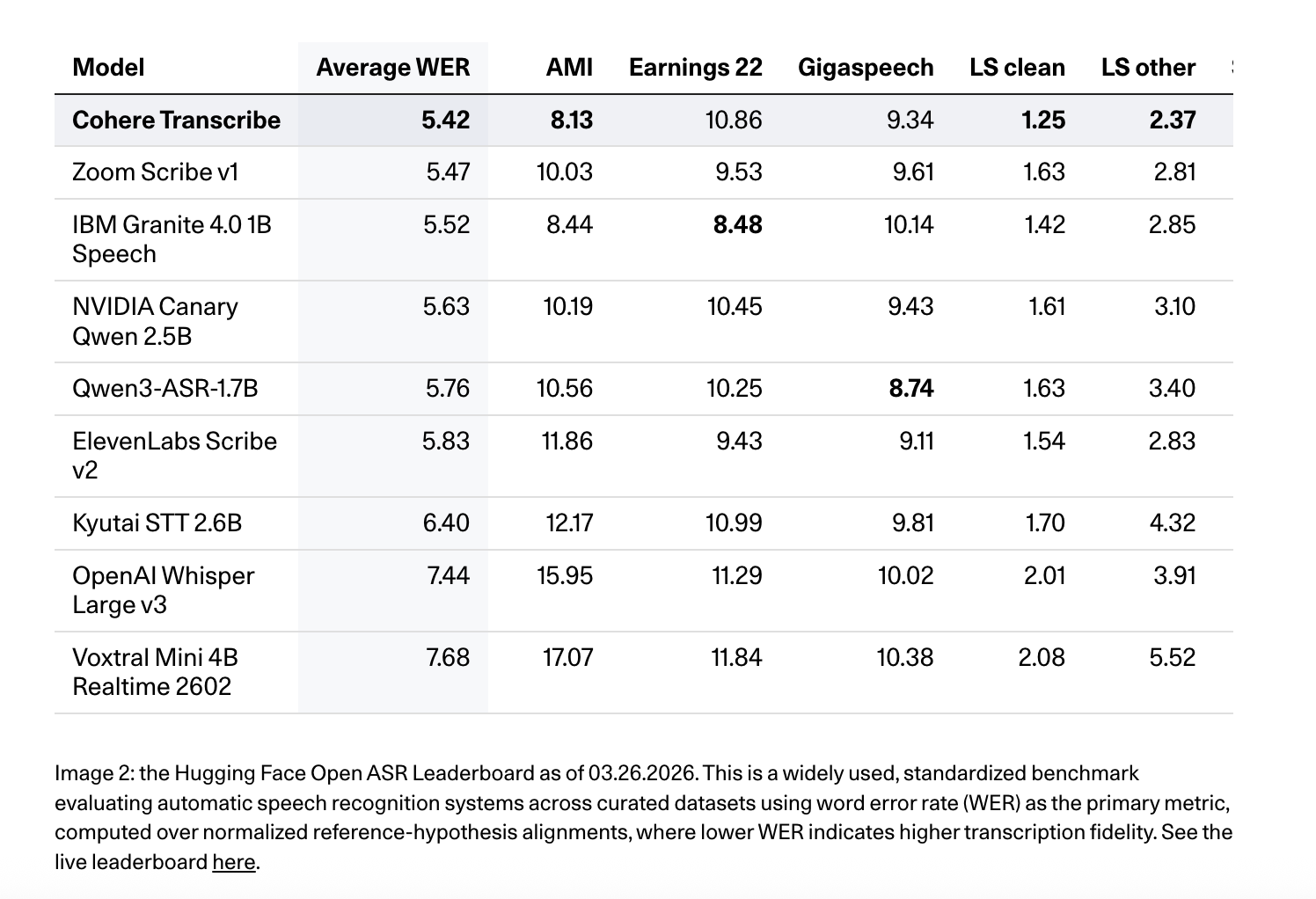

🧠Cohere AI has released Cohere Transcribe, a new state-of-the-art Automatic Speech Recognition (ASR) model designed for enterprise applications. This marks the company's expansion beyond text generation and embedding models into the speech recognition market, targeting enterprise speech intelligence solutions.

🏢 Cohere

AI · Bullish · MarkTechPost · Mar 17 · 6/10

🧠Google AI has released WAXAL, an open multilingual speech dataset covering 24 African languages to improve Automatic Speech Recognition and Text-to-Speech systems. This addresses the significant data distribution problem where African languages remain poorly represented in speech technology training corpora.

🏢 Google

AI · Bullish · MarkTechPost · Mar 16 · 6/10

🧠IBM has released Granite 4.0 1B Speech, a compact multilingual speech-language model optimized for automatic speech recognition and translation. The model is specifically designed for enterprise and edge deployments where memory efficiency, low latency, and compute optimization are critical alongside performance quality.

AI · Bullish · arXiv – CS AI · Mar 12 · 6/10

🧠Researchers developed a protocol to evaluate speaker verification capabilities in speech-aware large language models, finding weak performance with error rates above 20%. They introduced ECAPA-LLM, a lightweight augmentation that achieves a 1.03% error rate by integrating speaker embeddings while maintaining a natural language interface.

AI · Neutral · arXiv – CS AI · Mar 11 · 6/10

🧠Researchers introduce SCENEBench, a new benchmark for evaluating Large Audio Language Models (LALMs) beyond speech recognition, focusing on real-world audio understanding including background sounds, noise localization, and vocal characteristics. Testing of five state-of-the-art models revealed significant performance gaps: the models scored below random chance on some tasks while achieving high accuracy on others.

AI · Bullish · arXiv – CS AI · Mar 4 · 5/10 · 3

🧠Researchers developed GLoRIA, a parameter-efficient framework for automatic speech recognition that adapts to regional dialects using location metadata. The system achieves state-of-the-art performance while updating less than 10% of model parameters and demonstrates strong generalization to unseen dialects.

AI · Bullish · arXiv – CS AI · Mar 3 · 7/10 · 7

🧠Researchers introduce Whisper-MLA, a modified version of OpenAI's Whisper speech recognition model that uses Multi-Head Latent Attention to reduce GPU memory consumption by up to 87.5% while maintaining accuracy. The innovation addresses a key scalability issue with transformer-based ASR models when processing long-form audio.

AI · Bullish · arXiv – CS AI · Mar 2 · 6/10 · 10

🧠Researchers developed SHINE, a Sequential Hierarchical Integration Network for analyzing brain signals (EEG/MEG) to detect speech from neural activity. The system achieved high F1-macro scores of 0.9155-0.9184 in the LibriBrain Competition 2025 by reconstructing speech-silence patterns from magnetoencephalography signals.

AI · Bullish · arXiv – CS AI · Mar 2 · 7/10 · 12

🧠Researchers have introduced Hello-Chat, an end-to-end audio language model designed to create more realistic and emotionally resonant AI conversations. The model addresses the robotic nature of existing Large Audio Language Models by using real-life conversation data and achieving breakthrough performance in prosodic naturalness and emotional alignment.

AI · Bullish · arXiv – CS AI · Feb 27 · 6/10 · 7

🧠Researchers developed a new AI framework using an RNN-T architecture to improve speech recognition for Taiwanese Hakka, an endangered low-resource language with high dialectal variability. The system achieved 57% and 40% relative error rate reductions for two different writing systems, marking the first systematic investigation into Hakka dialect variations in ASR.

AI · Neutral · Apple Machine Learning · Feb 25 · 6/10 · 3

🧠Research identifies a significant performance gap between speech-adapted Large Language Models and their text-based counterparts on language understanding tasks. Current approaches to bridge this gap rely on expensive large-scale speech synthesis methods, highlighting a key challenge in extending LLM capabilities to audio inputs.

AI · Neutral · Apple Machine Learning · Feb 24 · 6/10 · 2

🧠Researchers introduce AMUSE, a new benchmark for evaluating multimodal large language models in multi-speaker dialogue scenarios. The framework addresses current limitations of models like GPT-4o in tracking speakers, maintaining conversational roles, and reasoning across audio-visual streams in applications such as conversational video assistants.

AI · Bullish · Microsoft Research Blog · Feb 5 · 6/10 · 3

🧠Microsoft Research launched Paza, a human-centered speech recognition pipeline, and PazaBench, the first benchmark leaderboard specifically designed for low-resource languages. The initiative covers 39 African languages with 52 models and has been tested with real communities to improve AI accessibility for underrepresented languages.

AI · Neutral · arXiv – CS AI · Mar 26 · 4/10

🧠Researchers developed a new training framework to address contextual exposure bias in Speech-LLMs, where models trained on perfect conversation history perform poorly with error-prone real-world context. Their approach combines teacher error knowledge, context dropout, and direct preference optimization to improve robustness, reducing word error rate (WER) from 5.59% to 5.17% on TED-LIUM 3.
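
To put the reported figures in perspective, the short sketch below converts the two WER values from the summary into an absolute and a relative reduction. The helper name `relative_reduction` is an illustrative assumption, not something from the paper.

```python
def relative_reduction(baseline: float, improved: float) -> float:
    """Fraction of the baseline error removed by the improved system."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - improved) / baseline


baseline_wer = 5.59  # WER (%) before, as quoted in the summary
improved_wer = 5.17  # WER (%) after, as quoted in the summary

absolute = baseline_wer - improved_wer
relative = relative_reduction(baseline_wer, improved_wer)

print(f"absolute: {absolute:.2f} points, relative: {relative:.1%}")
# absolute: 0.42 points, relative: 7.5%
```

A drop of 0.42 percentage points therefore corresponds to removing roughly 7.5% of the baseline errors.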

AI · Bullish · arXiv – CS AI · Mar 17 · 5/10

🧠Researchers have developed a Video-Guided Post-ASR Correction (VPC) framework that uses Video-Large Multimodal Models to improve speech recognition accuracy in complex environments like TV series. The system addresses challenges with multiple speakers, overlapping speech, and domain-specific terminology by leveraging video context to refine ASR outputs.

AI · Neutral · arXiv – CS AI · Mar 17 · 5/10

🧠Researchers developed a novel Bayesian Low-rank Adaptation method for personalizing automatic speech recognition systems to better understand impaired speech. The approach addresses challenges in ASR systems like Whisper that struggle with non-normative speech patterns from conditions like cerebral palsy, using data-efficient fine-tuning on English and German datasets.