11,519 AI articles curated from 50+ sources with AI-powered sentiment analysis, importance scoring, and key takeaways.

AI · Bullish · Blockonomi · Mar 16 · 7/10

🧠OpenAI is negotiating a $10 billion joint venture with major private equity firms TPG, Bain, and Brookfield, while competitor Anthropic pursues a rival deal with Blackstone. Both AI companies are targeting enterprise markets as they prepare for potential future IPOs.

🏢 OpenAI · 🏢 Anthropic

AI · Bullish · Blockonomi · Mar 16 · 7/10

🧠Intel stock surged 4.4% following news of potential collaboration with Nvidia, along with AI partnerships with Ericsson and Infosys. The rally was also driven by progress reports on Intel's advanced 18A manufacturing process.

🏢 Nvidia

AI · Neutral · TechCrunch – AI · Mar 16 · 7/10

🧠Nvidia's flagship GTC 2026 conference will feature CEO Jensen Huang's keynote address focusing on the company's vision for the future of computing and AI. The annual event typically serves as a platform for announcing new products, partnerships, and strategic directions for the chipmaker.

🏢 Nvidia

AI · Bullish · IEEE Spectrum – AI · Mar 16 · 7/10

🧠Tower Semiconductor and Scintil Photonics have developed the world's first single-chip DWDM light engine for AI infrastructure, integrating multiple laser wavelengths onto silicon wafers. This breakthrough enables dense wavelength division multiplexing in AI data centers, allowing multiple optical signals over single fibers to reduce power consumption and latency while connecting dozens of GPUs.

🏢 Nvidia

AI · Bullish · Blockonomi · Mar 16 · 7/10

🧠Nebius (NBIS) stock surged following the announcement of a massive five-year AI infrastructure partnership with Meta valued at up to $27 billion. The deal includes $12 billion in guaranteed contracts and an additional $15 billion in optional agreements, positioning Nebius as a major AI infrastructure provider.

🏢 Meta

AI · Bullish · Blockonomi · Mar 16 · 7/10

🧠Taiwan Semiconductor (TSM) stock has surged 83%, with Bernstein upgrading the stock to an NT$2,200 target price. The company expects AI revenue to exceed 20% by 2026 as part of a $45 billion expansion plan.

AI · Neutral · Blockonomi · Mar 16 · 7/10

🧠Meta is reportedly considering a potential 20% workforce reduction that could generate up to $8 billion in annual savings. This strategic move appears aligned with the company's pivot toward AI-focused operations and cost optimization efforts.

AI · Bearish · AI News · Mar 16 · 7/10

🧠OpenAI's Frontier platform, launched in February, positions AI agents as a semantic layer connecting enterprise systems, potentially disrupting traditional SaaS revenue models. The platform aims to integrate data warehouses, CRM platforms, and internal tools, challenging the existing software industry architecture.

🏢 OpenAI

AI · Bullish · Blockonomi · Mar 16 · 7/10

🧠Micron (MU) is set to report Q2 FY26 earnings on March 18, with analysts expecting massive growth driven by AI demand for high-bandwidth memory (HBM). Revenue is projected at $19.1B, representing a 137% year-over-year increase, as AI applications create demand that exceeds current supply capacity.

AI · Bearish · Wired – AI · Mar 16 · 7/10

🧠A WIRED investigation found dozens of Telegram channels advertising jobs for 'AI face models,' recruiting mostly women to serve as the faces of AI-powered financial scams. The models are likely used on video calls with victims to build trust before defrauding them.

AI · Bearish · Last Week in AI · Mar 16 · 7/10

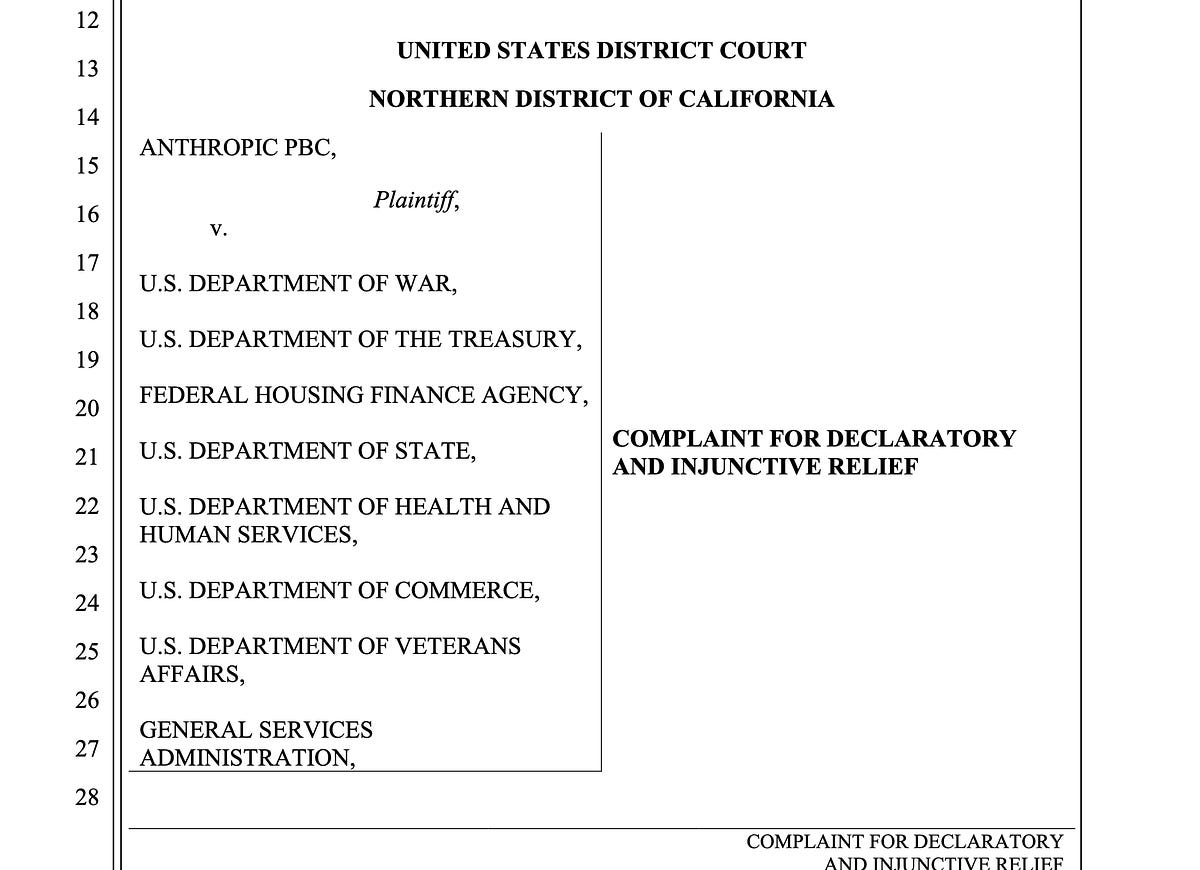

🧠Anthropic has filed a lawsuit against the Trump administration over an AI-related Pentagon dispute. Meanwhile, Elon Musk's xAI is reportedly restarting its development process again, and Iran-related AI-generated fake content about potential warfare is spreading chaos online.

🏢 Anthropic · 🏢 xAI

AI · Bullish · arXiv – CS AI · Mar 16 · 7/10

🧠Researchers developed a new reinforcement learning approach for training diffusion language models that uses entropy-guided step selection and stepwise advantages to overcome challenges with sequence-level likelihood calculations. The method achieves state-of-the-art results on coding and logical reasoning benchmarks while being more computationally efficient than existing approaches.

AI · Neutral · arXiv – CS AI · Mar 16 · 7/10

🧠A research paper explores the feasibility of embedded quantum machine learning (EQML) on edge devices such as IoT nodes and drones by 2026. The study identifies hybrid workflows and embedded quantum co-processors as the most viable implementation pathways, while highlighting major barriers including latency, data encoding overhead, and energy constraints.

AI · Bullish · arXiv – CS AI · Mar 16 · 7/10

🧠Researchers discovered that privacy vulnerabilities in neural networks exist in only a small fraction of weights, but these same weights are critical for model performance. They developed a new approach that preserves privacy by rewinding and fine-tuning only these critical weights instead of retraining entire networks, maintaining utility while defending against membership inference attacks.

AI · Bearish · arXiv – CS AI · Mar 16 · 7/10

🧠Researchers have identified a critical vulnerability in image protection systems that use adversarial perturbations to prevent unauthorized AI editing. Two new purification methods can effectively remove these protections, creating a 'purify-once, edit-freely' attack where images become vulnerable to unlimited manipulation.

AI · Bearish · arXiv – CS AI · Mar 16 · 7/10

🧠Research reveals that when tool data is corrupted, AI agents giving tool-assisted financial advice can recommend unsafe products while still scoring well on quality metrics. The study found that 65-93% of recommendations contained risk-inappropriate products across seven LLMs, yet standard evaluation metrics failed to detect these safety issues.

AI · Bearish · arXiv – CS AI · Mar 16 · 7/10

🧠Researchers identify a significant bias in Large Language Models when processing multiple updates to the same factual information within context. The study reveals that LLMs struggle to accurately retrieve the most recent version of updated facts, with performance degrading as the number of updates increases, similar to memory interference patterns observed in cognitive psychology.

AI · Neutral · arXiv – CS AI · Mar 16 · 7/10

🧠Researchers developed a testing framework to evaluate how reliably AI agents maintain consistent reasoning when inputs are semantically equivalent but differently phrased. Their study of seven foundation models across 19 reasoning problems found that larger models aren't necessarily more robust, with the smaller Qwen3-30B-A3B achieving the highest stability at 79.6% invariant responses.

AI · Bullish · arXiv – CS AI · Mar 16 · 7/10

🧠Researchers propose AIM, a novel AI model modulation paradigm that allows a single model to exhibit diverse behaviors without maintaining multiple specialized versions. The approach uses logits redistribution to enable dynamic control over output quality and input feature focus without requiring retraining or additional training data.

🧠 Llama

AI · Bullish · arXiv – CS AI · Mar 16 · 7/10

🧠Researchers propose ReBalance, a training-free framework that optimizes Large Reasoning Models by addressing overthinking and underthinking issues through confidence-based guidance. The solution dynamically adjusts reasoning trajectories without requiring model retraining, showing improved accuracy across multiple AI benchmarks.

AI · Neutral · arXiv – CS AI · Mar 16 · 7/10

🧠Researchers introduce HCP-DCNet, a new AI framework that combines physical dynamics with symbolic causal reasoning to enable AI systems to understand cause-and-effect relationships. The system uses hierarchical causal primitives and can self-improve through interventions, potentially addressing current limitations in AI's ability to handle distribution shifts and counterfactual reasoning.

AI · Neutral · arXiv – CS AI · Mar 16 · 7/10

🧠Researchers developed a supervised fine-tuning approach to align large language model agents with specific economic preferences, addressing systematic deviations from rational behavior in strategic environments. The study demonstrates how LLM agents can be trained to follow either self-interested or morally-guided strategies, producing distinct outcomes in economic games and pricing scenarios.

AI · Bullish · arXiv – CS AI · Mar 16 · 7/10

🧠Researchers introduce the AI Search Paradigm, a comprehensive framework for next-generation search systems using four LLM-powered agents (Master, Planner, Executor, Writer) that collaborate to handle everything from simple queries to complex reasoning tasks. The system employs modular architecture with dynamic workflows for task planning, tool integration, and content synthesis to create more adaptive and scalable AI search capabilities.

AI · Bullish · arXiv – CS AI · Mar 16 · 7/10

🧠Researchers introduce OnlineSpec, a framework that uses online learning to continuously improve draft models in speculative decoding for large language model inference acceleration. The approach leverages verification feedback to evolve draft models dynamically, achieving up to 24% speedup improvements across seven benchmarks and three foundation models.

AI · Bullish · arXiv – CS AI · Mar 16 · 7/10

🧠Researchers introduce improved methods for stitching Vision Foundation Models (VFMs) such as CLIP and DINOv2, making it possible to combine the strengths of different models. The study proposes the VFM Stitch Tree (VST) technique, which allows controllable accuracy-latency trade-offs for multimodal applications.