AI · Bearish · TechCrunch – AI · Mar 5 · 🔥 8/10

🧠The U.S. government is reportedly considering sweeping new chip export controls that would give it oversight of every chip export sale worldwide, regardless of the country of origin. The draft proposal would significantly expand U.S. regulatory reach over the semiconductor industry.

AI · Neutral · Crypto Briefing · 1d ago · 7/10

🧠Major funding from OpenAI and SpaceX is driving investor attention toward Asian technology firms critical to AI hardware infrastructure. However, these companies face significant valuation pressures and geopolitical risks despite strong demand from US-based AI investments.

🏢 OpenAI

AI · Bullish · Decrypt – AI · 2d ago · 7/10

🧠Lenovo's stock surged 109% in May, marking its best month in 27 years, driven by explosive demand for AI servers that now represent 38% of quarterly revenue. Goldman Sachs more than doubled its price target on the company, reflecting confidence in sustained AI infrastructure growth.

$MKR

AI · Bullish · Blockonomi · 2d ago · 7/10

🧠Dell Technologies stock jumped more than 30% on strong demand for AI servers, contributing to a historic market rally that pushed the Dow Jones Industrial Average past 51,000 for the first time. The rally reflects broader investor appetite for companies positioned to benefit from the AI infrastructure buildout, though other sectors such as satellite communications showed selective weakness.

AI · Bullish · Blockonomi · 2d ago · 7/10

🧠Dell's stock surged 32% following Q1 earnings that exceeded expectations, driven by $16.1 billion in AI server revenue representing a 757% year-over-year increase. Analyst upgrades with targets as high as $700 reflect growing confidence in the company's position within the artificial intelligence infrastructure boom.

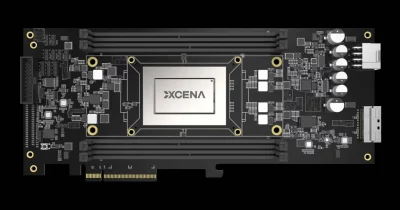

AI · Bullish · Crypto Briefing · 2d ago · 7/10

🧠XCENA, an AI chip startup, raised $135M in Series B funding, achieving a $570M valuation. The company's memory-centric AI chip technology aims to improve data processing efficiency and could influence the competitive landscape of AI hardware development.

AI · Bullish · Crypto Briefing · 3d ago · 7/10

🧠Groq has secured $750 million in Series E funding at a $6.9 billion valuation, demonstrating sustained investor confidence in AI infrastructure development. The capital will support scaling of the company's specialized AI inference hardware, reflecting broader market momentum toward dedicated AI acceleration solutions.

AI × Crypto · Bullish · Blockonomi · May 11 · 7/10

🤖Cerebras Systems has increased its IPO price range to $150-$160 per share, targeting a $4.8 billion valuation ahead of its May 13 Nasdaq debut under ticker CBRS. The offering experienced extraordinary demand with 20x oversubscription, signaling strong investor interest in AI infrastructure and semiconductor companies.

AI · Bullish · Fortune Crypto · May 9 · 7/10

🧠Qualcomm CEO Cristiano Amon revealed the company is collaborating with major AI players on undisclosed next-generation devices, including powering OpenAI's first hardware venture. The announcement signals a strategic shift away from traditional smartphones toward AI-centric computing devices, positioning Qualcomm as critical infrastructure for the emerging AI hardware ecosystem.

🏢 OpenAI

AI · Bullish · TechCrunch – AI · May 4 · 7/10

🧠Cerebras, an AI chip manufacturer with deep ties to OpenAI, is preparing for an IPO that could value the company at $26.6 billion or higher. The upcoming public offering highlights the growing strategic importance of specialized AI hardware providers in the competitive artificial intelligence ecosystem.

$MKR 🏢 OpenAI

AI · Bullish · Decrypt · May 2 · 7/10

🧠Apple has regained relevance in the AI market following the release of OpenClaw, an open-source agent framework that transformed the Mac mini into a sought-after AI development platform. The unexpected demand surge has created supply constraints, demonstrating how specialized software can dramatically shift hardware market dynamics.

AI · Bullish · Blockonomi · Apr 20 · 7/10

🧠IonQ stock surged 60% following Nvidia's announcement of quantum AI tools, a sign that mainstream tech companies are moving into quantum computing. The rally reflects growing institutional interest in quantum technology and supports IonQ's position in an emerging market.

🏢 Nvidia

AI · Bearish · Crypto Briefing · Apr 17 · 7/10

🧠Nvidia's dominant position in AI chip manufacturing faces increasing pressure from competing internal chip development efforts by Google and Amazon, while geopolitical export controls create additional market uncertainty. The article examines how GPU significance in AI model training intersects with strategic competition and international trade restrictions affecting the semiconductor industry.

🏢 Nvidia

AI · Bullish · arXiv – CS AI · Apr 14 · 7/10

🧠Researchers introduce Deep Optimizer States, a technique that reduces GPU memory constraints during large language model training by dynamically offloading optimizer state between host and GPU memory during computation cycles. The method achieves 2.5× faster iterations compared to existing approaches by better managing the memory fluctuations inherent in transformer training pipelines.

AI · Bullish · Blockonomi · Apr 13 · 7/10

🧠Broadcom's stock gains momentum following UBS's upgrade of AI revenue projections to $145 billion, driven by Google's extension of its TPU chip partnership through 2031 and increased compute allocation to Anthropic. The extended partnership signals sustained demand for specialized AI infrastructure and validates Broadcom's positioning as a critical supplier in the competitive AI hardware ecosystem.

🏢 Anthropic

DeFi · Bullish · The Defiant · Mar 25 · 7/10

💎Obex, a Sky-backed stablecoin incubator, is deploying $1 billion in USDS across eight projects focused on mortgages, AI hardware, and solar energy. This represents the protocol's largest effort to diversify yield sources beyond traditional crypto-native investments.

AI × Crypto · Bullish · CoinDesk · Mar 25 · 7/10

🤖Sky-backed stablecoin incubator Obex is deploying $1 billion across tokenized assets in AI hardware, energy, and housing sectors to diversify yield sources beyond traditional crypto markets. This strategic move aims to expand Sky's ecosystem by targeting real-world assets rather than relying on circular cryptocurrency yields.

AI · Bullish · Blockonomi · Mar 25 · 7/10

🧠Nvidia stock rises following three major developments: Arm's new AI CPU launch, a massive $50B+ AWS order for 1 million GPUs, and resumed chip sales to China potentially worth $32B annually. Together, the AWS order and the China sales could represent roughly $82B in new revenue for the semiconductor giant.

🏢 Nvidia

AI · Bullish · IEEE Spectrum – AI · Mar 16 · 7/10

🧠Nvidia announced the Groq 3 LPU at GTC 2024, its first chip specifically designed for AI inference rather than training, incorporating technology licensed from startup Groq for $20 billion. The chip uses SRAM memory integrated within the processor to achieve 7x faster memory bandwidth than traditional GPUs, optimizing for the low latency required for real-time AI inference applications.

🏢 Nvidia

AI · Bullish · The Register – AI · Mar 12 · 7/10

🧠Meta has unveiled four custom AI chips developed in partnership with Broadcom, claiming some outperform existing commercial silicon solutions. This move represents Meta's strategic shift toward developing proprietary AI hardware to reduce dependence on third-party chip manufacturers.

AI · Bullish · arXiv – CS AI · Mar 6 · 7/10

🧠A research paper presents a 10-year roadmap for coordinated AI and hardware co-development, targeting 1000x efficiency improvements in AI training and inference by 2035. The vision emphasizes energy efficiency over raw compute scaling, proposing integrated solutions across algorithms, architectures, and systems to enable sustainable AI deployment from cloud to edge environments.

AI · Bearish · TechCrunch – AI · Mar 5 · 7/10

🧠Meta faces a lawsuit over privacy concerns regarding its AI smart glasses, with allegations that the company's marketing promised user control while subcontractors were actually reviewing customer footage including sensitive content. The legal action centers on discrepancies between Meta's privacy promises and actual data handling practices.

AI · Bearish · IEEE Spectrum – AI · Feb 10 · 7/10 · 6

🧠The memory chip shortage is driven by massive AI demand for high-bandwidth memory (HBM), causing DRAM prices to surge 80-90% this quarter. While major AI companies have secured supply through 2028, other industries face scarce supply and inflated prices that won't normalize for years.

$MKR

AI · Bullish · IEEE Spectrum – AI · Feb 9 · 7/10 · 5

🧠Researchers at UC San Diego developed a new type of bulk resistive RAM (RRAM) that overcomes traditional limitations by switching entire layers rather than forming filaments. The technology achieved 90% accuracy in AI learning tasks and could enable more efficient edge computing by allowing computation within memory itself.

AI · Neutral · IEEE Spectrum – AI · Dec 27 · 7/10 · 4

🧠AI data centers are hitting physical limits with copper cables as GPU-to-GPU data rates approach terabit-per-second speeds, requiring thicker, shorter cables that complicate dense connections. Startups Point2 Technology and AttoTude are developing radio-based cable solutions that promise longer reach, lower power consumption, and narrower cables than copper alternatives.

$LINK $MKR