49 articles tagged with #ai-reasoning. AI-curated summaries with sentiment analysis and key takeaways from 50+ sources.

AI · Bullish · arXiv – CS AI · 2d ago · 7/10

🧠A frontier language model has achieved a perfect score on the LSAT, the first documented instance of an AI system answering every question on the law school admission test correctly. The research shows that extended reasoning ("thinking") is critical to this performance: ablation studies reveal accuracy drops of up to 8 percentage points when these mechanisms are removed.

AI · Bullish · arXiv – CS AI · Mar 17 · 7/10

🧠Researchers at NVIDIA developed NEMOTRON-CROSSTHINK, a new AI framework that uses reinforcement learning with multi-domain data to improve language model reasoning across diverse fields beyond just mathematics. The system shows significant performance improvements on both mathematical and non-mathematical reasoning benchmarks while using 28% fewer tokens for correct answers.

AI · Bearish · arXiv – CS AI · Mar 17 · 7/10

🧠Researchers evaluated the faithfulness of closed-source AI models like ChatGPT and Gemini in medical reasoning, finding that their explanations often appear plausible but don't reflect actual reasoning processes. The study revealed these models frequently incorporate external hints without acknowledgment and their chain-of-thought reasoning doesn't causally drive predictions, raising safety concerns for medical applications.

🧠 ChatGPT · 🧠 Gemini

AI · Bullish · arXiv – CS AI · Mar 17 · 7/10

🧠Researchers developed Token-Selective Dual Knowledge Distillation (TSD-KD), a new framework that improves AI reasoning by allowing smaller models to learn from larger ones more effectively. The method achieved up to 54.4% better accuracy than baseline models on reasoning benchmarks, with student models sometimes outperforming their teachers by up to 20.3%.
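The summary does not say how TSD-KD chooses its tokens, so the following is only a minimal sketch of the general idea of token-selective distillation: compute KL(teacher ‖ student) only at a subset of positions. The selection rule used here (keep the positions where the student's predictive entropy is highest) and every function name are hypothetical illustrations, not the paper's method.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def entropy(p):
    """Shannon entropy (nats) of a discrete distribution."""
    return -sum(pi * math.log(pi) for pi in p if pi > 0)


def kl(p, q):
    """KL(p || q); assumes q > 0 wherever p > 0 (always true after softmax)."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def token_selective_kd_loss(teacher_logits, student_logits, keep_fraction=0.5):
    """Distill only on the positions where the student is least certain.

    Hypothetical selection rule: rank positions by student entropy and keep
    the top `keep_fraction` of them. Returns (loss, selected_positions).
    """
    teacher = [softmax(t) for t in teacher_logits]
    student = [softmax(s) for s in student_logits]
    n_keep = max(1, math.ceil(keep_fraction * len(student)))
    # Highest-entropy positions first; Python's sort is stable, so ties keep order.
    ranked = sorted(range(len(student)), key=lambda i: entropy(student[i]), reverse=True)
    selected = sorted(ranked[:n_keep])
    loss = sum(kl(teacher[i], student[i]) for i in selected) / len(selected)
    return loss, selected
```

Positions where the student is already confident contribute nothing to the loss, which concentrates the teacher's signal where the student is uncertain; the "dual" component named in the paper's title is not modeled here.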

AI · Bullish · MarkTechPost · Mar 16 · 7/10

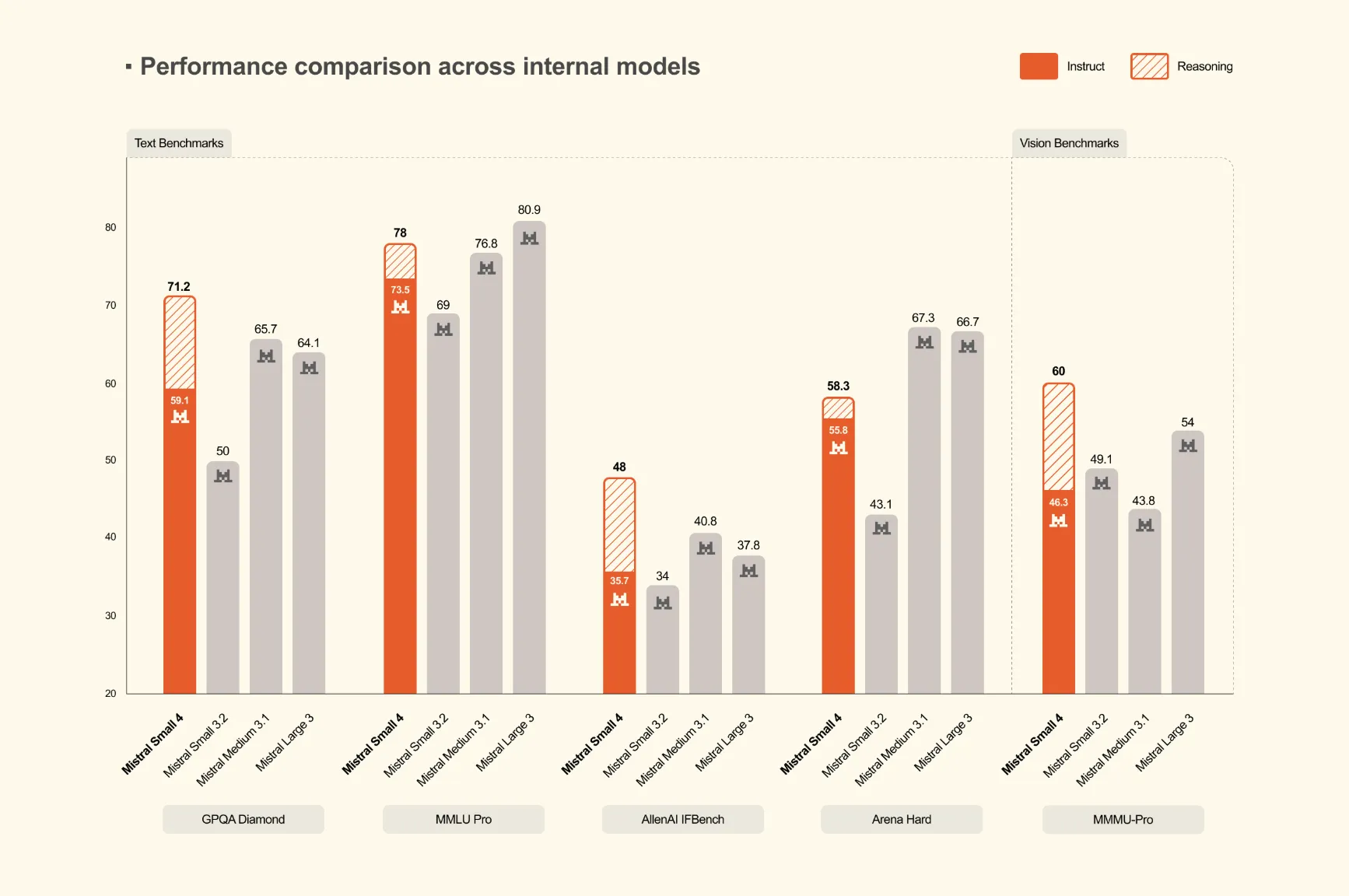

🧠Mistral AI has launched Mistral Small 4, a 119-billion-parameter Mixture of Experts (MoE) model that unifies instruction following, reasoning, and multimodal capabilities in a single deployment. It is the first Mistral model to consolidate the functions of the company's previously separate Mistral Small, Magistral, and Pixtral models.

🏢 Mistral

AI · Bullish · arXiv – CS AI · Mar 16 · 7/10

🧠Researchers propose ReBalance, a training-free framework that uses confidence-based guidance to counter both overthinking and underthinking in Large Reasoning Models. It adjusts reasoning trajectories dynamically at inference time, without retraining the model, and improves accuracy across multiple AI benchmarks.

AI · Neutral · arXiv – CS AI · Mar 12 · 7/10

🧠Researchers introduce TRACED, a framework that evaluates AI reasoning quality through geometric analysis of reasoning trajectories rather than traditional scalar probabilities. Correct reasoning appears as high-progress, stable trajectories, while hallucinations show low-progress, unstable trajectories with large fluctuations in curvature.
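The summary does not define TRACED's measures, so here is a minimal sketch of two generic trajectory statistics in the same spirit, assuming a reasoning trace is represented as a sequence of vectors (for example, one hidden state per step). "Progress" is taken to be net displacement divided by path length (1.0 for a straight line), and curvature is approximated by turning angles between consecutive steps; both definitions and all names are hypothetical stand-ins, not the paper's formulas.

```python
import math


def _sub(a, b):
    return [x - y for x, y in zip(a, b)]


def _norm(v):
    return math.sqrt(sum(x * x for x in v))


def trajectory_geometry(points):
    """Return progress, mean turning angle, and turning-angle spread of a path."""
    steps = [_sub(points[i + 1], points[i]) for i in range(len(points) - 1)]
    path_length = sum(_norm(s) for s in steps)
    net = _norm(_sub(points[-1], points[0]))
    # Straightness: 1.0 means every step moved directly toward the endpoint.
    progress = net / path_length if path_length > 0 else 0.0

    # Turning angle (radians) between consecutive non-zero steps.
    angles = []
    for a, b in zip(steps, steps[1:]):
        na, nb = _norm(a), _norm(b)
        if na == 0 or nb == 0:
            continue
        cos = sum(x * y for x, y in zip(a, b)) / (na * nb)
        angles.append(math.acos(max(-1.0, min(1.0, cos))))

    mean_turn = sum(angles) / len(angles) if angles else 0.0
    var = sum((t - mean_turn) ** 2 for t in angles) / len(angles) if angles else 0.0
    return {"progress": progress, "mean_turn": mean_turn, "turn_std": math.sqrt(var)}
```

A straight path scores progress 1.0 with zero turning, while a zigzag path has lower progress and large turning angles, matching the qualitative contrast the summary draws between correct reasoning and hallucination.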

AI · Bullish · arXiv – CS AI · Mar 11 · 7/10

🧠AlphaApollo is a new AI reasoning system that addresses limitations in foundation models through multi-turn agentic reasoning, learning, and evolution components. The system demonstrates significant performance improvements across math reasoning benchmarks, with success rates exceeding 85% for tool calls and substantial gains from reinforcement learning across different model scales.

AI · Bullish · arXiv – CS AI · Mar 11 · 7/10

🧠Researchers introduce Logos, a compact AI model that combines multi-step logical reasoning with chemical consistency for molecular design. The model achieves strong performance in structural accuracy and chemical validity while using fewer parameters than larger language models, and provides transparent reasoning that can be inspected by humans.

AI · Bullish · arXiv – CS AI · Mar 9 · 7/10

🧠Researchers propose a new method for training large language models (LLMs) that addresses the diversity loss problem in reinforcement learning approaches. Their technique uses the α-divergence family to better balance precision and diversity in reasoning tasks, achieving state-of-the-art performance on theorem-proving benchmarks.
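For reference, a minimal sketch of one standard member of the α-divergence family (Amari's form) for discrete distributions; the summary does not say which parameterization the paper uses or how it enters the RL objective, so treat this as background rather than the method itself. For strictly positive p and q, D_α(p‖q) = (1 − Σᵢ pᵢ^α qᵢ^(1−α)) / (α(1 − α)), which tends to KL(p‖q) as α → 1 and to KL(q‖p) as α → 0.

```python
import math


def kl(p, q):
    """KL(p || q) for discrete distributions with q > 0 wherever p > 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def alpha_divergence(p, q, alpha):
    """Amari alpha-divergence between strictly positive discrete distributions."""
    if alpha == 1.0:
        return kl(p, q)  # limiting case alpha -> 1
    if alpha == 0.0:
        return kl(q, p)  # limiting case alpha -> 0
    s = sum(pi ** alpha * qi ** (1.0 - alpha) for pi, qi in zip(p, q))
    return (1.0 - s) / (alpha * (1.0 - alpha))
```

Sweeping α between 0 and 1 interpolates between the two KL directions, one mass-covering and one mode-seeking, which is the usual lever for trading diversity against precision.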

AI · Neutral · arXiv – CS AI · Mar 5 · 6/10

🧠Research reveals that Large Language Models are unevenly vulnerable to different types of Chain-of-Thought reasoning perturbations: injected math errors cause 50–60% accuracy loss in small models, while unit-conversion errors remain challenging even for the largest models. The study tested 13 models ranging from 3B to 1.5T parameters and found that scaling protects against some perturbations but offers limited defense on dimensional-reasoning tasks.

AI · Bullish · arXiv – CS AI · Mar 5 · 7/10

🧠Google's Gemini 3.1 Pro Preview achieved a perfect score on the IPhO 2025 theory problems in all five runs, surpassing earlier AI systems, which had trailed the top human contestants. However, the researchers acknowledge possible data contamination, since the model was released after the competition.

🧠 Gemini

AI · Bullish · arXiv – CS AI · Mar 5 · 6/10

🧠Researchers developed R1-Code-Interpreter, a large language model that uses multi-stage reinforcement learning to autonomously generate code for step-by-step reasoning across diverse tasks. The 14B parameter model achieves 72.4% accuracy on test tasks, outperforming GPT-4o variants and demonstrating emergent self-checking capabilities through code generation.

🏢 Hugging Face · 🧠 GPT-4

AI · Bullish · arXiv – CS AI · Mar 4 · 7/10

🧠Researchers introduce SEM-CTRL, a new approach that ensures Large Language Models produce syntactically and semantically correct outputs without requiring fine-tuning. The system uses token-level Monte Carlo Tree Search guided by Answer Set Grammars to enforce context-sensitive constraints, allowing smaller pre-trained LLMs to outperform larger models on tasks like reasoning and planning.

AI · Neutral · arXiv – CS AI · Mar 3 · 7/10

🧠Researchers analyzed compression effects on large reasoning models (LRMs) through quantization, distillation, and pruning methods. They found that dynamically quantized 2.51-bit models maintain near-original performance, while identifying critical weight components and showing that protecting just 2% of excessively compressed weights can improve accuracy by 6.57%.
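A minimal sketch of the "protect a small fraction of weights" idea, assuming plain round-to-nearest symmetric quantization with one scale per tensor and an integer bit width of at least 2 (the 2.51-bit figure in the summary is an average over a dynamic, mixed-precision scheme, which this does not reproduce). The rule for choosing which weights to protect (largest absolute quantization error) and all function names are hypothetical; the paper's criterion for "excessively compressed" weights may differ.

```python
import math


def quantize_symmetric(weights, bits):
    """Round-to-nearest symmetric uniform quantization; assumes bits >= 2."""
    levels = 2 ** (bits - 1) - 1  # bits=2 -> integer grid {-1, 0, 1}
    max_abs = max(abs(w) for w in weights)
    if max_abs == 0:
        return list(weights)
    scale = max_abs / levels
    return [max(-levels, min(levels, round(w / scale))) * scale for w in weights]


def quantize_with_protection(weights, bits, protect_fraction=0.02):
    """Quantize, then restore the worst-quantized weights in full precision.

    Hypothetical rule: protect the top `protect_fraction` of weights ranked by
    absolute quantization error. Returns (weights, protected_indices).
    """
    q = quantize_symmetric(weights, bits)
    errors = [abs(w - qw) for w, qw in zip(weights, q)]
    k = max(1, round(protect_fraction * len(weights)))
    protected = sorted(range(len(weights)), key=lambda i: errors[i], reverse=True)[:k]
    for i in protected:
        q[i] = weights[i]
    return q, sorted(protected)


def mse(a, b):
    """Mean squared error between two equal-length lists."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)
```

Restoring the worst-quantized 2% can only lower the reconstruction error on that tensor; whether it translates into the reported accuracy gain depends on which weights matter for the model's outputs, which this toy does not model.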

AI · Bullish · arXiv – CS AI · Mar 3 · 7/10

🧠Researchers introduce ExGRPO, a framework that improves AI reasoning by reusing past training experiences and prioritizing the most valuable ones based on correctness and entropy. The method delivers consistent gains of +3.5 to +7.6 points over standard approaches across multiple model sizes while also making training more stable.
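A minimal sketch of prioritized experience replay keyed on correctness and entropy, assuming each stored experience records the fraction of rollouts that solved its question and the mean token entropy of a stored successful trajectory. The scoring function (a Gaussian bump that favors medium-difficulty questions, discounted by entropy) and its parameters `mu` and `sigma` are hypothetical; the summary does not give ExGRPO's actual weighting.

```python
import math
import random
from dataclasses import dataclass


@dataclass
class Experience:
    question_id: str
    correct_rate: float  # fraction of rollouts for this question that were correct
    entropy: float       # mean token entropy of the stored successful trajectory


def priority(exp, mu=0.5, sigma=0.2):
    """Hypothetical score: favor medium-difficulty questions and low-entropy traces."""
    difficulty_weight = math.exp(-((exp.correct_rate - mu) ** 2) / (2 * sigma ** 2))
    return difficulty_weight / (1.0 + exp.entropy)


def sample_batch(buffer, k, rng):
    """Sample k experiences with replacement, proportional to priority."""
    weights = [priority(e) for e in buffer]
    return rng.choices(buffer, weights=weights, k=k)
```

Questions the model always or never solves carry little learning signal, so down-weighting them and preferring confident (low-entropy) successful traces is one plausible reading of "valuable experiences".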

AI · Bullish · Google DeepMind Blog · Feb 12 · 7/10

🧠Gemini 3 Deep Think is an updated, specialized reasoning mode designed to tackle complex challenges in modern science, research, and engineering. The update focuses on stronger problem-solving for technical and scientific applications.

AI · Bullish · OpenAI News · Dec 11 · 7/10

🧠OpenAI has released GPT-5.2, its most advanced model for mathematics and science, achieving state-of-the-art performance on benchmarks such as GPQA Diamond and FrontierMath. The model demonstrates significant research capabilities, including solving open theoretical problems and generating reliable mathematical proofs.

AI · Neutral · arXiv – CS AI · 1d ago · 6/10

🧠Researchers introduce PrivacyReasoner, an LLM-based agent architecture that reconstructs individual privacy perspectives from online comment history to predict how specific people would perceive data practices. The system outperforms baseline models in predicting privacy concerns across AI, e-commerce, and healthcare domains by contextually activating relevant privacy beliefs.

AI · Neutral · arXiv – CS AI · 1d ago · 6/10

🧠Researchers present EMBER, a hybrid architecture combining spiking neural networks with large language models where the SNN acts as a persistent, biologically-inspired memory substrate that autonomously triggers LLM reasoning. The system demonstrates emergent autonomous behavior, initiating unprompted user contact after learning associations during idle periods, suggesting a fundamental shift in how AI systems could coordinate cognition and action.

AI · Bullish · arXiv – CS AI · 2d ago · 6/10

🧠Researchers introduce M³KG-RAG, a novel multimodal retrieval-augmented generation system that enhances large language models by integrating multi-hop knowledge graphs with audio-visual data. The approach improves reasoning depth and answer accuracy by filtering irrelevant information through a new grounding and pruning mechanism called GRASP.

AI · Neutral · arXiv – CS AI · 2d ago · 6/10

🧠Researchers introduced COMPOSITE-STEM, a new benchmark containing 70 expert-written scientific tasks across physics, biology, chemistry, and mathematics to evaluate AI agents. The top-performing model achieved only 21% accuracy, indicating the benchmark effectively measures capabilities beyond current AI reach and addresses the saturation of existing evaluation frameworks.

AI · Neutral · arXiv – CS AI · 3d ago · 6/10

🧠Researchers present a novel approach using agentic language model feedback frameworks to generate planning domains from natural language descriptions augmented with symbolic information. The method employs heuristic search over model space optimized by various feedback mechanisms, including landmarks and plan validator outputs, to improve domain quality for practical deployment.

AI · Neutral · arXiv – CS AI · 6d ago · 6/10

🧠SymptomWise introduces a deterministic reasoning framework that separates language understanding from diagnostic inference in AI-driven medical systems, combining expert-curated knowledge with constrained LLM use to improve reliability and reduce hallucinations. The system achieved 88% accuracy in placing correct diagnoses in top-five differentials on challenging pediatric neurology cases, demonstrating how structured approaches can enhance AI safety in critical domains.
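The headline number is a top-k accuracy with k = 5: a case counts as correct when the confirmed diagnosis appears anywhere among the system's five highest-ranked differentials. A minimal sketch of that computation; the function name and the idea of representing each case as a ranked list of labels are illustrative assumptions, not SymptomWise's evaluation code.

```python
def top_k_accuracy(ranked_predictions, gold_labels, k=5):
    """Fraction of cases whose gold label appears in the first k ranked predictions."""
    hits = sum(
        1 for ranked, gold in zip(ranked_predictions, gold_labels) if gold in ranked[:k]
    )
    return hits / len(gold_labels)
```

Under this metric, 88% means 22 of every 25 cases had the correct diagnosis somewhere in the top five, not necessarily ranked first.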

AI · Bearish · arXiv – CS AI · Apr 6 · 6/10

🧠Researchers introduce DeltaLogic, a new benchmark that tests AI models' ability to revise their logical conclusions when presented with minimal changes to premises. The study reveals that language models like Qwen and Phi-4 struggle with belief revision even when they perform well on initial reasoning tasks, showing concerning inertia patterns where models fail to update conclusions when evidence changes.