🧠 AI · 🟢 Bullish · Importance 7/10

Mistral AI Releases Mistral Small 4: A 119B-Parameter MoE Model that Unifies Instruct, Reasoning, and Multimodal Workloads

via MarkTechPost

🤖 AI Summary

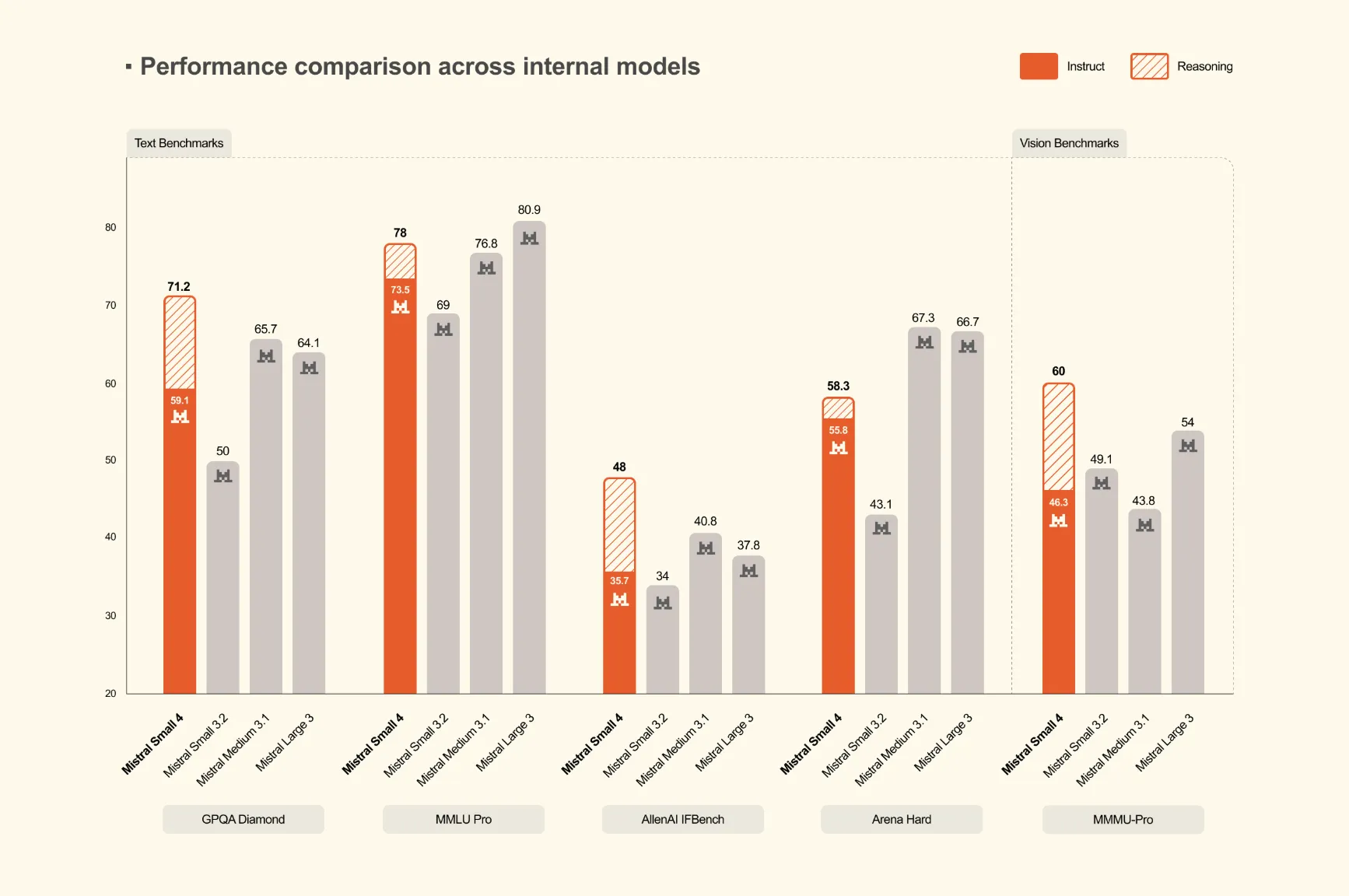

Mistral AI has launched Mistral Small 4, a 119-billion-parameter Mixture of Experts (MoE) model that unifies instruction following, reasoning, and multimodal capabilities in a single deployment. It is the first Mistral model to consolidate the functions of the previously separate Mistral Small, Magistral, and Pixtral models.

Key Takeaways

- Mistral Small 4 is a 119-billion-parameter MoE model that combines multiple AI capabilities in one deployment.

- The model unifies instruction following, reasoning, and multimodal understanding, which were previously handled by separate models.

- This consolidation removes the need to deploy a separate model for each type of workload.

- The release reflects Mistral AI's strategy of building more versatile and efficient models.

- The model combines the capabilities of Mistral Small, Magistral, and Pixtral in a single model.
