AI · Bullish · MarkTechPost · Mar 16 · 7/10

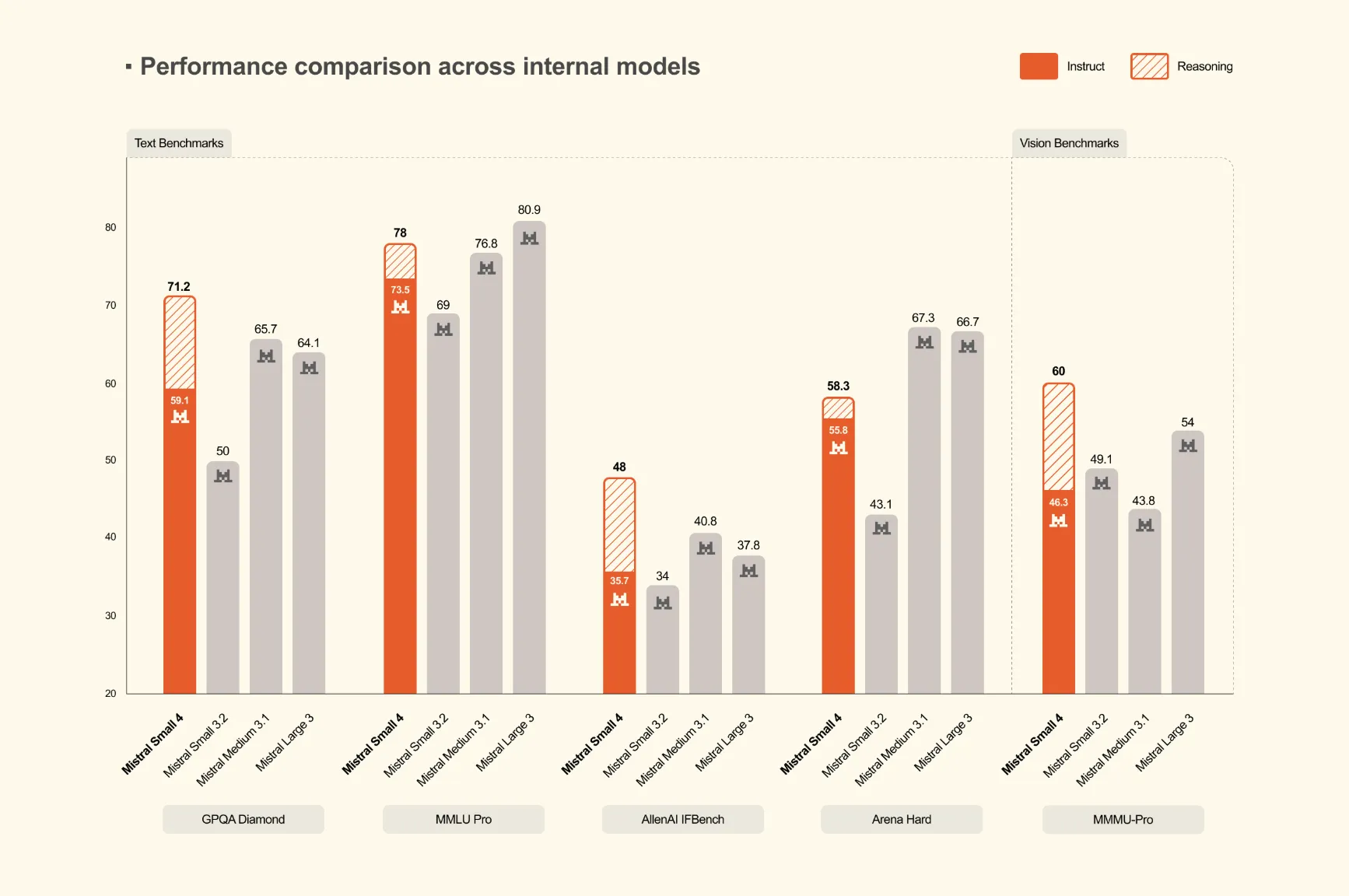

Mistral AI Releases Mistral Small 4: A 119B-Parameter MoE Model that Unifies Instruct, Reasoning, and Multimodal Workloads

Mistral AI has launched Mistral Small 4, a 119-billion-parameter Mixture-of-Experts (MoE) model that unifies instruction following, reasoning, and multimodal capabilities in a single deployment. It is the first Mistral model to consolidate the functions of the company's previously separate Mistral Small, Magistral, and Pixtral models.

🏢 Mistral