AI · Bullish · arXiv – CS AI · May 12 · 7/10

🧠Researchers introduce Mixed-Policy Distillation (MPD), a technique that compresses reasoning in smaller language models by having larger teacher models rewrite student-generated reasoning traces into more concise versions. The method reduces token usage by up to 27.1% while maintaining or improving performance, addressing critical deployment constraints around memory, latency, and serving costs.
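
The following Python sketch shows one way a student-generated trace and a teacher rewrite might be combined into a single training target. The selection rule is an assumption made for illustration: keep the rewrite only if it is shorter and still reaches the reference answer. The `Trace` type, the whitespace token counter, and the `toy_teacher` stand-in are also hypothetical. None of this is MPD's published procedure.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Trace:
    reasoning: str
    answer: str


def token_count(text: str) -> int:
    # Whitespace split stands in for a real tokenizer (assumption).
    return len(text.split())


def build_distillation_target(
    student_trace: Trace,
    teacher_rewrite: Callable[[Trace], Trace],
    reference_answer: str,
) -> Optional[Trace]:
    """Choose the trace to train the student on, or None if neither is usable.

    Hypothetical rule: prefer the teacher's rewrite when it is shorter and still
    correct; otherwise keep the student's own trace if that one is correct.
    """
    rewritten = teacher_rewrite(student_trace)
    rewrite_ok = rewritten.answer == reference_answer
    student_ok = student_trace.answer == reference_answer
    if rewrite_ok and token_count(rewritten.reasoning) < token_count(student_trace.reasoning):
        return rewritten
    if student_ok:
        return student_trace
    return None


def token_reduction(original: str, compressed: str) -> float:
    """Fraction of tokens saved, e.g. 0.271 for a 27.1% reduction."""
    before = token_count(original)
    return (before - token_count(compressed)) / before


def toy_teacher(trace: Trace) -> Trace:
    # Hypothetical stand-in for a teacher LLM: drops sentences starting with "Hmm".
    kept = [s for s in trace.reasoning.split(". ") if not s.startswith("Hmm")]
    return Trace(". ".join(kept), trace.answer)


if __name__ == "__main__":
    student = Trace("Hmm let me think. 2 plus 2 is 4. Hmm double check. So 4", "4")
    target = build_distillation_target(student, toy_teacher, "4")
    print(target.reasoning)  # 2 plus 2 is 4. So 4
    print(token_reduction(student.reasoning, target.reasoning))  # 0.5
```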

AI · Bullish · arXiv – CS AI · Apr 7 · 7/10

🧠Researchers propose SoLA, a training-free compression method for large language models that combines soft activation sparsity with low-rank decomposition. It compresses models substantially while outperforming existing compression methods: at 30% compression of LLaMA-2-70B, it reduces perplexity from 6.95 to 4.44 and improves downstream task accuracy by 10% relative to prior state-of-the-art approaches.
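
Simple parameter-count arithmetic links a compression percentage to a rank. This sketch assumes a plain two-factor low-rank replacement of a single dense matrix. SoLA also keeps some components dense through soft activation sparsity, and that part is not modeled here. The 8192 x 8192 shape is an illustrative assumption.

```python
def lowrank_params(m: int, n: int, r: int) -> int:
    """Parameters in U (m x r) plus V (r x n), which replace a dense m x n matrix."""
    return r * (m + n)


def rank_for_compression(m: int, n: int, compression: float) -> int:
    """Largest rank whose factors keep at most (1 - compression) of the m * n weights."""
    if not 0.0 <= compression < 1.0:
        raise ValueError("compression must be in [0, 1)")
    budget = (1.0 - compression) * m * n
    return int(budget // (m + n))


if __name__ == "__main__":
    r = rank_for_compression(8192, 8192, 0.3)
    print(r, lowrank_params(8192, 8192, r), 8192 * 8192)  # 2867 46972928 67108864
```

For a square 8192 x 8192 matrix, a 30% parameter cut already requires truncating to rank 2867, roughly 35% of full rank.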

AI · Bullish · MarkTechPost · Mar 16 · 7/10

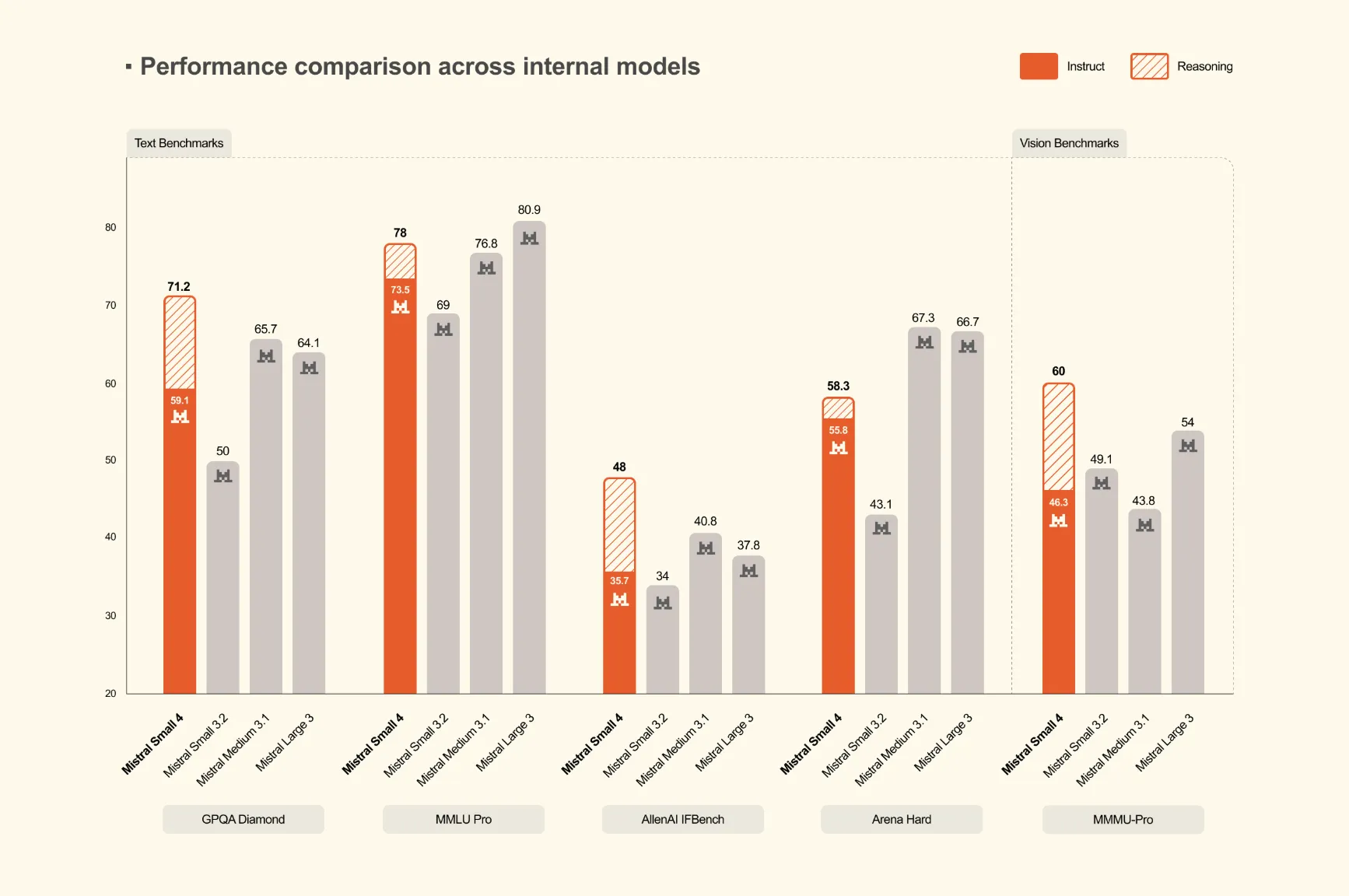

🧠Mistral AI has launched Mistral Small 4, a 119-billion-parameter Mixture-of-Experts (MoE) model that unifies instruction following, reasoning, and multimodal capabilities in a single deployment. It is the first Mistral model to consolidate the functions of the company's previously separate Mistral Small, Magistral, and Pixtral models.

🏢 Mistral
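
Mixture-of-Experts layers typically send each token to a few experts and mix their outputs using softmax-normalised gate weights. The plain-Python sketch below shows generic top-k gating of this kind. The summary does not describe Mistral Small 4's router, so the expert count, the value of `k`, and the toy experts are hypothetical.

```python
import math
from typing import Callable, List, Optional, Sequence, Tuple

Expert = Callable[[List[float]], List[float]]


def top_k_route(logits: Sequence[float], k: int) -> List[Tuple[int, float]]:
    """Select the k highest-scoring experts and softmax-normalise their gate weights."""
    if not 1 <= k <= len(logits):
        raise ValueError("k must be between 1 and the number of experts")
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    peak = max(logits[i] for i in top)  # subtract the max for numerical stability
    exps = [math.exp(logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def moe_forward(x: List[float], experts: Sequence[Expert],
                router_logits: Sequence[float], k: int = 2) -> List[float]:
    """Gate-weighted sum of the selected experts' outputs; other experts never run."""
    out: Optional[List[float]] = None
    for idx, gate in top_k_route(router_logits, k):
        y = experts[idx](x)
        if out is None:
            out = [gate * v for v in y]
        else:
            out = [o + gate * v for o, v in zip(out, y)]
    return out or []
```

Because only the selected experts are evaluated, an MoE model's per-token compute is much smaller than its total parameter count would suggest.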

AI · Neutral · arXiv – CS AI · Mar 3 · 7/10 · 4

🧠Researchers analyzed 20 Mixture-of-Experts (MoE) language models to study local routing consistency, finding a trade-off between routing consistency and local load balance. The study introduces new metrics to measure how well expert offloading strategies can optimize memory usage on resource-constrained devices while maintaining inference speed.
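
Routing consistency matters for offloading because a small on-device expert cache gets more hits when consecutive tokens reuse the same experts. The sketch below illustrates this with a hypothetical overlap metric and an LRU cache simulation. Neither is claimed to match the metrics the study introduces.

```python
from collections import OrderedDict
from typing import Sequence, Set


def consecutive_overlap(routes: Sequence[Set[int]]) -> float:
    """Mean fraction of token t's experts that token t-1 also used.

    Hypothetical metric; each set holds the (non-empty) experts chosen for one token.
    """
    if len(routes) < 2:
        return 1.0
    scores = [len(prev & cur) / len(cur) for prev, cur in zip(routes, routes[1:])]
    return sum(scores) / len(scores)


def lru_hit_rate(routes: Sequence[Set[int]], capacity: int) -> float:
    """Fraction of expert lookups served by an on-device LRU cache of `capacity` experts."""
    cache: "OrderedDict[int, None]" = OrderedDict()
    hits = lookups = 0
    for experts in routes:
        for e in sorted(experts):
            lookups += 1
            if e in cache:
                hits += 1
                cache.move_to_end(e)  # mark as most recently used
            else:
                cache[e] = None  # "load" the expert from host memory
                if len(cache) > capacity:
                    cache.popitem(last=False)  # evict the least recently used expert
    return hits / lookups if lookups else 0.0
```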

AI · Neutral · arXiv – CS AI · May 9 · 6/10

🧠Researchers propose a regime theory framework for selecting controller classes in language and vision-language models, determining whether AI systems should answer directly, retrieve evidence, defer to stronger models, or abstain. The work demonstrates that model expressivity doesn't uniformly improve performance in finite samples, and provides a principled method to match controller complexity to data availability across multiple benchmarks.
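
The simplest controller class is a fixed-threshold rule over a model's confidence score. The sketch below is a hypothetical illustration of the four actions named in the summary (answer, retrieve, defer, abstain). The thresholds and availability flags are assumptions, and the paper's regime theory for choosing between controller classes is not reproduced.

```python
from enum import Enum


class Action(Enum):
    ANSWER = "answer"
    RETRIEVE = "retrieve"
    DEFER = "defer"
    ABSTAIN = "abstain"


def threshold_controller(confidence: float, can_retrieve: bool, can_defer: bool,
                         answer_at: float = 0.8, retrieve_at: float = 0.5) -> Action:
    """A deliberately simple controller class built from fixed confidence thresholds.

    The threshold values are hypothetical; in practice they would be fit on held-out data.
    """
    if confidence >= answer_at:
        return Action.ANSWER
    if can_retrieve and confidence >= retrieve_at:
        return Action.RETRIEVE
    if can_defer:
        return Action.DEFER
    return Action.ABSTAIN
```

The summary notes that more expressive controller classes do not automatically perform better in finite samples. A simple rule like this one can therefore be a reasonable choice when labelled data is scarce.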

AI · Neutral · arXiv – CS AI · Apr 14 · 6/10

🧠Gypscie is a new cross-platform AI artifact management system that simplifies managing machine learning models across diverse infrastructure by unifying them under a knowledge graph and a rule-based query language. The system streamlines the entire AI model lifecycle, from data preparation through deployment and monitoring, and enables explainability through provenance tracking.

AI · Neutral · arXiv – CS AI · Apr 14 · 6/10

🧠A-IO addresses critical memory-bound bottlenecks in LLM deployment on NPU platforms like Ascend 910B by tackling the 'Model Scaling Paradox' and limitations of current speculative decoding techniques. The research reveals that static single-model deployment strategies and kernel synchronization overhead significantly constrain inference performance on heterogeneous accelerators.
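
Speculative decoding, whose limitations the summary mentions, pairs a cheap draft model with the expensive target model. Below is a minimal plain-Python sketch of the standard greedy variant. It assumes both models are functions that return the greedy next token for a given prefix. A-IO's own scheduling is not shown.

```python
from typing import Callable, List

Model = Callable[[List[int]], int]  # returns the greedy next token for a prefix


def speculative_step(prefix: List[int], draft: Model, target: Model, gamma: int) -> List[int]:
    """One round of greedy speculative decoding.

    The draft proposes `gamma` tokens; the target checks them in order, keeps the
    longest agreeing run, and then contributes one token of its own.
    """
    proposal: List[int] = []
    ctx = list(prefix)
    for _ in range(gamma):
        tok = draft(ctx)
        proposal.append(tok)
        ctx.append(tok)

    accepted: List[int] = []
    ctx = list(prefix)
    for tok in proposal:
        expected = target(ctx)  # a real system scores all positions in one forward pass
        if expected != tok:
            accepted.append(expected)  # replace the first mismatch with the target's token
            return accepted
        accepted.append(tok)
        ctx.append(tok)
    accepted.append(target(ctx))  # every draft token accepted: one bonus token
    return accepted
```

With greedy verification, the output is identical to decoding with the target alone. The speedup comes from checking all drafted tokens in a single target forward pass, which this sketch simulates with sequential calls.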

AI · Neutral · AI News · Apr 13 · 6/10

🧠Enterprise security leaders face growing challenges in securing edge AI deployments as models like Google Gemma 4 spread beyond traditional cloud infrastructure. Organizations have built robust cloud security perimeters but now struggle to govern AI workloads running on distributed edge systems, which calls for new governance approaches.

AI · Bullish · arXiv – CS AI · Mar 26 · 6/10

🧠Researchers propose APreQEL, an adaptive mixed precision quantization method for deploying large language models on edge devices. The approach optimizes memory, latency, and accuracy by applying different quantization levels to different layers based on their importance and hardware characteristics.
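
The core idea of mixed-precision quantization is to spend more bits on the layers that matter most. It can be sketched as a greedy assignment under a memory budget. The heuristic below is a hypothetical stand-in, not APreQEL's algorithm. It ignores hardware characteristics, and the layer sizes, importance scores, and byte budget are made up.

```python
from typing import List, Sequence


def allocate_bits(params: Sequence[int], importance: Sequence[float],
                  budget_bytes: float, choices: Sequence[int] = (4, 8, 16)) -> List[int]:
    """Greedy mixed-precision assignment (hypothetical heuristic).

    Every layer starts at the lowest bit-width. Layers are then upgraded one level
    at a time, most important first, while total weight memory stays within budget.
    """
    levels = sorted(choices)
    bits = [levels[0]] * len(params)
    used = sum(p * levels[0] / 8 for p in params)  # bytes at the lowest precision
    if used > budget_bytes:
        raise ValueError("budget too small even at the lowest precision")
    order = sorted(range(len(params)), key=lambda i: importance[i], reverse=True)
    for level in levels[1:]:
        for i in order:
            extra = params[i] * (level - bits[i]) / 8
            if used + extra <= budget_bytes:
                used += extra
                bits[i] = level
    return bits
```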

AI · Bullish · arXiv – CS AI · Mar 3 · 7/10 · 10

🧠TriMoE introduces a GPU-CPU-NDP (near-data processing) architecture that speeds up inference for large Mixture-of-Experts models by mapping hot, warm, and cold experts to the compute units best suited to them. The system leverages AMX-enabled CPUs and includes bottleneck-aware scheduling, achieving up to 2.83x performance improvement over existing solutions.
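
A hot/warm/cold split can be sketched by ranking experts according to how often they are routed to. The tier fractions, the `gpu`/`cpu`/`ndp` labels, and the use of raw activation counts are all assumptions. TriMoE's actual mapping and its bottleneck-aware scheduler are not reproduced.

```python
from collections import Counter
from typing import Dict, Iterable


def tier_experts(activations: Iterable[int], num_experts: int,
                 hot_frac: float = 0.2, warm_frac: float = 0.3) -> Dict[int, str]:
    """Rank experts by routing frequency and assign each one a compute tier.

    Hypothetical policy: the most-used `hot_frac` of experts go to the GPU, the next
    `warm_frac` to the CPU, and the rest to near-data processing (NDP).
    """
    counts = Counter(activations)  # experts never routed to get a count of 0
    ranked = sorted(range(num_experts), key=lambda e: counts[e], reverse=True)
    n_hot = round(hot_frac * num_experts)
    n_warm = round(warm_frac * num_experts)
    placement: Dict[int, str] = {}
    for rank, expert in enumerate(ranked):
        if rank < n_hot:
            placement[expert] = "gpu"
        elif rank < n_hot + n_warm:
            placement[expert] = "cpu"
        else:
            placement[expert] = "ndp"
    return placement
```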

AI · Bullish · Hugging Face Blog · Jul 21 · 6/10 · 5

🧠NVIDIA has partnered with Hugging Face to integrate NIM (NVIDIA Inference Microservices) to accelerate large language model deployment and inference. This collaboration aims to make AI model deployment more efficient and accessible through optimized GPU acceleration on the Hugging Face platform.

AI · Bullish · Hugging Face Blog · Jul 29 · 6/10 · 5

🧠Hugging Face has partnered with NVIDIA to integrate NIM (NVIDIA Inference Microservices) for serverless AI model inference. This collaboration enables developers to deploy and scale AI models more efficiently using NVIDIA's optimized inference infrastructure through Hugging Face's platform.

AI · Bullish · Hugging Face Blog · Jun 7 · 6/10 · 6

🧠Hugging Face has launched a new Embedding Container for Amazon SageMaker, enabling easier deployment of embedding models in AWS cloud infrastructure. This integration streamlines the process for developers to implement text embeddings and vector search capabilities in production environments.
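
At query time, vector search compares the query's embedding with stored document embeddings, usually by cosine similarity. The brute-force sketch below uses hand-written toy vectors in place of real model outputs. A production deployment would instead get embeddings from the deployed endpoint and use an approximate nearest-neighbour index.

```python
import math
from typing import List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 when either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def search(query: Sequence[float], corpus: Sequence[Sequence[float]],
           k: int = 3) -> List[Tuple[int, float]]:
    """Brute-force top-k nearest neighbours by cosine similarity."""
    scored = [(i, cosine(query, doc)) for i, doc in enumerate(corpus)]
    return sorted(scored, key=lambda t: t[1], reverse=True)[:k]
```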

AI · Bullish · Hugging Face Blog · Sep 19 · 6/10 · 7

🧠Rocket Money partnered with Hugging Face to address challenges in scaling volatile machine learning models for production environments. The collaboration focuses on implementing robust infrastructure solutions to handle ML model instability and performance variations in real-world applications.

AI · Bullish · Hugging Face Blog · May 24 · 6/10 · 5

🧠Hugging Face has partnered with Microsoft to launch the Hugging Face Model Catalog on Azure, expanding access to AI models through Microsoft's cloud platform. This collaboration aims to make AI model deployment and integration more accessible for enterprise customers using Azure services.

AI · Bullish · Hugging Face Blog · Jul 25 · 4/10 · 7

🧠Hugging Face has introduced a new command-line interface called 'hf' that promises to be faster and more user-friendly than their previous CLI tools. This development aims to improve developer experience when working with Hugging Face's AI model repository and services.

AI · Neutral · Hugging Face Blog · Apr 30 · 4/10 · 7

🧠The article appears to be a technical guide to building an MCP (Model Context Protocol) server with Gradio, a Python library for creating machine learning interfaces. It is aimed at developers working on AI model deployment and user interface creation.

AI · Bullish · Hugging Face Blog · May 22 · 5/10 · 6

🧠The article appears to discuss deploying machine learning models on AWS Inferentia2 chips using Hugging Face's platform. This represents continued integration between major cloud providers and AI model deployment platforms.

AI · Neutral · Lil'Log (Lilian Weng) · Jan 10 · 5/10

🧠Large transformer models face significant inference optimization challenges due to high computational costs and memory requirements. The article discusses technical factors contributing to inference bottlenecks that limit real-world deployment at scale.
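
The key-value (KV) cache is one concrete source of the memory requirements mentioned above: autoregressive decoding stores keys and values for every token processed so far. The arithmetic below assumes a LLaMA-2-7B-like shape (32 layers, 32 KV heads, head dimension 128) stored in fp16. These are illustrative figures, not numbers from the article.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, batch_size: int, bytes_per_value: int = 2) -> int:
    """Cached keys and values: 2 (K and V) x layers x KV heads x head_dim
    x tokens x batch x bytes per element (2 for fp16/bf16)."""
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size * bytes_per_value


def weight_bytes(num_params: int, bytes_per_value: int = 2) -> int:
    """Memory for the model weights alone at the given precision."""
    return num_params * bytes_per_value


if __name__ == "__main__":
    gib = 1024 ** 3
    print(kv_cache_bytes(32, 32, 128, 4096, 1) / gib)  # 2.0
    print(kv_cache_bytes(32, 32, 128, 4096, 8) / gib)  # 16.0
    print(weight_bytes(7_000_000_000) / gib)
```

At a 4096-token context, this comes to 2 GiB per sequence. A batch of 8 therefore needs 16 GiB of cache, which exceeds the roughly 14 GB (about 13 GiB) of fp16 weights for 7B parameters.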

AI · Neutral · Hugging Face Blog · Sep 27 · 4/10 · 9

🧠The article appears to be about Hugging Face's Accelerate library and how it enables running very large AI models using PyTorch. However, the article body is empty, making it impossible to provide specific technical details or implications.

AI · Neutral · Hugging Face Blog · Jul 25 · 4/10 · 5

🧠The article appears to focus on deploying TensorFlow computer vision models using Hugging Face's platform integrated with TensorFlow Serving infrastructure. This represents a technical tutorial on AI model deployment workflows combining popular machine learning frameworks.

AI · Bullish · Hugging Face Blog · Jan 11 · 5/10 · 5

🧠The article provides a technical guide on deploying GPT-J 6B, a large language model, for inference using Hugging Face Transformers library and Amazon SageMaker cloud platform. This demonstrates the accessibility of advanced AI model deployment for developers and organizations looking to implement large language models in production environments.

AI · Neutral · Hugging Face Blog · Nov 4 · 4/10 · 3

🧠This appears to be a technical article on optimizing BERT inference performance on CPUs, part of a series on scaling up transformer models. It likely covers implementation strategies and performance improvements for running transformer models efficiently on CPU hardware.

AI · Neutral · Hugging Face Blog · Aug 11 · 3/10 · 5

🧠The article discusses deploying Vision Transformer (ViT) models on Kubernetes using TensorFlow Serving. However, the article body appears to be empty or incomplete, limiting detailed analysis of the technical implementation.

AI · Neutral · Hugging Face Blog · Jul 18 · 1/10 · 6

🧠The article title suggests TGI Multi-LoRA is a technology solution that enables deploying a single system to serve 30 different models simultaneously. However, no article body content was provided to analyze the technical details, implementation, or market implications of this multi-model serving capability.
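
Serving many fine-tuned variants from one deployment usually relies on LoRA. Each variant is a pair of small matrices added on top of a shared base weight. The plain-Python sketch below shows per-request adapter selection for a single linear layer. The class, its method names, and the adapter name are hypothetical, and the sketch does not cover how TGI itself selects adapters.

```python
from typing import Dict, List, Optional, Sequence, Tuple

Matrix = List[List[float]]


def matvec(m: Matrix, v: Sequence[float]) -> List[float]:
    return [sum(a * b for a, b in zip(row, v)) for row in m]


class MultiLoRALinear:
    """One shared base weight W plus many small adapters (A_i, B_i).

    y = W x + (alpha / r) * B_i (A_i x). Only the adapter named in the request is
    applied, so a single deployed base model can serve many fine-tuned variants.
    """

    def __init__(self, base: Matrix, alpha: float = 1.0):
        self.base = base
        self.alpha = alpha
        self.adapters: Dict[str, Tuple[Matrix, Matrix]] = {}

    def add_adapter(self, name: str, A: Matrix, B: Matrix) -> None:
        self.adapters[name] = (A, B)  # A: r x d_in, B: d_out x r

    def forward(self, x: Sequence[float], adapter: Optional[str] = None) -> List[float]:
        y = matvec(self.base, x)
        if adapter is None:
            return y  # plain base-model output
        A, B = self.adapters[adapter]
        r = len(A)  # LoRA rank
        delta = matvec(B, matvec(A, x))
        return [yi + (self.alpha / r) * di for yi, di in zip(y, delta)]
```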