992 articles tagged with #ai-research. AI-curated summaries with sentiment analysis and key takeaways from 50+ sources.

AI · Neutral · arXiv – CS AI · Mar 17 · 6/10

🧠Researchers discovered that transformer language models process factual information through rotational dynamics rather than magnitude changes, actively suppressing incorrect answers instead of passively failing. This geometric pattern only emerges in models above 1.6B parameters, suggesting a phase transition in factual processing capabilities.

AI · Bullish · arXiv – CS AI · Mar 17 · 6/10

🧠Researchers introduce Truncated-Reasoning Self-Distillation (TRSD), a post-training method that enables AI language models to maintain accuracy while using shorter reasoning traces. The technique reduces computational costs by training models to produce correct answers from partial reasoning, achieving significant inference-time efficiency gains without sacrificing performance.

AI · Bullish · arXiv – CS AI · Mar 17 · 6/10

🧠Researchers propose a new framework that uses LLMs as code generators rather than per-instance evaluators for high-stakes decision-making, creating interpretable and reproducible AI systems. The approach generates executable decision logic once instead of querying LLMs for each prediction, demonstrated through venture capital founder screening with competitive performance while maintaining full transparency.

🧠 GPT-4

AI · Bullish · arXiv – CS AI · Mar 17 · 6/10

🧠Researchers introduce Pragma-VL, a new alignment algorithm for Multimodal Large Language Models that balances safety and helpfulness by improving visual risk perception and using contextual arbitration. The method outperforms existing baselines by 5-20% on multimodal safety benchmarks while maintaining general AI capabilities in mathematics and reasoning.

AI · Neutral · arXiv – CS AI · Mar 17 · 6/10

🧠Research reveals that LLM query rewriting in RAG systems shows highly domain-dependent performance, degrading retrieval effectiveness by 9% in financial domains while improving it by 5.1% in scientific contexts. The study identifies that effectiveness depends on whether rewriting improves or worsens lexical alignment between queries and domain-specific terminology.
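The lexical-alignment effect described above can be illustrated with a toy overlap measure. This is a minimal sketch, not the study's method: the Jaccard score, the example document, and both queries are assumptions chosen only to show how a rewrite can drift away from domain-specific terminology.

```python
def tokens(text: str) -> set[str]:
    """Lowercase whitespace tokenization (a deliberately crude stand-in)."""
    return set(text.lower().split())


def jaccard(query: str, document: str) -> float:
    """Share of distinct terms that the query and the document have in common."""
    q, d = tokens(query), tokens(document)
    union = q | d
    return len(q & d) / len(union) if union else 0.0


# Hypothetical financial document and queries, invented for illustration.
document = "company raised EBITDA margin guidance for FY"
original_query = "EBITDA margin guidance"
rewritten_query = "earnings profitability outlook"  # paraphrase drops the domain terms

before = jaccard(original_query, document)
after = jaccard(rewritten_query, document)
print(f"overlap before rewrite: {before:.2f}, after rewrite: {after:.2f}")
```

In this toy case the rewrite replaces exact domain terms with general synonyms, so lexical overlap with the document drops. A rewrite that adds the terminology a corpus actually uses would move the score in the other direction.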

AI · Neutral · arXiv – CS AI · Mar 17 · 6/10

🧠Researchers propose Evi-DA, an evidence-based technique that improves how large language models predict population response distributions across different cultures and domains. The method uses World Values Survey data and reinforcement learning to achieve up to 44% improvement in accuracy compared to existing approaches.

AI · Bullish · arXiv – CS AI · Mar 17 · 6/10

🧠Researchers have developed Resolving Interference (RI), a new framework that improves AI model merging by reducing cross-task interference when combining specialized models. The method makes models functionally orthogonal to other tasks using only unlabeled data, improving merging performance by up to 3.8% and generalization by up to 2.3%.

AI · Bullish · arXiv – CS AI · Mar 17 · 6/10

🧠Researchers introduce Geo-ADAPT, a new AI framework using Vision-Language Models for image geo-localization that adapts reasoning depth based on image complexity. The system uses an Optimized Locatability Score and specialized dataset to achieve state-of-the-art performance while reducing AI hallucinations.

AI · Bullish · arXiv – CS AI · Mar 17 · 6/10

🧠Researchers developed REFINE-DP, a hierarchical framework that combines diffusion policies with reinforcement learning to enable humanoid robots to perform complex loco-manipulation tasks. The system achieves over 90% success rate in simulation and demonstrates smooth autonomous execution in real-world environments for tasks like door traversal and object transport.

AI · Bullish · arXiv – CS AI · Mar 17 · 6/10

🧠Researchers propose FOUL (Federated On-server Unlearning), a new framework for efficiently removing specific participants' data from federated learning models without accessing client data. The approach reduces computational and communication costs while maintaining privacy compliance through a two-stage process that performs unlearning operations on the server side.

AI · Bullish · arXiv – CS AI · Mar 17 · 6/10

🧠Researchers propose CroBo, a new visual state representation learning framework that helps robotic agents better understand dynamic environments by encoding both semantic identities and spatial locations of scene elements. The framework uses a global-to-local reconstruction method that compresses observations into compact tokens, achieving state-of-the-art performance on robot policy learning benchmarks.

AI · Bullish · arXiv – CS AI · Mar 17 · 6/10

🧠Researchers introduce SmoothVLA, a new reinforcement learning framework that improves robot control by optimizing both task performance and motion smoothness. The system addresses the trade-off between stability and exploration in Vision-Language-Action models, achieving 13.8% better smoothness than standard RL methods.

AI · Bullish · arXiv – CS AI · Mar 17 · 6/10

🧠Researchers present Centered Reward Distillation (CRD), a new reinforcement learning framework for fine-tuning diffusion models that addresses brittleness issues in existing methods. The approach uses within-prompt centering and drift control techniques to achieve state-of-the-art performance in text-to-image generation while reducing reward hacking and convergence issues.

AI · Neutral · arXiv – CS AI · Mar 17 · 6/10

🧠Researchers studied computational resource allocation in AI retrieval systems for long-horizon agents, finding that re-ranking stages benefit more from powerful models and deeper candidate pools than query expansion stages. The study suggests concentrating compute power on re-ranking rather than distributing it uniformly across pipeline stages for better performance.

🧠 Gemini

AI · Bullish · arXiv – CS AI · Mar 17 · 6/10

🧠Researchers introduce MVHOI, a new AI framework that significantly improves human-object interaction video generation by handling complex 3D manipulations through a two-stage process using 3D foundation models. The system can create realistic long-duration videos showing intricate object manipulations from multiple viewpoints, addressing limitations of existing approaches that struggle with non-planar movements.

AI · Neutral · arXiv – CS AI · Mar 17 · 6/10

🧠Researchers conducted an empirical study on 16 Large Language Models to understand how they process tabular data, revealing a three-phase attention pattern and finding that tabular tasks require deeper neural network layers than math reasoning. The study analyzed attention dynamics, layer depth requirements, expert activation in MoE models, and the impact of different input designs on table understanding performance.

AI · Bullish · arXiv – CS AI · Mar 17 · 6/10

🧠Researchers have developed QA-Dragon, a new Query-Aware Dynamic RAG System that significantly improves knowledge-intensive Visual Question Answering by combining text and image retrieval strategies. The system achieved substantial performance improvements of 5-6% across different tasks in the Meta CRAG-MM Challenge at KDD Cup 2025.

AI · Bullish · Import AI (Jack Clark) · Mar 16 · 6/10

🧠Import AI 449 covers recent developments in AI research, including LLMs training other LLMs, a 72B-parameter distributed training run, and findings that computer vision tasks remain more challenging than generative text tasks. The newsletter highlights autonomous LLM refinement capabilities and post-training benchmark results showing significant AI capability growth.

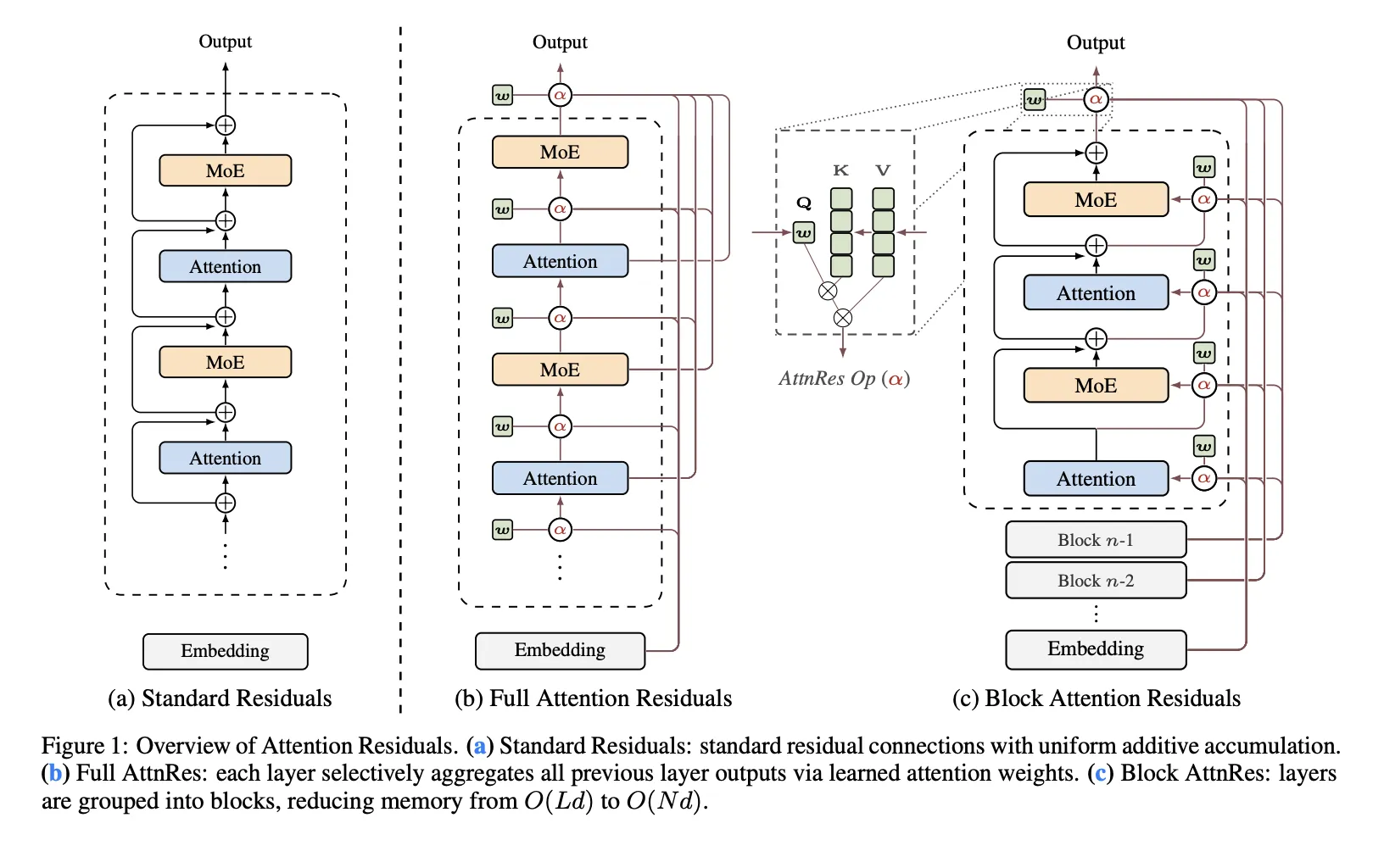

AI · Bullish · MarkTechPost · Mar 16 · 7/10

🧠Moonshot AI has released Attention Residuals, a new approach that replaces traditional fixed residual connections in Transformer architectures with depth-wise attention mechanisms. The innovation addresses structural problems in PreNorm architectures where all prior layer outputs are mixed equally, potentially improving model scaling capabilities.
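To make the contrast with a fixed residual connection concrete, here is a minimal sketch of attention over depth. The summary gives no formula, so everything below is an assumption rather than Moonshot AI's formulation: the hypothetical `depthwise_mix` helper scores each earlier layer output against a query vector and mixes them with softmax weights.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def depthwise_mix(layer_outputs: list[list[float]], query: list[float]):
    """Mix earlier layer outputs with attention weights (hypothetical helper).

    Each layer output is scored by its dot product with `query`, so the
    mixing weights depend on content instead of being fixed and equal.
    """
    scores = [sum(q * h for q, h in zip(query, out)) for out in layer_outputs]
    weights = softmax(scores)
    dim = len(layer_outputs[0])
    mixed = [sum(w * out[j] for w, out in zip(weights, layer_outputs))
             for j in range(dim)]
    return weights, mixed


# Three toy layer outputs with two features each (made-up values).
layer_outputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

# A zero query scores every layer the same, which reproduces equal mixing.
uniform_weights, uniform_mix = depthwise_mix(layer_outputs, [0.0, 0.0])

# A non-zero query shifts most of the weight onto the best-matching layer.
focused_weights, focused_mix = depthwise_mix(layer_outputs, [5.0, 5.0])
```

With the zero query every layer receives weight 1/3, which mirrors the equal mixing the summary attributes to PreNorm residual streams. With the second query the third layer output dominates the mix.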

AI · Bullish · arXiv – CS AI · Mar 16 · 6/10

🧠Researchers have developed ToolTree, a new Monte Carlo tree search-based planning system for LLM agents that improves tool selection and usage through dual-feedback evaluation and bidirectional pruning. The system achieves approximately 10% performance gains over existing methods while maintaining high efficiency across multiple benchmarks.

AI · Bullish · arXiv – CS AI · Mar 16 · 6/10

🧠Researchers propose AMRO-S, a new routing framework for multi-agent LLM systems that uses ant colony optimization to improve efficiency and reduce costs. The system addresses key deployment challenges like high inference costs and latency while maintaining performance quality through semantic-aware routing and interpretable decision-making.

AI · Neutral · arXiv – CS AI · Mar 16 · 6/10

🧠Researchers propose Global Evolutionary Refined Steering (GER-steer), a new training-free framework for controlling Large Language Models without fine-tuning costs. The method addresses issues with existing activation engineering approaches by using geometric stability to improve steering vector accuracy and reduce noise.

AI · Neutral · arXiv – CS AI · Mar 16 · 6/10

🧠Researchers introduce Budget-Sensitive Discovery Score (BSDS), a formally verified framework for evaluating AI-guided scientific candidate selection under budget constraints. Testing on drug discovery datasets reveals that simple random forest models outperform large language models, with LLMs providing no marginal value over existing trained classifiers.

AI · Neutral · arXiv – CS AI · Mar 16 · 6/10

🧠Research finds that global correlation metrics can give a misleading picture of how well large language models perform as judges in actual best-of-n selection tasks. A study using 5,000 prompts found that judges with moderate global correlation (r=0.47) captured only 21% of the potential improvement, primarily because of poor within-prompt ranking despite decent overall agreement.
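A toy calculation shows how a judge can correlate well with true quality overall and still add nothing to best-of-n selection. This is a sketch under stated assumptions: the quality values, judge scores, and the `captured_fraction` definition (gain over a random pick divided by the oracle's gain) are invented for illustration and are not the study's data or exact metric.

```python
import math


def pearson(xs: list[float], ys: list[float]) -> float:
    """Plain Pearson correlation coefficient."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def captured_fraction(true_quality: dict, judge_scores: dict) -> float:
    """Gain from picking the judge's favorite, relative to the oracle's gain."""
    picked, random_pick, oracle = [], [], []
    for prompt, quality in true_quality.items():
        scores = judge_scores[prompt]
        favorite = max(range(len(scores)), key=scores.__getitem__)
        picked.append(quality[favorite])
        random_pick.append(sum(quality) / len(quality))  # expected random choice
        oracle.append(max(quality))

    def mean(values):
        return sum(values) / len(values)

    return (mean(picked) - mean(random_pick)) / (mean(oracle) - mean(random_pick))


# Made-up data: prompts differ a lot in difficulty, responses differ a little.
true_quality = {"p1": [1.0, 2.0, 3.0], "p2": [11.0, 12.0, 13.0], "p3": [21.0, 22.0, 23.0]}
# The judge tracks prompt-level difficulty but scrambles the order within prompts.
judge_scores = {"p1": [2.0, 3.0, 1.0], "p2": [12.0, 11.0, 13.0], "p3": [23.0, 21.0, 22.0]}

flat_true = [q for qs in true_quality.values() for q in qs]
flat_judge = [s for ss in judge_scores.values() for s in ss]

global_r = pearson(flat_true, flat_judge)
captured = captured_fraction(true_quality, judge_scores)
print(f"global r = {global_r:.2f}, captured fraction = {captured:.2f}")
```

Here the global correlation is about 0.99 because between-prompt differences dominate, while the captured fraction is 0.0 because the judge's within-prompt picks do no better than a random choice.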

AI · Bullish · arXiv – CS AI · Mar 16 · 6/10

🧠Researchers have developed Feynman, an AI agent that generates high-quality diagram-caption pairs at scale for training vision-language models. The system created a dataset of 100k+ well-aligned diagrams and introduced Diagramma, a benchmark for evaluating visual reasoning capabilities.