AI · Bullish · arXiv – CS AI · 3d ago · 7/10

🧠Researchers propose Self-Trained Verification (STV), an approach that improves AI reasoning models by training verifiers to catch self-generated errors, using reference solutions as supervision. The method doubles accuracy on hard math problems and achieves a 14x improvement on scientific reasoning tasks. It also enables more effective self-training through verifier-in-the-loop training, which boosts performance by a further 33%.

AI · Bullish · Crypto Briefing · 4d ago · 7/10

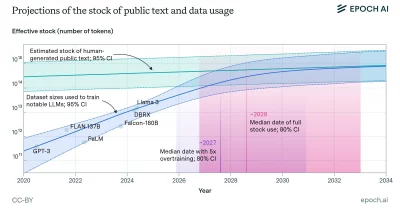

🧠Epoch AI forecasts that inference compute—the computational resources needed to run trained AI models—will surpass training compute by 2030, fundamentally shifting where resources and capital flow in AI infrastructure. This transition has major implications for data center investment, energy consumption patterns, and the competitive landscape of AI service providers.

AI · Bullish · OpenAI News · May 11 · 7/10

🧠Enterprises are advancing AI deployment beyond initial pilots by implementing governance frameworks, trust mechanisms, workflow optimization, and quality assurance systems. This transition from experimentation to scaled operations represents a critical phase where organizational maturity determines whether AI investments deliver sustainable competitive advantage.

AI · Bullish · arXiv – CS AI · May 9 · 7/10

🧠Researchers introduce Recursive Agent Optimization (RAO), a reinforcement learning method enabling AI agents to spawn and delegate tasks to themselves recursively. This approach allows agents to handle longer contexts, solve harder problems through divide-and-conquer strategies, and achieve better training efficiency with reduced computational time.

AI · Bullish · Crypto Briefing · Apr 10 · 7/10

🧠Brad Lightcap discusses how scaling laws demonstrate that larger AI models consistently outperform smaller ones, while highlighting the evolution from language models to conversational AI interfaces and the emerging phenomenon of AI agency. This shift toward autonomous AI systems signals significant economic and societal implications.

AI · Neutral · arXiv – CS AI · Mar 17 · 7/10

🧠Researchers propose the Institutional Scaling Law, challenging the assumption that AI performance improves monotonically with model size. The framework shows that institutional fitness (capability, trust, affordability, sovereignty) has an optimal scale beyond which capability and trust diverge, suggesting orchestrated domain-specific models may outperform large generalist models.

AI · Neutral · arXiv – CS AI · Mar 17 · 7/10

🧠Researchers propose shifting from large monolithic AI models to domain-specific superintelligence (DSS) societies due to unsustainable energy costs and physical constraints of current generative AI scaling approaches. The alternative involves smaller, specialized models working together through orchestration agents, potentially enabling on-device deployment while maintaining reasoning capabilities.

AI · Bullish · arXiv – CS AI · Mar 6 · 7/10

🧠A research paper presents a 10-year roadmap for coordinated AI and hardware co-development, targeting 1000x efficiency improvements in AI training and inference by 2035. The vision emphasizes energy efficiency over raw compute scaling, proposing integrated solutions across algorithms, architectures, and systems to enable sustainable AI deployment from cloud to edge environments.

AI · Bullish · OpenAI News · Feb 27 · 7/10 · 7

🧠A major AI company announces $110B in new investment funding at a $730B pre-money valuation. The funding round includes significant contributions from three major tech players: $30B from SoftBank, $30B from NVIDIA, and $50B from Amazon.

AI · Bullish · Google DeepMind Blog · Feb 17 · 7/10 · 5

🧠Google DeepMind launches the National Partnerships for AI initiative in India, focusing on scaling artificial intelligence applications in science and education sectors. This represents a significant expansion of AI infrastructure and collaboration in one of the world's largest emerging markets.

AI · Bullish · OpenAI News · Jan 15 · 7/10 · 6

🧠OpenAI has launched a new Request for Proposal (RFP) initiative aimed at strengthening the U.S. AI supply chain through domestic manufacturing. The program focuses on accelerating local production capabilities, creating employment opportunities, and scaling AI infrastructure to reduce dependence on foreign supply chains.

AI · Bullish · OpenAI News · Oct 23 · 7/10 · 6

🧠OpenAI has released an economic blueprint for South Korea outlining how the country can develop sovereign AI capabilities and leverage strategic partnerships to scale trusted AI systems. The blueprint focuses on driving economic growth through AI development and implementation strategies.

AI · Neutral · Crypto Briefing · 3d ago · 6/10

🧠Nvidia is partnering with homebuilders to deploy small AI data centers in residential backyards, leveraging unused residential power capacity to decentralize AI infrastructure. While the XFRA initiative could reduce strain on centralized data centers and create new revenue streams for homeowners, it faces significant obstacles including scalability concerns, technical integration challenges, and uncertain homeowner adoption rates.

🏢 Nvidia

AI · Bearish · CoinTelegraph – AI · Mar 11 · 7/10

🧠Current AI scaling approaches are consuming massive energy resources while increasing error rates rather than improving performance. The article suggests neurosymbolic reasoning and decentralized cognitive systems as more reliable alternatives to traditional scaling methods.

AI · Bearish · Wired – AI · Mar 5 · 6/10

🧠ByteDance's new AI video model Seedance 2.0 is facing significant operational challenges due to compute capacity limitations and mounting copyright complaints. The company's AI ambitions are being constrained by infrastructure bottlenecks and legal concerns over content generation.

AI · Bullish · Crypto Briefing · Mar 4 · 6/10 · 2

🧠CoreWeave, a specialized AI cloud infrastructure provider, secured a multi-year partnership with AI search company Perplexity to power its workloads, and CoreWeave's shares rose on the news. The partnership underscores the increasing demand for specialized cloud services tailored to AI applications as the industry scales.

AI · Neutral · arXiv – CS AI · Mar 3 · 7/10 · 8

🧠Researchers propose a new method called total Variation-based Advantage aligned Constrained policy Optimization to address policy lag issues in distributed reinforcement learning systems. The approach aims to improve performance when scaling on-policy learning algorithms by mitigating the mismatch between behavior and learning policies during high-frequency updates.

AI · Bullish · arXiv – CS AI · Mar 3 · 7/10 · 8

🧠Researchers propose GAC (Gradient Alignment Control), a new method to stabilize asynchronous reinforcement learning training for large language models. The technique addresses training instability issues that arise when scaling RL to modern AI workloads by regulating gradient alignment and preventing overshooting.

$NEAR

AI · Bullish · Hugging Face Blog · Feb 26 · 6/10 · 6

🧠The article discusses Mixture of Experts (MoEs) architecture in transformer models, which allows for scaling model capacity while maintaining computational efficiency. This approach enables larger, more capable AI models by activating only relevant expert networks for specific inputs.
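The sparse activation described above can be sketched as top-k routing: a router scores every expert, only the highest-scoring few are run, and their outputs are mixed by softmax weights. This is a minimal illustration under stated assumptions, not the article's implementation. The names `moe_forward`, `router_weights`, and `experts`, along with the toy expert functions and all numeric values, are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x, router_weights, experts, top_k=2):
    """Run only the top_k experts for input x and mix their outputs.

    Assumes each expert returns a vector of the same length as x.
    """
    # One router logit per expert: dot product of x with that expert's row.
    logits = [sum(w * xi for w, xi in zip(row, x)) for row in router_weights]
    # Indices of the top_k highest-scoring experts.
    chosen = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:top_k]
    # Gate weights are normalized over the chosen experts only.
    gates = softmax([logits[i] for i in chosen])
    out = [0.0] * len(x)
    for gate, idx in zip(gates, chosen):
        y = experts[idx](x)  # the unchosen experts are never evaluated
        out = [o + gate * yi for o, yi in zip(out, y)]
    return out, chosen


# Hypothetical toy setup: four "experts" that just scale their input.
experts = [lambda v, s=s: [s * vi for vi in v] for s in (1.0, 2.0, 3.0, 4.0)]
router_weights = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]

output, active = moe_forward([2.0, 1.0], router_weights, experts, top_k=2)
print(active, output)
```

With `top_k=2` and four experts, only half of the expert computation runs per input, which is the sense in which capacity can grow without a matching increase in per-input compute.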

AI · Neutral · Last Week in AI · Jan 28 · 6/10

🧠OpenAI plans to test advertisements in ChatGPT as the company faces significant financial pressures from high operational costs. The article also covers ongoing issues at Thinking Machines and discusses STEM, a new approach to scaling transformer models through embedding modules.

🏢 OpenAI 🧠 ChatGPT

AI · Bullish · OpenAI News · Jan 18 · 6/10 · 5

🧠OpenAI's business model is designed to scale directly with advances in artificial intelligence capabilities, encompassing multiple revenue streams including subscriptions, API services, advertising, commerce, and compute resources. The growth strategy is fundamentally tied to increasing ChatGPT adoption and user engagement across these diverse monetization channels.

AI · Bullish · Hugging Face Blog · Oct 9 · 6/10 · 8

🧠The article discusses scaling AI-based data processing using Hugging Face in combination with Dask for distributed computing. This approach enables efficient handling of large-scale machine learning workloads by leveraging parallel processing capabilities.

AI · Neutral · Hugging Face Blog · Aug 17 · 4/10 · 6

🧠A technical guide introducing 8-bit matrix multiplication techniques for scaling transformer models with the transformers, accelerate, and bitsandbytes libraries. It focuses on running large AI models more efficiently through reduced-precision computation.
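One common way to perform a matrix multiplication in 8 bits is absmax quantization: scale each vector so its largest magnitude maps to 127, multiply the resulting integers, then rescale the product. The summary does not say which scheme the guide uses, so the sketch below is an assumption. It does not reproduce the bitsandbytes API, and the helper names `quantize_absmax` and `int8_matmul` are hypothetical.

```python
def quantize_absmax(vec):
    """Map floats to integers in [-127, 127] using the vector's largest magnitude."""
    absmax = max(abs(v) for v in vec)
    scale = absmax / 127 if absmax > 0 else 1.0
    return [round(v / scale) for v in vec], scale


def int8_matmul(a, b):
    """Approximate a (m x k) times b (k x n) using int8-range operands.

    Rows of a and columns of b are each quantized with their own scale,
    products are accumulated as integers, and the result is rescaled to floats.
    """
    columns = [list(col) for col in zip(*b)]
    q_rows = [quantize_absmax(row) for row in a]
    q_cols = [quantize_absmax(col) for col in columns]
    return [
        [sum(x * y for x, y in zip(q_row, q_col)) * s_row * s_col
         for q_col, s_col in q_cols]
        for q_row, s_row in q_rows
    ]


# Illustrative values only.
a = [[1.0, -2.0], [0.5, 4.0]]
b = [[3.0, 0.0], [-1.0, 2.0]]
approx = int8_matmul(a, b)
print(approx)
```

The result is close to, but not exactly, the full-precision product, because each value is rounded to one of 255 levels. That rounding error is the trade-off for storing and multiplying smaller operands.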

AI · Neutral · Hugging Face Blog · Oct 23 · 1/10 · 5

🧠No article body was available to summarize. The title suggests the article introduces HUGS, a platform or service for scaling AI with open models.