AI · Bearish · arXiv – CS AI · 3d ago · 7/10

🧠Research reveals that voice cloning technology doesn't faithfully replicate voices but instead applies systematic style transfer, making cloned voices sound more authoritative and trustworthy than originals. The findings expose significant limitations in current voice cloning models, including homogenization of speaker characteristics and potential risks related to human behavioral manipulation through altered voice perception.

AI · Bullish · arXiv – CS AI · Apr 14 · 7/10

🧠Researchers introduce Audio Flamingo Next (AF-Next), an advanced open-source audio-language model that processes speech, sound, and music with support for inputs up to 30 minutes. The model incorporates a new temporal reasoning approach and demonstrates competitive or superior performance compared to larger proprietary alternatives across 20 benchmarks.

AI · Neutral · Ars Technica – AI · Mar 26 · 7/10

🧠Google is launching Gemini 3.1 Flash Live, a new conversational audio AI system that is being integrated into search, the Gemini platform, and developer tools. This advance in conversational AI could make it increasingly difficult for users to distinguish human from AI interactions.

🧠 Gemini

AI · Bullish · MarkTechPost · Mar 26 · 7/10

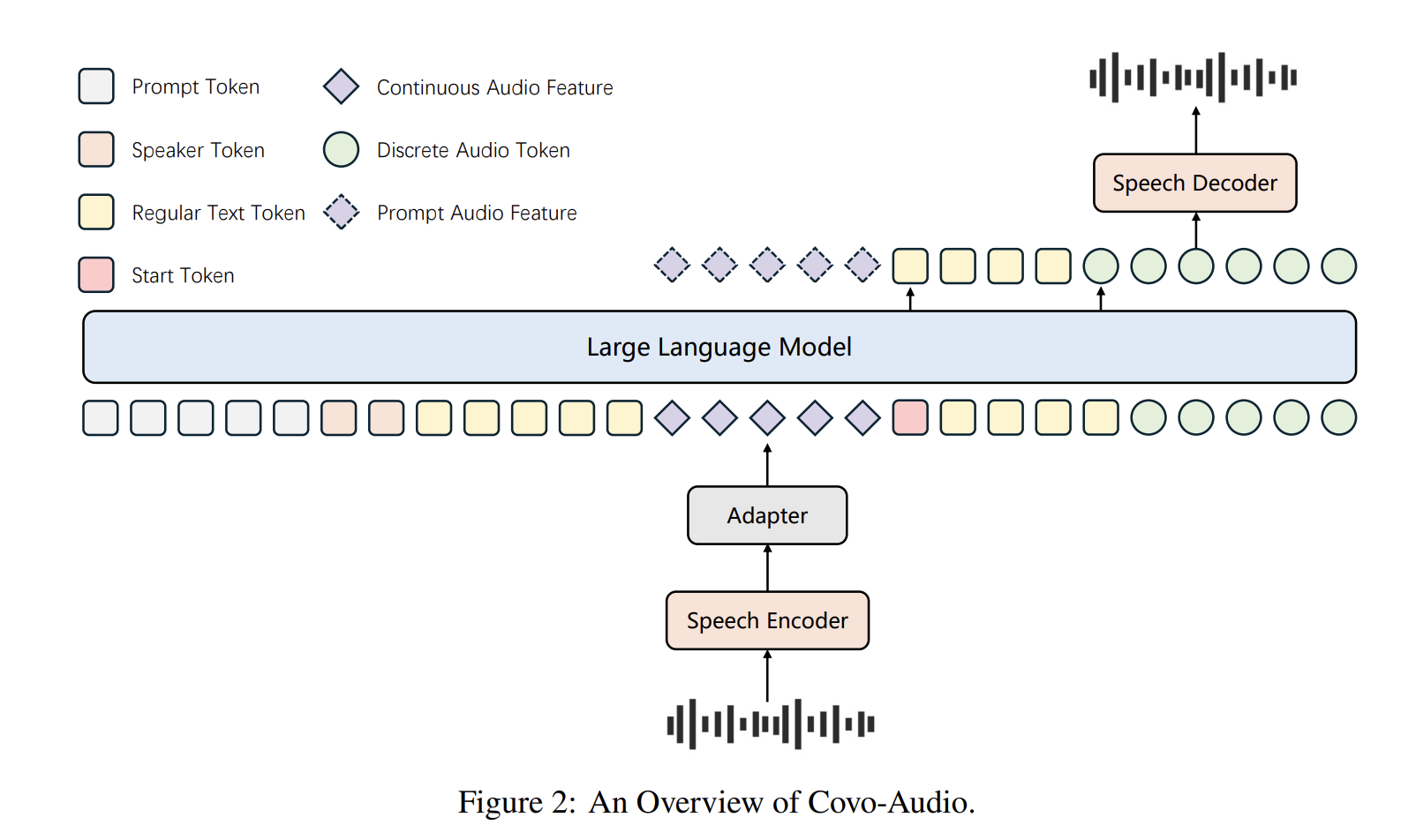

🧠Tencent AI Lab has open-sourced Covo-Audio, a 7B-parameter Large Audio Language Model that can process continuous audio inputs and generate audio outputs in real-time. The model unifies speech processing and language intelligence within a single end-to-end architecture designed for seamless cross-modal interaction.

AI · Bullish · arXiv – CS AI · Mar 5 · 7/10

🧠Researchers have developed a new method called Latent-Control Heads (LatCHs) that enables efficient control of audio generation in diffusion models with significantly reduced computational costs. The approach operates directly in latent space, avoiding expensive decoder steps and requiring only 7M parameters and 4 hours of training while maintaining audio quality.

AI · Bearish · arXiv – CS AI · Mar 4 · 6/10

🧠Researchers developed a new AI attack method that can fool speaker recognition systems with 10x fewer attempts than previous approaches. The technique uses feature-aligned inversion to optimize attacks in latent space, achieving a success rate of up to 91.65% with only 50 queries.

AI · Bullish · TechCrunch – AI · Feb 27 · 7/10

🧠AI music generator Suno has reached 2 million paid subscribers and achieved $300 million in annual recurring revenue. The platform allows users to create music using natural language prompts, making music generation accessible to users without musical experience.

AI · Bullish · Google DeepMind Blog · May 20 · 7/10

🧠Google announces a preview of Gemma 3n, a new open-source AI model optimized for mobile devices with multimodal capabilities including audio processing. The model features a unique 2-in-1 architecture designed to enable fast, interactive AI applications directly on devices.

AI · Neutral · arXiv – CS AI · Apr 13 · 6/10

🧠Researchers propose Noise-Aware In-Context Learning (NAICL), a plug-and-play method to reduce hallucinations in auditory large language models without expensive fine-tuning. The approach uses a noise prior library to guide models toward more conservative outputs, achieving a 37% reduction in hallucination rates while establishing a new benchmark for evaluating audio understanding systems.

AI · Bullish · Crypto Briefing · Apr 10 · 6/10

🧠Shubham Saboo discusses three emerging technologies reshaping AI capabilities: the Plod device for audio context capture, OpenClaw for enhanced AI agent functionalities, and effective onboarding strategies. These innovations enable AI agents to autonomously manage business operations and streamline workflows with improved productivity and efficiency.

AI · Bullish · arXiv – CS AI · Mar 26 · 6/10

🧠Researchers developed novel 'dropin' and 'plasticity' algorithms inspired by brain neuroplasticity to improve deepfake audio detection efficiency. The methods dynamically adjust neuron counts in model layers, achieving up to 66% reduction in error rates while improving computational efficiency across multiple architectures including ResNet and Wav2Vec.

AI · Neutral · arXiv – CS AI · Mar 12 · 6/10

🧠Researchers propose HIR-SDD, a new framework combining Large Audio Language Models with human-inspired reasoning to detect speech deepfakes. The method aims to improve generalization across different audio domains and provide interpretable explanations for deepfake detection decisions.

AI · Neutral · arXiv – CS AI · Mar 11 · 6/10

🧠Researchers introduce SCENEBench, a new benchmark for evaluating Large Audio Language Models (LALMs) beyond speech recognition, focusing on real-world audio understanding including background sounds, noise localization, and vocal characteristics. Tests of five state-of-the-art models revealed significant performance gaps: the models scored below random chance on some tasks while achieving high accuracy on others.

AI · Bullish · arXiv – CS AI · Mar 5 · 5/10

🧠Researchers have developed MeanFlowSE, a new generative AI model for speech enhancement that performs single-step inference instead of requiring multiple computational steps. The method achieves strong audio quality with substantially lower computational costs, making it suitable for real-time applications without needing knowledge distillation or external teachers.

AI · Bullish · arXiv – CS AI · Mar 2 · 7/10

🧠Researchers have introduced Hello-Chat, an end-to-end audio language model designed to create more realistic and emotionally resonant AI conversations. The model addresses the robotic nature of existing Large Audio Language Models by using real-life conversation data and achieving breakthrough performance in prosodic naturalness and emotional alignment.

AI · Bullish · Google DeepMind Blog · Jun 3 · 5/10

🧠Gemini 2.5 introduces new AI-powered audio dialog and generation capabilities, expanding Google's multimodal AI offerings. This represents an incremental advancement in conversational AI technology with enhanced audio processing features.

AI · Neutral · The Verge – AI · Mar 25 · 5/10

🧠Google has released Lyria 3 Pro, an upgraded AI music generation tool that can create tracks up to three minutes long, six times longer than the previous 30-second limit. The tool allows users to prompt for specific song elements like intros, choruses, and bridges, and can generate both music and lyrics from text prompts or reference photos.

AI · Bullish · arXiv – CS AI · Mar 5 · 4/10

🧠Researchers have introduced LabelBuddy, an open-source audio annotation tool that uses AI assistance to bridge the gap between human intent and machine understanding in music information retrieval. The tool features collaborative tagging, containerized AI model backends, and supports multi-user consensus for creating richer audio datasets.

AI · Neutral · arXiv – CS AI · Mar 4 · 4/10

🧠Researchers have developed TVF (Time-Varying Filtering), a lightweight 1-million-parameter speech enhancement model that combines digital signal processing with deep learning for real-time speech denoising. The model uses a neural network to predict coefficients for a 35-band IIR filter cascade, offering interpretable processing while adapting dynamically to changing noise conditions.

AI · Neutral · arXiv – CS AI · Mar 3 · 4/10

🧠CodecFlow is a new neural codec-based framework for speech bandwidth extension that efficiently reconstructs high-quality audio in compact latent space. The system uses conditional flow matching and residual vector quantization to improve speech clarity by restoring high-frequency content from low-bandwidth audio.

AI · Bullish · Hugging Face Blog · Jun 3 · 5/10

🧠The article discusses real-time AI sound generation technology running on Arm processors, positioning it as a tool for creative freedom. This represents an advancement in AI-powered audio creation capabilities on mobile and edge devices.

AI · Neutral · arXiv – CS AI · Mar 3 · 4/10

🧠Researchers introduce SyncTrack, an AI model for multi-track music generation that addresses rhythmic stability and synchronization issues in existing models. The model uses track-shared modules for common rhythm and track-specific modules for diverse timbres, introducing new metrics to evaluate multi-track music quality.

AI · Neutral · arXiv – CS AI · Mar 2 · 4/10

🧠Researchers introduce AudioCapBench, a new benchmark for evaluating how well large multimodal AI models can generate captions for audio content across sound, music, and speech domains. The study tested 13 models from OpenAI and Google Gemini, finding that Gemini models generally outperformed OpenAI models in overall captioning quality, though all models struggled most with music captioning.

AI · Neutral · Hugging Face Blog · Dec 20 · 1/10

🧠The article is titled 'Evaluating Audio Reasoning with Big Bench Audio', but no article body was provided, so no summary of its content is available.