AI · Bearish · arXiv – CS AI · 4d ago · 7/10

🧠A research paper reveals that large language models used to create and evaluate benchmarks systematically favor themselves, introducing significant bias into automated evaluation systems. The self-bias stems from both test generation and evaluation stages, with stylistic tendencies creating model-specific outputs that inflate scores, even when diversity controls are explicitly applied.

AI · Neutral · arXiv – CS AI · Apr 6 · 7/10

🧠Researchers studied weight-space model merging for multilingual machine translation and found it significantly degrades performance when target languages differ. Analysis reveals that fine-tuning redistributes rather than sharpens language selectivity in neural networks, increasing representational divergence in higher layers that govern text generation.

AI · Neutral · arXiv – CS AI · 2d ago · 6/10

🧠Researchers introduce Source-Grounded Semantic Reinforcement Learning (SG-SRL), a framework that leverages abundant source-language monolingual data to improve low-resource target-language generation through cross-lingual semantic rewards. The approach demonstrates significant gains in semantic grounding and factual coverage while maintaining fluency through a lightweight recovery stage.

AI · Bullish · arXiv – CS AI · 2d ago · 6/10

🧠Researchers introduce Loong, an AI agent designed to improve long document translation by selectively retrieving relevant context from a 3E memory module rather than processing all available information. The system uses reinforcement learning to optimize context selection and demonstrates significant translation quality improvements across multiple language pairs, achieving gains up to 13 points on standard evaluation metrics.

AI · Neutral · arXiv – CS AI · 3d ago · 6/10

🧠Researchers developed methods to preserve gender information in English-to-Hindi machine translation, a challenge caused by Hindi's ergative and honorific grammatical structures. Two inference-time interventions—Source-Aware Reranker and Phenomenon-Aware Reranker—significantly improved gender preservation but revealed a tradeoff between cultural fidelity and translation fluency.

🧠 GPT-4

AI · Bullish · arXiv – CS AI · 3d ago · 6/10

🧠Researchers present a method for aggressively pruning expert modules from mixture-of-experts large language models to create specialized translation systems. The approach removes up to 90% of experts with minimal performance degradation, demonstrating that translation tasks require only a fraction of a full LLM's parameters, enabling substantial model compression.

AI · Neutral · arXiv – CS AI · Apr 20 · 6/10

🧠Researchers introduce CLewR, a curriculum learning strategy that improves machine translation performance in large language models by reordering training data from easy to hard examples with periodic restarts. The approach demonstrates consistent improvements across multiple model families and preference optimization techniques, addressing a previously underexplored aspect of LLM training methodology.

🧠 Llama

AI · Neutral · arXiv – CS AI · Apr 14 · 6/10

🧠Researchers identified systematic reasoning errors in machine translation systems across seven language pairs, finding that while these errors can be detected with high precision in some languages like Urdu, correcting them produces minimal improvements in translation quality. This suggests that reasoning traces in neural machine translation models lack genuine faithfulness to their outputs, raising questions about the reliability of reasoning-based approaches in translation systems.

AI · Neutral · arXiv – CS AI · Apr 14 · 6/10

🧠Researchers introduce a Cross-Lingual Mapping Task during LLM pre-training to improve multilingual performance across languages with varying data availability. The method achieves significant improvements in machine translation, cross-lingual question answering, and multilingual understanding without requiring extensive parallel data.

AI · Neutral · arXiv – CS AI · Apr 10 · 6/10

🧠Researchers evaluated how well large language models can perform formal grammar-based translation tasks using in-context learning, finding that LLM translation accuracy degrades significantly with grammar complexity and sentence length. The study identifies specific failure modes including vocabulary hallucination and untranslated source words, revealing fundamental limitations in LLMs' ability to apply formal grammatical rules to translation tasks.

AI · Neutral · arXiv – CS AI · Mar 12 · 6/10

🧠Researchers introduce DIBJudge, a new framework to address systematic bias in large language models that favor machine-translated text over human-authored content in multilingual evaluations. The solution uses variational information compression to isolate bias factors and improve LLM judgment accuracy across languages.

AI · Bearish · arXiv – CS AI · Mar 3 · 6/10 · 4

🧠A new research study analyzes how Large Language Models are impacting Wikipedia content and structure, finding approximately 1% influence in certain categories. The research warns of potential risks to AI benchmarks and natural language processing tasks if Wikipedia becomes contaminated by LLM-generated content.

AI · Neutral · arXiv – CS AI · Mar 26 · 4/10

🧠Researchers developed Konkani LLM, a specialized language model for the low-resource Indian language Konkani, using a synthetic 100k instruction dataset. The model addresses training data scarcity across multiple scripts (Devanagari, Romi, Kannada) and demonstrates competitive performance against proprietary models in machine translation tasks.

🧠 Gemini · 🧠 Llama

AI · Neutral · arXiv – CS AI · Mar 12 · 4/10

🧠Researchers developed an automated framework to evaluate Large Language Models' effectiveness in translating Mandarin Chinese to English, comparing GPT-4, GPT-4o, and DeepSeek against Google Translate. While LLMs performed well on news translation, they showed varying results with literary texts, with DeepSeek excelling at cultural subtleties and GPT-4o/DeepSeek better at semantic conservation.

🏢 Meta · 🧠 GPT-4

AI · Bullish · arXiv – CS AI · Mar 11 · 5/10

🧠The DIMT 2025 Challenge advances research in Document Image Machine Translation, featuring OCR-free and OCR-based tracks for translating text in complex document layouts. The competition attracted 69 teams with 27 valid submissions, demonstrating that large-model approaches show promise for handling complex document translation tasks.

AI · Neutral · arXiv – CS AI · Mar 4 · 4/10 · 3

🧠Researchers demonstrate that machine translation quality can be accurately predicted without running translation systems, using only token fertility ratios, token counts, and linguistic metadata. The study achieved R² scores of 0.66-0.72 when forecasting GPT-4o translation performance across 203 languages in the FLORES-200 benchmark.

AI · Neutral · arXiv – CS AI · Mar 3 · 4/10 · 4

🧠Researchers developed an optimized speech-to-text translation pipeline for Nepali-to-English that addresses punctuation loss issues in low-resource language processing. By implementing a Punctuation Restoration Module, they achieved a 4.90 BLEU point improvement over baseline systems, demonstrating significant quality gains for cascaded translation architectures.

AI · Neutral · arXiv – CS AI · Mar 2 · 5/10 · 4

🧠A study evaluated large language models (Claude, Gemini, ChatGPT) translating Ancient Greek texts, finding high performance on previously translated works (95.2/100) but declining quality on untranslated technical texts (79.9/100). Terminology rarity was identified as a strong predictor of translation failure, with rare terms causing catastrophic performance drops.

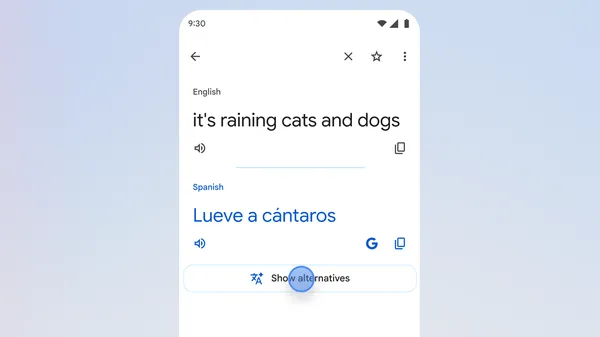

AI · Bullish · Google AI Blog · Feb 26 · 4/10

🧠Google has introduced new AI-powered features to Google Translate, including 'understand' and 'ask' buttons that help users navigate the complexities of natural language translation. These updates aim to provide more context and deeper understanding for users working with translations.

AI · Neutral · Hugging Face Blog · Nov 3 · 3/10 · 6

🧠The article title suggests content about porting a fairseq WMT19 translation system to the transformers framework. However, the article body appears to be empty or unavailable, preventing detailed analysis of the technical implementation or implications.