AI · Bullish · Crypto Briefing · 2d ago · 7/10

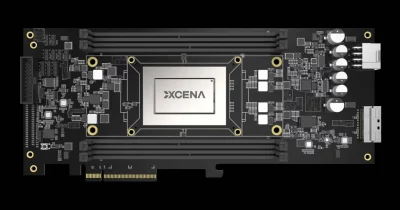

🧠XCENA, an AI chip startup, raised $135M in Series B funding, achieving a $570M valuation. The company's memory-centric AI chip technology aims to improve data processing efficiency and could influence the competitive landscape of AI hardware development.

AI · Bullish · arXiv – CS AI · 2d ago · 7/10

🧠Researchers introduce Metacognitive Memory Policy Optimization (MMPO), a novel training method that improves how AI language model agents manage memory across long-horizon tasks. The approach uses Belief Entropy—a self-supervised metric measuring uncertainty about task state—to provide fine-grained supervision during memory summarization, enabling agents to maintain 97.1% performance even with 1.75M-token contexts.

AI · Bullish · arXiv – CS AI · 3d ago · 7/10

🧠Researchers propose Hurwitz Quaternion Multiplicative Quantization (HQMQ), a calibration-free method for compressing KV caches in large language models using quaternion mathematics. The technique achieves 5x compression with minimal perplexity loss, matching full-precision performance at ~5 bits while outperforming existing quantization methods across five major model architectures.

🧠 Llama

AI · Bullish · arXiv – CS AI · 4d ago · 7/10

🧠Researchers have developed a bias correction technique for quantizing KV-cache memory in video diffusion models, addressing a fundamental problem where quantization noise causes inflated attention to cached data. The method recovers near-full quality video generation while using 50% less memory than standard approaches, enabling longer video synthesis without sacrificing output quality.

AI · Bullish · arXiv – CS AI · May 12 · 7/10

🧠Researchers propose DeMem, a decision-centric memory framework that optimizes agent memory allocation based on preserving distinctions needed for sound decision-making rather than descriptive accuracy. Using rate-distortion theory, the approach identifies what information can be safely forgotten under memory constraints and demonstrates performance gains on long-horizon language agent tasks.

AI · Bullish · arXiv – CS AI · Apr 20 · 7/10

🧠OjaKV introduces a novel framework for compressing key-value caches in large language models through online low-rank projection, addressing a critical memory bottleneck in long-context inference. The method combines selective full-rank storage for important tokens with adaptive compression for intermediate tokens, maintaining accuracy while reducing memory consumption without requiring model fine-tuning.

🧠 Llama

AI · Bullish · arXiv – CS AI · Apr 14 · 7/10

🧠IceCache is a new memory management technique for large language models that reduces KV cache memory consumption by 75% while maintaining 99% accuracy on long-sequence tasks. The method combines semantic token clustering with PagedAttention to intelligently offload cache data between GPU and CPU, addressing a critical bottleneck in LLM inference on resource-constrained hardware.
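
To put a 75% KV cache reduction in perspective, here is a rough back-of-the-envelope sketch using the standard formula for KV cache size (one key and one value tensor per layer). The model shape below is a hypothetical example, not taken from the IceCache paper, and the calculation ignores paging overhead and any metadata the method itself may need.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   bytes_per_value=2, batch_size=1):
    """Size of a dense KV cache in bytes.

    The leading factor of 2 accounts for storing both keys and values.
    bytes_per_value=2 corresponds to fp16/bf16 storage.
    """
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * bytes_per_value * batch_size)


def after_reduction(total_bytes, reduction):
    """Bytes remaining after removing a fraction `reduction` of the cache."""
    return total_bytes * (1 - reduction)


# Hypothetical model shape, chosen only for illustration.
full = kv_cache_bytes(num_layers=32, num_kv_heads=8, head_dim=128,
                      seq_len=131072)
reduced = after_reduction(full, 0.75)

print(f"full KV cache:       {full / 2**30:.1f} GiB")     # 16.0 GiB
print(f"after 75% reduction: {reduced / 2**30:.1f} GiB")  # 4.0 GiB
```

Under these assumed dimensions, a single 128K-token sequence drops from 16 GiB to 4 GiB of resident cache, which is the difference between exhausting and fitting on many consumer GPUs.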

AI · Bullish · arXiv – CS AI · Mar 26 · 7/10

🧠Researchers introduce Moonwalk, a new algorithm that solves backpropagation's memory limitations by eliminating the need to store intermediate activations during neural network training. The method uses vector-inverse-Jacobian products and submersive networks to reconstruct gradients in a forward sweep, enabling training of networks more than twice as deep under the same memory constraints.

AI · Bullish · Decrypt · Mar 25 · 7/10

🧠Google has developed a technique that significantly reduces memory requirements for running large language models as context windows expand, without compromising accuracy. This breakthrough addresses a major constraint in AI deployment, though the article suggests there are limitations to the approach.

AI · Bullish · arXiv – CS AI · Mar 16 · 7/10

🧠Researchers introduce LightMoE, a new framework that compresses Mixture-of-Experts language models by replacing redundant expert modules with parameter-efficient alternatives. The method achieves 30-50% compression rates while maintaining or improving performance, addressing the substantial memory demands that limit MoE model deployment.

AI · Bullish · arXiv – CS AI · Mar 11 · 7/10

🧠Researchers propose ARKV, a new framework for managing memory in large language models that reduces KV cache memory usage by 4x while preserving 97% of baseline accuracy. The adaptive system dynamically allocates precision levels to cached tokens based on attention patterns, enabling more efficient long-context inference without requiring model retraining.

AI · Bullish · arXiv – CS AI · Mar 11 · 7/10

🧠Researchers have developed Zipage, a new high-concurrency inference engine for large language models that uses Compressed PagedAttention to relieve memory bottlenecks. The system achieves 95% of the performance of full-KV inference engines while delivering over 2.1x speedup on mathematical reasoning tasks.

AI · Bullish · arXiv – CS AI · Mar 11 · 7/10

🧠Researchers have developed UltraEdit, a breakthrough method for efficiently updating large language models without retraining. The approach is 7x faster than previous methods while using 4x less memory, enabling continuous model updates with up to 2 million edits on consumer hardware.

AI · Bullish · arXiv – CS AI · Mar 6 · 7/10

🧠Researchers developed a memory management system for multi-agent AI systems on edge devices that reduces memory requirements by 4x through 4-bit quantization and eliminates redundant computation by persisting KV caches to disk. The solution reduces time-to-first-token by up to 136x while maintaining minimal impact on model quality across three major language model architectures.

🏢 Perplexity 🧠 Llama
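
The 4x figure in the summary above follows from storing 4 bits per value instead of 16. As a minimal sketch of that idea, the snippet below applies generic symmetric 4-bit quantization with a single scale per block and packs two codes into each byte. The function names and the choice of a per-block scale are assumptions made for illustration; the paper's actual quantization scheme is not described in the summary.

```python
def quantize_4bit(values):
    """Symmetric 4-bit quantization with one shared scale (illustrative only).

    Returns (packed_bytes, scale). Codes are clamped to the range -7..7.
    """
    max_abs = max(abs(v) for v in values) or 1.0  # avoid a zero scale
    scale = max_abs / 7.0
    codes = [max(-7, min(7, round(v / scale))) for v in values]
    if len(codes) % 2:
        codes.append(0)  # pad so codes pair up evenly
    # Two signed 4-bit codes per byte, stored as two's-complement nibbles.
    packed = bytes(((a & 0xF) << 4) | (b & 0xF)
                   for a, b in zip(codes[0::2], codes[1::2]))
    return packed, scale


def dequantize_4bit(packed, scale, count):
    """Unpack nibbles, restore their sign, and rescale to floats."""
    def signed(nibble):
        return nibble - 16 if nibble >= 8 else nibble

    out = []
    for byte in packed:
        out.append(signed(byte >> 4) * scale)
        out.append(signed(byte & 0xF) * scale)
    return out[:count]


values = [1.75, -0.5, 0.25, 0.0]
packed, scale = quantize_4bit(values)
restored = dequantize_4bit(packed, scale, len(values))

fp16_bytes = 2 * len(values)  # 16 bits per value
print(len(packed), "bytes packed vs", fp16_bytes, "bytes in fp16")
print(restored)
```

Four values occupy 2 bytes packed versus 8 bytes in fp16, which is the 4x ratio; a real implementation also has to store the scale, so the effective ratio is slightly lower for small blocks.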

AI · Bullish · arXiv – CS AI · Mar 4 · 6/10 · 3

🧠Researchers propose AlphaFree, a novel recommender system that eliminates traditional dependencies on user embeddings, raw IDs, and graph neural networks. The system achieves up to 40% performance improvements while reducing GPU memory usage by up to 69% through language representations and contrastive learning.

AI · Bullish · arXiv – CS AI · Mar 3 · 7/10 · 2

🧠ButterflyMoE introduces a breakthrough approach to reduce memory requirements for AI expert models by 150× through geometric parameterization instead of storing independent weight matrices. The method uses shared ternary prototypes with learned rotations to achieve sub-linear memory scaling, enabling deployment of multiple experts on edge devices.

AI · Bullish · arXiv – CS AI · Mar 3 · 7/10 · 4

🧠Researchers propose ROMA, a new hardware accelerator for running large language models on edge devices using QLoRA. The system uses ROM storage for quantized base models and SRAM for LoRA weights, achieving over 20,000 tokens/s generation speed without external memory.

AI · Neutral · arXiv – CS AI · Mar 3 · 7/10 · 4

🧠Researchers analyzed 20 Mixture-of-Experts (MoE) language models to study local routing consistency, finding a trade-off between routing consistency and local load balance. The study introduces new metrics to measure how well expert offloading strategies can optimize memory usage on resource-constrained devices while maintaining inference speed.

AI · Bullish · arXiv – CS AI · Mar 3 · 7/10 · 5

🧠Researchers provide mathematical proof that implicit models can achieve greater expressive power through increased test-time computation, explaining how these memory-efficient architectures can match larger explicit networks. The study validates this scaling property across image reconstruction, scientific computing, operations research, and LLM reasoning domains.

AI · Bullish · arXiv – CS AI · Mar 3 · 7/10 · 3

🧠Researchers propose TRIM-KV, a novel approach that learns token importance for memory-bounded LLM inference through lightweight retention gates, addressing the quadratic cost of self-attention and the ever-growing key-value cache. The method outperforms existing eviction baselines across multiple benchmarks and provides insights into LLM interpretability through learned retention scores.

AI · Bullish · arXiv – CS AI · Feb 27 · 7/10 · 8

🧠FlashOptim introduces memory optimization techniques that reduce AI training memory requirements by over 50% per parameter while maintaining model quality. The suite reduces AdamW memory usage from 16 bytes to 7 bytes per parameter through improved master weight splitting and 8-bit optimizer state quantization.
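
The per-parameter figures quoted above can be checked with simple arithmetic. The sketch below uses only the 16-byte and 7-byte numbers from the summary; the 7-billion-parameter model size is a hypothetical example, and the helper name is made up for illustration.

```python
def training_state_gib(num_params, bytes_per_param):
    """Memory for per-parameter training state, in GiB."""
    return num_params * bytes_per_param / 2**30


BASELINE_BYTES = 16  # AdamW bytes per parameter, as quoted in the summary
REDUCED_BYTES = 7    # bytes per parameter with FlashOptim, as quoted

saving = 1 - REDUCED_BYTES / BASELINE_BYTES
print(f"relative saving: {saving:.2%}")  # 56.25%

# Hypothetical model size, not taken from the paper.
num_params = 7_000_000_000
before = training_state_gib(num_params, BASELINE_BYTES)
after = training_state_gib(num_params, REDUCED_BYTES)
print(f"{before:.1f} GiB -> {after:.1f} GiB")
```

Going from 16 to 7 bytes is a 56.25% reduction, consistent with the "over 50%" claim.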

AI · Bullish · arXiv – CS AI · 2d ago · 6/10

🧠Researchers propose semantic segmentation-based input representations to address memory and learning challenges in reinforcement learning for 3D environments. In ViZDoom experiments, the approach reduces memory use by 66-98% while improving agent performance through enhanced visual information processing.

AI · Neutral · arXiv – CS AI · 3d ago · 6/10

🧠Researchers introduce BudgetMem, a runtime memory framework for LLM agents that uses query-aware routing to dynamically allocate computational resources across memory modules at three cost tiers. The system employs reinforcement learning to optimize the performance-cost trade-off, demonstrating improvements over static memory approaches across multiple benchmark datasets.

AI · Bullish · arXiv – CS AI · May 12 · 6/10

🧠Researchers introduce CERSA, a novel parameter-efficient fine-tuning method that uses singular value decomposition to reduce memory consumption while fine-tuning large language models. The technique outperforms existing methods like LoRA by capturing more rank characteristics of weight modifications while requiring substantially less memory for frozen weights.

AI · Neutral · arXiv – CS AI · May 11 · 6/10

🧠Researchers propose Shadow Mask Distillation to address the memory bottleneck created by KV cache compression during reinforcement learning post-training of large language models. The technique tackles the critical off-policy bias that emerges when compressed contexts are used during rollout generation while full contexts are used for parameter updates, a problem that amplifies instability in RL optimization.