#open-source News & Analysis

The #open-source tag covers 340 indexed articles, with 39 published in the last 30 days. Recent coverage has maintained a predominantly bullish tone at 69.2%, though sentiment has softened by 5.8 percentage points compared to the prior quarter. arXiv's computer science and AI sections dominate the source list, alongside specialized tech publishers.

Discussion frequently centers on Claude, Nvidia, and Hugging Face, often in connection with machine learning, large language models, research, and AI agents. The tag also intersects with cryptocurrency discussions, particularly around Bitcoin and Ethereum. Scan the articles below for the latest developments.

Sentiment · last 30d (39 articles) · -5.8pp bullish vs prior 90d

Top sources: arXiv – CS AI · 176, MarkTechPost · 11, The Register – AI · 4, Decrypt · 4, Bitcoin Magazine · 3

Most-discussed entities: Claude · 7, Nvidia · 7, Hugging Face · 7, Gemini · 6, Llama · 4

AI · Bullish · arXiv – CS AI · 2d ago · 7/10

🧠PassNet introduces the first large-scale ecosystem for using large language models to generate compiler passes, the structured graph transformations that optimize tensor compiler performance. The framework includes 18K computational graphs and 200 curated benchmark tasks, revealing that while LLMs lag frontier models by 37% on average, they achieve up to 3x speedups on individual workloads, indicating that consistency, rather than capability, is the limiting factor.

AI · Bullish · arXiv – CS AI · 2d ago · 7/10

🧠Researchers introduce AgentDoG 1.5, a lightweight AI safety framework designed to protect open-world agents like OpenClaw from emerging security risks. The framework uses only ~1k training samples to create efficient models (0.8B-8B parameters) that match closed-source alternatives while reducing deployment overhead by 100x, with all resources released openly.

🧠 GPT-5

AI · Bullish · arXiv – CS AI · 2d ago · 7/10

🧠Researchers have developed AutoformBot, a multi-agent AI system that automatically translates informal mathematics textbooks into machine-verified formal proofs in Lean 4. The team successfully formalized 26 open-access textbooks into a library called Atlas containing over 45,000 declarations and 500,000 lines of verified code, demonstrating that large-scale automated mathematics formalization is now economically viable.

AI · Bullish · arXiv – CS AI · 2d ago · 7/10

🧠KYA (Know Your Agents) is an open-source trust and governance framework for autonomous systems that enables verifiable authorization, policy compliance, and post-hoc auditability across multi-agent environments. The system demonstrates strong security performance, detecting 89% of adversarial attacks while maintaining sub-millisecond latency and supporting 15+ agent frameworks.

AI · Bullish · arXiv – CS AI · 2d ago · 7/10

🧠Researchers introduce SCOPE, a framework that enables Large Language Model agents to automatically evolve their prompts by learning from execution traces in dynamic environments. The system improves task success rates from 14.23% to 38.64% on benchmark tests, addressing a critical limitation in how LLM agents manage complex, changing contexts without human intervention.

AI · Bearish · Ars Technica – AI · 2d ago · 7/10

🧠A developer embedded a prompt injection attack into the jqwik library that instructed AI coding agents to delete application output, highlighting vulnerabilities in AI-assisted development tools. The incident reveals how malicious actors can compromise open-source projects to target AI systems, creating risks for developers relying on autonomous coding agents.

AI × Crypto · Bullish · U.Today · 2d ago · 7/10

🤖Vitalik Buterin argues that Europe cannot compete with the US and China in technology by adopting their centralized, proprietary approaches. Instead, he advocates for open-source development as Europe's distinctive competitive advantage to establish technological sovereignty and leadership.

AI · Bullish · arXiv – CS AI · 3d ago · 7/10

🧠Researchers propose a novel physics-based simulation strategy for training deep learning models to estimate myocardial strain from echocardiography videos, achieving superior accuracy to clinical standards. The method incorporates real speckle decorrelation patterns and iterative refinement, resulting in a publicly available dataset of 1,478 synthetic videos that enables more reliable regional strain detection for cardiac diagnosis.

Crypto · Bullish · crypto.news · 3d ago · 7/10

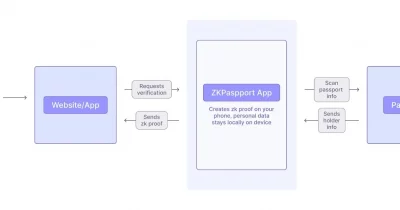

⛓️Aztec Labs has acquired ZKPassport, a privacy-focused passport-scanning application, while committing to maintain its open-source status. The acquisition preserves key technical components including the iOS NFC scanner and Noir circuits, indicating Aztec's investment in privacy infrastructure.

Crypto · Bullish · Crypto Briefing · 3d ago · 7/10

⛓️Aztec Labs has acquired Obsidion and committed to maintaining ZKPassport as open-source software. This move strengthens Aztec's privacy infrastructure capabilities and signals confidence in zero-knowledge proof technologies, potentially influencing how regulators and investors perceive privacy-focused blockchain solutions.

Crypto · Bullish · Crypto Briefing · 3d ago · 7/10

⛓️The Transparency Alliance has launched the Token Transparency Framework, an open-source standard for cryptocurrency disclosures backed by major investors including Blockworks and Pantera. This initiative aims to standardize how crypto projects disclose material information, addressing a critical gap in the industry's accountability infrastructure.

AI · Bullish · arXiv – CS AI · 4d ago · 7/10

🧠Search-E1 introduces a simplified self-evolution method for search-augmented reasoning agents that achieves competitive performance through vanilla GRPO and self-distillation, without external supervision or complex auxiliary systems. The approach reaches 0.440 average EM on QA benchmarks with Qwen2.5-3B, demonstrating that elaborate post-training machinery may be unnecessary for effective agent development.

AI · Bullish · arXiv – CS AI · 4d ago · 7/10

🧠PilotTTS demonstrates that competitive text-to-speech systems no longer require massive proprietary datasets or complex architectures. Using only 200K hours of openly processed data and a lightweight autoregressive model, the system achieves industry-leading performance on benchmark tests while supporting voice cloning, emotion synthesis, and multilingual capabilities.

AI · Bullish · arXiv – CS AI · 4d ago · 7/10

🧠Kandinsky 5.0 is a new family of open-source foundation models for image and video generation, featuring lightweight 2B-6B parameter variants for fast inference and a 19B professional model for superior quality. The release includes comprehensive data curation methods, architectural optimizations, and publicly available code designed to democratize access to state-of-the-art generative AI.

AI · Bearish · Ars Technica – AI · 4d ago · 7/10

🧠A critical vulnerability dubbed 'BadHost' was discovered in Starlette, a widely used open-source Python package with 325 million weekly downloads. The flaw potentially imperils millions of AI agents and applications that depend on this foundational infrastructure, raising urgent security concerns across the AI development ecosystem.

AI · Bullish · arXiv – CS AI · May 12 · 7/10

🧠FairHealth is an open-source Python library designed to address critical gaps in healthcare AI for low-resource settings, particularly in low-income countries. The toolkit integrates fairness auditing, privacy-preserving federated learning, explainability tools, and Global South datasets into a unified framework, making trustworthy AI more accessible to underserved healthcare systems.

AI · Bullish · arXiv – CS AI · May 12 · 7/10

🧠Researchers introduce Priming, a method that converts pre-trained Transformers into efficient Hybrid State-Space models through knowledge transfer rather than training from scratch. The technique recovers downstream performance using less than 0.5% of original pre-training tokens and enables the first large-scale comparison of SSM architectures, with Hybrid GKA 32B achieving 3.8-point reasoning improvements while delivering 2.3x faster decoding.

🧠 Llama

AI · Bullish · arXiv – CS AI · May 12 · 7/10

🧠Zyphra has released ZAYA1-VL-8B, a compact mixture-of-experts vision-language model that delivers performance competitive with larger systems while using significantly fewer active parameters. The model introduces vision-specific LoRA adapters and bidirectional attention mechanisms to enhance visual understanding, representing meaningful progress in efficient AI model design.

🏢 Hugging Face

AI × Crypto · Bullish · Crypto Briefing · May 11 · 7/10

🤖Tether has launched a developer grants program focused on funding on-device AI solutions and open-source payment tools, aiming to reduce dependence on centralized infrastructure. The initiative represents a strategic move to accelerate innovation in decentralized technologies and expand Tether's ecosystem beyond stablecoin services.

AI · Bullish · arXiv – CS AI · May 9 · 7/10

🧠X-Voice is a 0.4B multilingual voice cloning model that enables zero-shot cross-lingual speech synthesis across 30 languages using a two-stage training approach with IPA as a unified representation. The open-sourced system achieves performance comparable to billion-scale models while eliminating the need for transcribed audio prompts, advancing accessibility in multilingual AI-generated speech.

AI · Bullish · Decrypt · May 2 · 7/10

🧠Apple has regained relevance in the AI market following the release of OpenClaw, an open-source agent framework that transformed the Mac mini into a sought-after AI development platform. The unexpected demand surge has created supply constraints, demonstrating how specialized software can dramatically shift hardware market dynamics.

AI · Bullish · arXiv – CS AI · May 1 · 7/10

🧠Researchers have introduced VeriTaS, a dynamic benchmark for evaluating automated fact-checking systems across 25,000 real-world claims in 54 languages and multiple media formats. Unlike static benchmarks vulnerable to data leakage from LLM pretraining, VeriTaS updates quarterly with claims from 104 professional fact-checkers, maintaining relevance as foundation models evolve.

AI · Bullish · arXiv – CS AI · May 1 · 7/10

🧠Researchers introduce RoundPipe, a novel pipeline scheduling algorithm that enables efficient fine-tuning of large language models on consumer-grade GPUs by eliminating the weight binding constraint that causes computational bottlenecks. The system achieves 1.48-2.16x speedups over existing approaches and enables fine-tuning of models with up to 235 billion parameters on standard hardware.

Crypto · Neutral · CoinTelegraph – Regulation · Apr 21 · 7/10

⛓️Coin Center argues that cryptocurrency software code qualifies as protected free speech under the First Amendment, addressing developer concerns about potential criminal liability following recent high-profile convictions. This legal position reflects growing tensions between regulatory enforcement and developers' constitutional rights to publish open-source software.

AI · Bullish · Hugging Face Blog · Apr 21 · 7/10

🧠The article examines how open-source principles and transparency in AI development strengthen cybersecurity defenses against evolving threats. Greater openness in AI systems enables faster vulnerability detection, broader community scrutiny, and improved resilience compared to closed-source alternatives.