16,630 AI articles curated from 50+ sources with AI-powered sentiment analysis, importance scoring, and key takeaways.

AI · Bearish · TechCrunch – AI · 1d ago · 7/10

🧠Box founder Aaron Levie warns of "AI psychosis" among CEOs who make workforce reduction decisions without understanding the jobs they're eliminating. Tech layoffs in 2026 are already approaching 2025's total, with companies like ClickUp cutting 22% of staff to deploy AI agents, highlighting a disconnect between executive AI enthusiasm and operational reality.

AI · Bullish · Blockonomi · 1d ago · 7/10

🧠Dell Technologies' stock surged 38% after the company posted record quarterly results driven by 757% growth in AI server revenue. The performance reflects strong enterprise demand for AI infrastructure, with HPE and NetApp also benefiting from the broader momentum in AI-focused hardware sales.

AI · Bullish · Crypto Briefing · 1d ago · 7/10

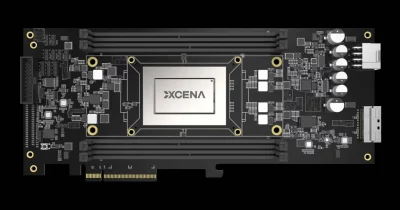

🧠XCENA, an AI chip startup, raised $135M in Series B funding, achieving a $570M valuation. The company's memory-centric AI chip technology aims to improve data processing efficiency and could influence the competitive landscape of AI hardware development.

AI · Bullish · TechCrunch – AI · 1d ago · 7/10

🧠South Korean chip startup XCENA raised $135M in funding based on the thesis that memory bandwidth, rather than raw compute power, represents the primary constraint limiting AI model performance and efficiency. This investment signals growing industry recognition that current AI infrastructure bottlenecks may differ from conventional wisdom around processing capacity.

AI · Bullish · Blockonomi · 1d ago · 7/10

🧠Dell Technologies' stock surged 39% after Q1 earnings showed explosive growth in AI server demand: revenue reached $43.8B (up 88% YoY) and AI server sales rose 757% to $16.1B. The company raised its full-year revenue outlook to $167B, signaling sustained momentum in enterprise AI infrastructure spending.

AI · Bearish · Fortune Crypto · 1d ago · 7/10

🧠American workers have reached a 39-year high in job satisfaction, but the Conference Board warns that AI adoption could reverse this trend for approximately half the workforce. The article highlights a critical business challenge: companies must treat AI training and access as essential workforce development rather than optional perks to prevent widespread job dissatisfaction.

AI · Bearish · Blockonomi · 1d ago · 7/10

🧠CZ warns that despite AI's exponential growth, most AI companies will eventually fail because of market oversaturation. Major tech firms including Microsoft, Google, Meta, and Oracle reportedly show negative returns on AI investment even under favorable scenarios, drawing parallels to the dot-com bubble, when similar saturation led to widespread company failures.

AI · Bearish · Crypto Briefing · 1d ago · 7/10

🧠Corporate America is pulling back on AI spending as implementation costs exceed initial projections and return on investment remains limited. This strategic retrenchment signals a maturation phase in enterprise AI adoption, with companies reassessing their technology budgets and prioritizing proven use cases over experimental deployments.

AI · Bullish · Crypto Briefing · 1d ago · 7/10

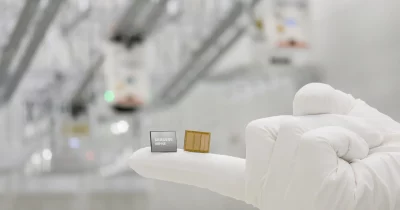

🧠Samsung Electronics has accelerated its HBM4E chip production timeline, shipping sample units ahead of schedule. The advancement positions Samsung competitively in the high-bandwidth memory market critical for AI infrastructure, with the news triggering a stock price surge.

AI · Bullish · arXiv – CS AI · 1d ago · 7/10

🧠Researchers propose Cross-Model Entropy (CME), a label-free reward signal for reinforcement learning that uses a separate verifier model's likelihood assessment instead of human labels or self-referential signals. The method successfully extends RL post-training to open-ended instruction following across multiple model families, achieving win rates of 52.5-71.4% in head-to-head comparisons.

🧠 Llama

AI · Bullish · arXiv – CS AI · 1d ago · 7/10

🧠Researchers introduce LoRe, a training-free optimization method that dynamically routes computational resources to high-priority interactions in iterative graph solvers, achieving 8× speedup and 12× memory reduction on combinatorial optimization problems while maintaining solution quality.

AI · Neutral · arXiv – CS AI · 1d ago · 7/10

🧠Researchers identify source-dependence as a critical failure mode in retrieval-augmented generation (RAG) systems, where multi-source medical AI systems provide different answers to identical questions based on which institutional source is retrieved. The study introduces TransplantQA, HERO-QA, and evaluation frameworks to audit this phenomenon, revealing that source disagreement is far more prevalent than previously measured.

AI · Bullish · arXiv – CS AI · 1d ago · 7/10

🧠Alibaba's Qwen team released Qwen-VLA, a unified foundation model that combines vision, language, and action capabilities for robotics across multiple tasks and robot types. The model demonstrates strong performance on manipulation, navigation, and trajectory prediction benchmarks while generalizing well to out-of-distribution scenarios and real-world robot deployments.

AI · Bullish · arXiv – CS AI · 1d ago · 7/10

🧠Researchers have developed a method to improve how large language models verify factual claims by framing fact-checking as a true/false reading comprehension task with explicit test-taking strategies. The approach reduces token usage by over 80% while maintaining competitive performance, and enables smaller language models to perform similarly to larger ones through fine-tuning and self-revision mechanisms.

AI · Neutral · arXiv – CS AI · 1d ago · 7/10

🧠FormInv introduces a measurement protocol that audits mathematical reasoning benchmarks for semantic consistency, revealing that current evaluation methods mask significant ranking volatility across AI models. The study found 3.1% semantically incorrect paraphrases in MathCheck that altered model rankings and discovered that models achieving similar accuracy scores (86-96%) exhibit drastically different consistency rates (50-82%) when tested against semantically equivalent problem restatements.

🧠 GPT-4 · 🧠 Claude · 🧠 Haiku

AI · Bullish · arXiv – CS AI · 1d ago · 7/10

🧠Researchers propose DenseSteer, a training-free framework that improves mathematical reasoning in small language models (≤3B parameters) by steering internal representations toward denser reasoning patterns. The method demonstrates that smaller models can match larger ones' performance by executing fewer, more information-rich reasoning steps rather than verbose chain-of-thought processes.

AI · Bearish · arXiv – CS AI · 1d ago · 7/10

🧠Researchers introduce GEO-Bench, a standardized benchmark for evaluating ranking manipulation attacks against large language models used in generative search. The study compares black-box and white-box adversarial attacks, revealing that simpler content-rewriting methods can match gradient-based approaches while remaining more difficult to detect.

🏢 Perplexity · 🧠 Llama

AI · Bullish · arXiv – CS AI · 1d ago · 7/10

🧠Researchers propose Proof-Constrained Action (ePCA), a formal verification framework that requires AI agents to express intentions as mathematical constraints before executing actions, eliminating reliance on semantic guardrails. The approach achieves zero attack success rates in testing and addresses critical security gaps as LLMs evolve from text generators into autonomous agents with real-world execution capabilities.

AI · Bullish · arXiv – CS AI · 1d ago · 7/10

🧠Researchers propose EELMA, an algorithm that uses information-theoretic empowerment to evaluate language model agents at scale without manual benchmarking. The method measures an agent's ability to influence future states through its actions and demonstrates strong correlation with task performance across text-based, web, and tool-use environments.

AI · Bullish · arXiv – CS AI · 1d ago · 7/10

🧠Researchers introduce Meta-Team, an experience-driven framework that enables multi-agent LLM systems to collaboratively self-evolve by learning from their own execution failures. The system coordinates post-task communication among agents to identify and implement improvements across individual behaviors, inter-agent coordination, and team-level organization, demonstrating consistent performance gains across six benchmarks.

AI · Bullish · arXiv – CS AI · 1d ago · 7/10

🧠Researchers introduce OccamToken, a training-free method for compressing vision-language models by pruning unnecessary visual tokens while maintaining accuracy. The approach reduces visual token sequences by 98.6% (from 2,880 to 40 tokens) on LLaVA-NeXT while preserving over 93% accuracy, addressing computational bottlenecks in VLM inference.

AI · Neutral · arXiv – CS AI · 1d ago · 7/10

🧠Researchers introduce OpenClawBench, a large-scale dataset of 31,264 annotated agent execution trajectories that reveals a significant gap between task success and process reliability. The study finds that 9.3% of oracle-passing executions contain process-side anomalies like unresolved ambiguities and unsafe operations, demonstrating that success metrics alone mask critical failure modes in AI agent systems.

AI · Bearish · arXiv – CS AI · 1d ago · 7/10

🧠A new study reveals that human curation efforts to align AI models can backfire in multi-model ecosystems where models train on outputs from other models. While curation improves alignment in isolated systems, cross-model interactions can dampen or reverse these benefits, potentially degrading long-term alignment across interconnected AI systems.

AI · Bullish · arXiv – CS AI · 1d ago · 7/10

🧠Researchers propose A2X, an LLM-native service discovery system that organizes thousands of callable services into hierarchical taxonomies to work around the context-window limits facing AI agents. The approach improves retrieval accuracy by more than 20 points while cutting token consumption to one-ninth of that used by baseline methods, enabling scalable orchestration of distributed services.

AI · Bullish · arXiv – CS AI · 1d ago · 7/10

🧠Researchers introduce COMET, a PLS-SVD framework that analyzes the modality gap in Contrastive Language-Audio Pretraining (CLAP) models by decomposing embeddings into interpretable concepts. The study reveals that only a small subset of shared conceptual axes drives similarity computation, and proposes a training-free spectral truncation method that improves zero-shot audio captioning performance while reducing dimensionality.