AI · Bullish · Crypto Briefing · 2d ago · 7/10

🧠MIT researchers have developed MeMo, a technique that improves large language model performance by 26% without requiring model retraining. This approach reduces computational costs and enables efficient adaptation across multiple domains, addressing a major pain point in AI deployment.

AI · Bullish · arXiv – CS AI · 2d ago · 7/10

🧠Researchers introduce SALE (Strategy Auctions for Workload Efficiency), a framework that coordinates multiple small language model agents through a bidding mechanism to match or exceed the performance of large models while reducing costs by 35% and cutting reliance on the largest agent by 52%. The approach demonstrates that smaller AI agents can be effectively scaled for complex tasks through intelligent task allocation rather than relying solely on larger models.

AI · Neutral · arXiv – CS AI · 2d ago · 7/10

🧠Researchers extend the bounded attention prefix oracle (BAPO) model to establish theoretical lower bounds on chain-of-thought reasoning tokens required by LLMs, proving that canonical tasks require Ω(n) tokens as input size n grows. Experiments with frontier models confirm linear scaling behavior, revealing fundamental computational bottlenecks in inference-time scaling.

AI · Bullish · arXiv – CS AI · 2d ago · 7/10

🧠Researchers introduce Entropy-Cut Metropolis-Hastings, an algorithm that improves sampling from power distributions in language models by identifying key decision points using entropy analysis rather than random sampling positions. The method achieves stronger reasoning performance across multiple benchmarks without requiring additional training or reinforcement learning.
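
For readers unfamiliar with the underlying idea, here is a minimal sketch of plain Metropolis-Hastings targeting a power distribution π(x) ∝ p(x)^α over a toy categorical distribution. It is not the Entropy-Cut method from the paper: the entropy-based choice of positions is omitted, and the function names `mh_power_sample` and `power_distribution` are hypothetical. Proposals are drawn from the base distribution p itself, so the acceptance ratio simplifies to (p(x')/p(x))^(α-1).

```python
import random
from collections import Counter


def power_distribution(p, alpha):
    """Exact target: p(x)**alpha, renormalized (only feasible for toy cases)."""
    z = sum(v ** alpha for v in p.values())
    return {x: v ** alpha / z for x, v in p.items()}


def mh_power_sample(p, alpha, steps, seed=0):
    """Independence Metropolis-Hastings targeting pi(x) proportional to p(x)**alpha.

    Assumption for illustration: p is a small dict of token -> probability.
    Proposals are drawn from p, so the acceptance probability is
    min(1, (p(x') / p(x)) ** (alpha - 1)).
    """
    rng = random.Random(seed)
    items = list(p)
    weights = [p[x] for x in items]
    current = rng.choices(items, weights)[0]
    samples = []
    for _ in range(steps):
        proposal = rng.choices(items, weights)[0]
        accept = min(1.0, (p[proposal] / p[current]) ** (alpha - 1))
        if rng.random() < accept:
            current = proposal
        samples.append(current)
    return samples


if __name__ == "__main__":
    base = {"a": 0.5, "b": 0.3, "c": 0.2}
    draws = mh_power_sample(base, alpha=2.0, steps=20000, seed=1)
    freq = Counter(draws)
    print({x: round(freq[x] / len(draws), 3) for x in base})
    print({x: round(v, 3) for x, v in power_distribution(base, 2.0).items()})
```

With α > 1 the target sharpens toward high-probability outcomes, which is why sampling from a power distribution can favor more confident continuations without any additional training.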

AI · Bullish · arXiv – CS AI · 2d ago · 7/10

🧠Researchers introduce COLAGUARD, a safety guardrail system for large language models that embeds multi-step reasoning into latent space, achieving safety performance comparable to explicit reasoning models while delivering 12.9x faster inference and a 22.4x reduction in token usage. The approach addresses a critical bottleneck in deploying AI safety systems at scale by eliminating the computational overhead of traditional reasoning-based content moderation.

🧠 Llama

AI · Bullish · arXiv – CS AI · 3d ago · 7/10

🧠Researchers introduce MCTS-Judge, a test-time scaling framework that enhances LLM-based code evaluation by applying Monte Carlo Tree Search to improve reasoning accuracy. The system achieves 80% accuracy on code correctness tasks, surpassing OpenAI's o1 models while using 3x fewer tokens, and addresses a critical limitation in using LLMs as reliable judges for complex technical problems.

AI × Crypto · Bullish · Crypto Briefing · 4d ago · 7/10

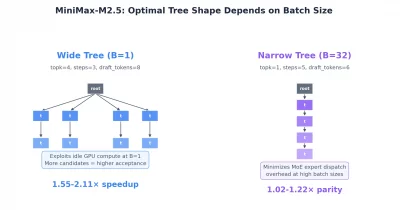

🤖MiniMax has announced its M3 model, which decodes 15.6x faster than previous versions, potentially reducing latency and operational costs for decentralized AI applications. This advancement could improve the scalability and efficiency of AI infrastructure, making decentralized AI systems more practical and cost-effective for broader adoption.

AI · Bullish · arXiv – CS AI · 4d ago · 7/10

🧠Researchers introduce HiSpec, a hierarchical speculative decoding framework that accelerates large language model inference by using early-exit models for intermediate verification, achieving up to 2.01× throughput improvements without sacrificing accuracy.
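
As background, the accept-or-resample step at the core of standard speculative sampling can be sketched as below. This is the generic single-token rule, not HiSpec's hierarchical early-exit verification, and the function name `speculative_step` and the toy distributions are assumptions made for illustration. A draft token is drawn from the cheap model's distribution q and accepted with probability min(1, p/q) under the target distribution p; on rejection, a token is resampled from the normalized residual max(0, p - q), so the emitted token is still distributed according to p.

```python
import random
from collections import Counter


def speculative_step(p, q, rng):
    """One token of standard speculative sampling.

    p: target-model distribution (dict token -> probability)
    q: draft-model distribution over the same tokens
    Returns (token, accepted_flag).
    """
    tokens = list(p)
    # Draft a token from the cheap model.
    draft = rng.choices(tokens, [q[t] for t in tokens])[0]
    # Accept with probability min(1, p(draft) / q(draft)).
    if rng.random() < min(1.0, p[draft] / q[draft]):
        return draft, True
    # On rejection, resample from the residual max(0, p - q);
    # rng.choices normalizes the weights internally.
    residual = [max(0.0, p[t] - q[t]) for t in tokens]
    return rng.choices(tokens, residual)[0], False


if __name__ == "__main__":
    target = {"a": 0.6, "b": 0.3, "c": 0.1}
    draft_model = {"a": 0.2, "b": 0.5, "c": 0.3}
    rng = random.Random(0)
    out = [speculative_step(target, draft_model, rng) for _ in range(20000)]
    freq = Counter(tok for tok, _ in out)
    print({t: round(freq[t] / len(out), 3) for t in target})
    print("acceptance rate:", sum(ok for _, ok in out) / len(out))
```

The acceptance rate depends on how closely the draft distribution matches the target, which is why speedups from speculative decoding vary with the quality of the draft model.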

AI · Bullish · Hugging Face Blog · 4d ago · 7/10

🧠Hugging Face's TRL library introduces Delta Weight Sync, a novel technique enabling efficient distribution of trillion-parameter models across distributed systems using hub bucket storage. This innovation addresses a critical bottleneck in large-scale AI model training and deployment by reducing synchronization overhead.

AI · Bullish · arXiv – CS AI · May 12 · 7/10

🧠RewardHarness introduces a self-evolving agentic framework that dramatically improves reward modeling for image-editing evaluation using only 0.05% of typical training data. By iteratively refining tools and skills from minimal examples rather than large-scale annotations, the system achieves 47.4% accuracy on benchmarks, outperforming GPT-5 and enabling more efficient AI alignment.

🧠 GPT-5

AI · Bullish · arXiv – CS AI · May 12 · 7/10

🧠Researchers propose DeMem, a decision-centric memory framework that optimizes agent memory allocation based on preserving distinctions needed for sound decision-making rather than descriptive accuracy. Using rate-distortion theory, the approach identifies what information can be safely forgotten under memory constraints and demonstrates performance gains on long-horizon language agent tasks.

AI · Bearish · arXiv – CS AI · May 12 · 7/10

🧠A new position paper argues that enterprises should stop treating large language models as monolithic solutions for all tasks and instead use them primarily for structured data extraction within modular architectures. The paper contends that LLMs have inherent capacity limits for enterprise knowledge needs and proposes delegating computation and storage to specialized components such as knowledge bases and symbolic systems for better reliability and cost efficiency.

AI · Bullish · Decrypt · May 11 · 7/10

🧠Baidu's ERNIE 5.1 has reached the top of Chinese AI leaderboards while requiring 94% less computational resources to build than competing models. This breakthrough in parameter efficiency demonstrates that raw scale and spending aren't prerequisites for state-of-the-art AI performance, potentially reshaping how organizations approach model development and deployment.

AI · Bullish · arXiv – CS AI · May 11 · 7/10

🧠Researchers introduce Weblica, a framework for creating reproducible and scalable web environments to train visual web agents at scale. The system uses HTTP-level caching and LLM-based synthesis to generate thousands of diverse training environments, with the resulting Weblica-8B model achieving competitive performance against larger API-based models on web navigation benchmarks.

AI · Bullish · arXiv – CS AI · May 11 · 7/10

🧠FlashMol generates high-quality 3D molecular conformations for computational drug discovery in just 4 steps, compared with the hundreds required by traditional diffusion models. The technique achieves a 250x acceleration in sampling speed while matching or exceeding the quality of slower teacher models, potentially transforming the economics of large-scale in silico screening.

AI · Bullish · arXiv – CS AI · May 11 · 7/10

🧠Researchers have developed CASCADE, a novel speculative decoding technique that accelerates autoregressive image generation by up to 3.6x through identifying and exploiting redundancies in neural network representations. The method addresses a critical bottleneck in image synthesis by reducing draft token rejection rates without requiring model retraining, advancing the efficiency of text-to-image AI systems.

AI · Bullish · arXiv – CS AI · May 9 · 7/10

🧠X-Voice is a 0.4B multilingual voice cloning model that enables zero-shot cross-lingual speech synthesis across 30 languages using a two-stage training approach with IPA as a unified representation. The open-sourced system achieves performance comparable to billion-scale models while eliminating the need for transcribed audio prompts, advancing accessibility in multilingual AI-generated speech.

AI · Bullish · arXiv – CS AI · May 9 · 7/10

🧠Researchers introduce LOVER, an unsupervised verifier that uses logical constraints to improve LLM reasoning without requiring expensive labeled datasets. The method achieves performance comparable to supervised approaches by enforcing logical consistency rules across multiple reasoning paths.

AI · Neutral · TechCrunch – AI · May 8 · 7/10

🧠Cloudflare announced its first major layoff, affecting 1,100 employees (approximately 8% of its workforce), citing AI-driven efficiency gains that reduce the need for support roles. Despite the workforce reduction, the company achieved record revenue, illustrating how technological advancement can enable growth without proportional headcount increases.

AI · Bullish · arXiv – CS AI · May 4 · 7/10

🧠Researchers introduce A11y-Compressor, a framework that optimizes how AI agents interpret graphical user interfaces by transforming accessibility trees into more efficient representations. The approach reduces input tokens by 78% while simultaneously improving task success rates by 5.1 percentage points, addressing a critical bottleneck in GUI automation systems.

AI · Bullish · arXiv – CS AI · May 1 · 7/10

🧠Researchers introduce NeocorRAG, a new framework that optimizes retrieval quality in Retrieval-Augmented Generation (RAG) systems by using Evidence Chains, achieving state-of-the-art performance while reducing token consumption by 80% compared to comparable methods. The framework addresses a critical gap where improvements in retrieval metrics don't consistently translate to better reasoning accuracy.

AI · Bullish · arXiv – CS AI · Apr 20 · 7/10

🧠Researchers propose a cost-aware model orchestration method that improves how large language models select and coordinate multiple AI tools for complex tasks. By incorporating quantitative performance metrics alongside qualitative descriptions, the approach achieves accuracy gains of up to 11.92% and energy efficiency improvements of 54%, and reduces model selection latency from 4.51 seconds to 7.2 milliseconds.

AI · Bullish · arXiv – CS AI · Apr 15 · 7/10

🧠SpecBranch introduces a speculative decoding framework that uses branch parallelism to accelerate large language model inference, achieving 1.8x to 4.5x speedups over standard autoregressive decoding. The technique addresses serialization bottlenecks in existing speculative decoding methods by implementing parallel drafting branches with adaptive token lengths and rollback-aware orchestration.

AI · Bullish · arXiv – CS AI · Apr 14 · 7/10

🧠Researchers introduce AtlasKV, a parametric knowledge integration method that enables large language models to leverage billion-scale knowledge graphs while consuming less than 20GB of VRAM. Unlike traditional retrieval-augmented generation (RAG) approaches, AtlasKV integrates knowledge directly into LLM parameters without requiring external retrievers or extended context windows, reducing inference latency and computational overhead.

AI · Neutral · arXiv – CS AI · Apr 14 · 7/10

🧠Researchers challenge the assumption that longer reasoning chains always improve LLM performance, discovering that extended test-time compute leads to diminishing returns and 'overthinking' where models abandon correct answers. The study demonstrates that optimal compute allocation varies by problem difficulty, enabling significant efficiency gains without sacrificing accuracy.