#ai-governance News & Analysis

Coverage of #ai-governance remains dominated by academic research, with arXiv's computer science track supplying most indexed sources. Over the past month, 76 articles have been published under the tag. Sentiment is mostly neutral (59.2%), with bearish assessments at 27.6% and bullish takes at 13.2%. Anthropic and OpenAI are the entities mentioned most often alongside governance topics.

Sentiment has remained stable compared to the previous quarter. Scan the articles below to review recent developments in this space.

Sentiment · last 30 days (76 articles)

Top sources: arXiv – CS AI (88) · Fortune Crypto (13) · AI News (9) · TechCrunch – AI (7) · crypto.news (5)

Most-discussed entities: Anthropic (16) · OpenAI (16) · Claude (5) · GPT-5 (2) · Opus (2)

AI · Neutral · Crypto Briefing · May 10 · 7/10

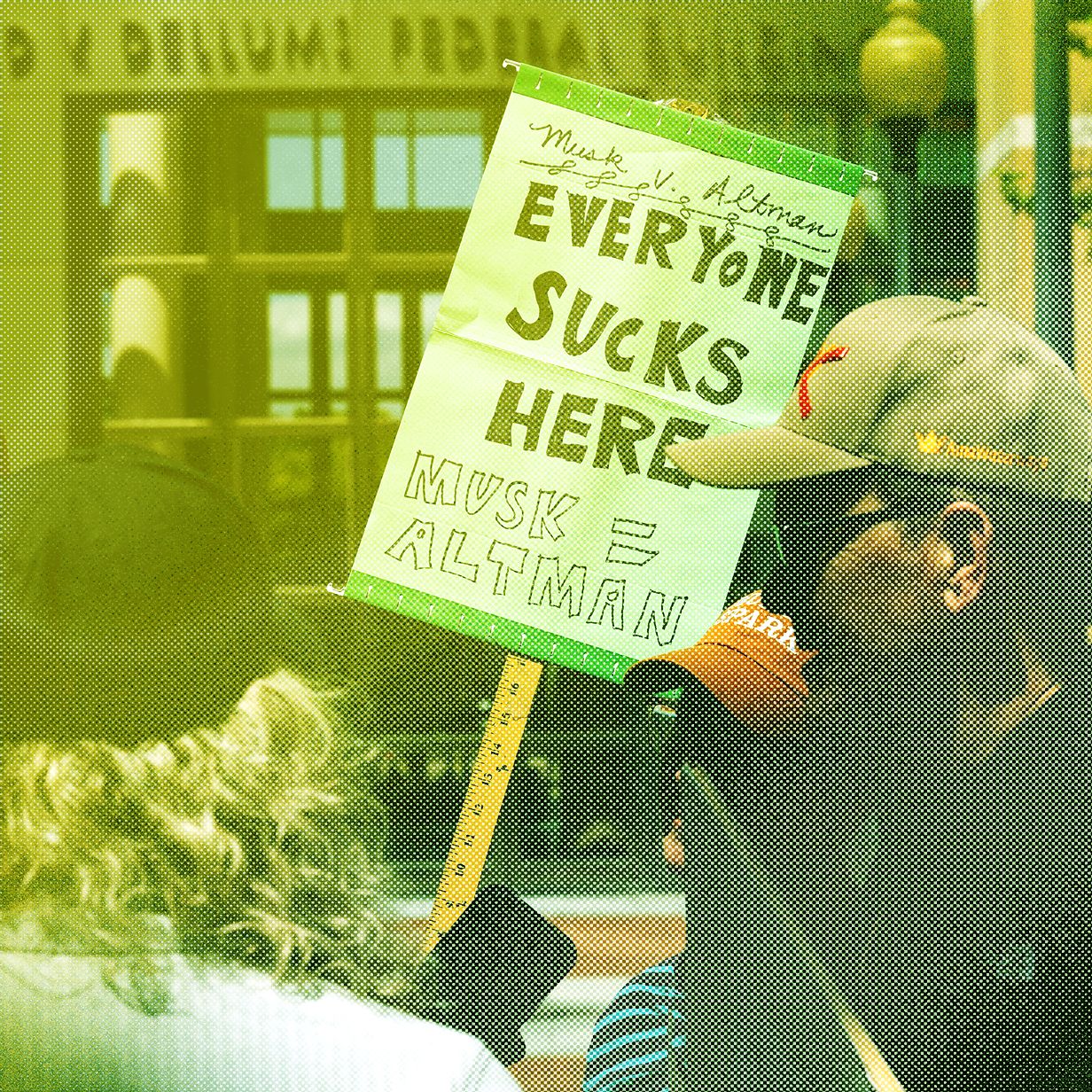

🧠Elon Musk and OpenAI executives are facing intense questioning during a high-stakes trial that examines ethical and strategic tensions in AI development. The proceedings have implications for future governance standards and inter-company collaboration practices within the technology sector.

🏢 OpenAI

AI · Bearish · crypto.news · May 9 · 7/10

🧠Connecticut SB5, passed by both legislative chambers on May 1, is one of the most comprehensive state-level AI regulations in the US and is now heading to the governor's desk. The bill has alarmed major US AI companies because of its broad scope and its stringent oversight and accountability requirements for AI systems.

AI · Neutral · arXiv – CS AI · May 9 · 7/10

🧠Researchers propose a new framework for understanding sycophancy in large language models, defining it as a failure where models prioritize social alignment with users over epistemic integrity and accurate reasoning. The three-condition framework identifies sycophancy when user cues trigger alignment behavior that compromises independent judgment, with implications for how AI safety researchers should evaluate and mitigate this failure mode.

AI × Crypto · Neutral · arXiv – CS AI · May 9 · 7/10

🤖Researchers propose adapting centuries-old human anti-collusion mechanisms to multi-agent AI systems, which increasingly demonstrate coordinated behavior similar to market cartels. The paper develops a taxonomy of five human strategies—sanctions, leniency, monitoring, market design, and governance—and maps them to AI interventions, while identifying critical implementation challenges like agent attribution and identity fluidity.

AI · Bearish · arXiv – CS AI · May 9 · 7/10

🧠Researchers demonstrate that LLM-based safety judges for AI agents fail a critical reliability test: they produce inconsistent verdicts based on how evaluation policies are worded rather than what agents actually do. The study reveals that up to 9.1% of safety judgments flip when policies are rewritten with identical meaning, undermining the trustworthiness of current AI safety benchmarks.
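To make the reported metric concrete, here is a minimal sketch of how a verdict flip rate could be computed, assuming each agent trajectory is judged once under the original policy wording and once under a meaning-preserving rewrite. `JudgedCase`, `flip_rate`, and `max_flip_rate` are hypothetical names, not the study's code, and its exact definition (for example, how the "up to" figure is aggregated across judges or rewrites) may differ.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JudgedCase:
    """One agent trajectory judged under two wordings of the same policy (hypothetical schema)."""
    case_id: str
    verdict_original: bool     # True = judged safe under the original policy text
    verdict_paraphrased: bool  # True = judged safe under a meaning-preserving rewrite


def flip_rate(cases: list[JudgedCase]) -> float:
    """Fraction of cases whose verdict changes when only the policy wording changes."""
    if not cases:
        raise ValueError("need at least one judged case")
    flips = sum(c.verdict_original != c.verdict_paraphrased for c in cases)
    return flips / len(cases)


def max_flip_rate(runs: dict[str, list[JudgedCase]]) -> tuple[str, float]:
    """Worst-case flip rate across several judge or rewrite configurations."""
    worst = max(runs, key=lambda name: flip_rate(runs[name]))
    return worst, flip_rate(runs[worst])
```

Because the agent's behavior is held fixed within each pair, any nonzero flip rate in this setup is attributable to wording alone, which is the reliability failure the study describes.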

AI · Bullish · arXiv – CS AI · May 9 · 7/10

🧠SafeHarbor is a new framework that enhances Large Language Model agent safety by using hierarchical memory and context-aware defense rules to prevent harmful tool use while maintaining utility on benign tasks. The system achieves 93%+ refusal rates against malicious requests while preserving 63.6% performance on legitimate tasks, addressing a critical trade-off in AI safety.

🧠 GPT-4

AI · Bullish · Crypto Briefing · May 9 · 7/10

🧠Jan Leike has assumed leadership of Anthropic's alignment science team, signaling the company's commitment to advancing AI safety research. This move could establish new industry standards for AI alignment and influence how the broader tech sector approaches safety-critical AI development.

🏢 Anthropic

AI · Neutral · MIT Technology Review · May 8 · 7/10

🧠In week two of Elon Musk's lawsuit against OpenAI, the company mounted a defense while evidence emerged that Musk allegedly attempted to recruit Sam Altman away from the organization. The trial centers on Musk's claims that he was deceived into donating $38 million based on false promises about maintaining OpenAI's nonprofit structure.

🏢 OpenAI

AI · Bearish · Crypto Briefing · May 8 · 7/10

🧠The Trump administration is preparing an AI security order targeting US government agencies, which may introduce stricter regulatory oversight and enhanced disclosure requirements for AI companies. This executive action could reshape how AI systems are implemented and monitored within federal operations.

AI · Neutral · Fortune Crypto · May 7 · 7/10

🧠Yale's Chief Executive Leadership Institute, examining agentic AI across 13 industries, found that where the technology is deployed is a more critical risk factor than whether to deploy it at all. The research suggests that the placement of autonomous AI systems, rather than adoption itself, determines whether they become valuable tools or produce uncontrollable outcomes.

AI · Neutral · arXiv – CS AI · May 7 · 6/10

🧠Researchers from MIT have released the 2025 AI Agent Index, which documents 30 state-of-the-art AI agents and catalogs their technical features, capabilities, and safety mechanisms. The index reveals significant transparency gaps among AI developers, particularly around safety evaluations and societal impact assessments, suggesting that public accountability is lagging behind the rapid deployment of AI agents.

AI · Neutral · arXiv – CS AI · May 7 · 7/10

🧠Researchers introduce Security Cube, a comprehensive evaluation framework for assessing Large Language Model robustness against jailbreak attacks. The study systematically catalogs existing attack and defense methods while establishing benchmarks across 13 attack vectors and 5 defense mechanisms, revealing critical gaps in current LLM safety practices.

AI · Neutral · arXiv – CS AI · May 7 · 7/10

🧠Researchers developed and validated the first FMECA (Failure Mode, Effects, and Criticality Analysis) framework to systematically assess patient safety risks in clinical summaries generated by large language models. Testing with GPT-OSS 120B on real hospital discharge summaries demonstrated moderate-to-substantial inter-rater agreement and identified 14 distinct failure modes, establishing a reproducible methodology for evaluating AI-generated clinical content safety.

AI · Bearish · Decrypt – AI · May 4 · 7/10

🧠A developer has created OpenMythos, an open-source project attempting to reverse-engineer Anthropic's unreleased Claude Mythos model, which the company has withheld over concerns about its cyber capabilities. The effort reflects a broader trend of researchers probing the safety boundaries of advanced AI systems through architectural reconstruction and public code releases.

🏢 Anthropic · 🧠 Claude

AI · Neutral · MIT Technology Review · May 4 · 7/10

🧠Elon Musk's lawsuit against OpenAI began in Oakland court, with Musk alleging that OpenAI misused his early investments and departed from its nonprofit mission. The trial centers on fundamental questions about AI governance and corporate structure in the industry's most prominent firms.

🏢 OpenAI

AI · Bearish · arXiv – CS AI · May 4 · 7/10

🧠Researchers conducted a security assessment of a patient-facing medical RAG chatbot and discovered critical vulnerabilities that exposed, without any authentication, its system prompts, API endpoints, backend configurations, and 1,000 unencrypted patient conversations. The findings show that standard browser inspection tools are enough to extract sensitive data, contradicting the platform's privacy assurances and raising urgent governance concerns for AI deployment in healthcare.

🧠 Claude · 🧠 Opus

AI · Bearish · Fortune Crypto · May 2 · 7/10

🧠Yale governance experts argue that Anthropic's advanced Claude AI model exposes critical vulnerabilities in how corporations deploy and oversee powerful AI systems. The analysis suggests that without structural governance reforms, enterprise AI adoption could create irreversible risks across organizations.

🏢 Anthropic · 🧠 Claude

AI · Neutral · TechCrunch – AI · May 1 · 7/10

🧠Elon Musk testified for three days in his lawsuit against OpenAI, alleging that the company betrayed its nonprofit mission by converting to a for-profit model under Sam Altman's leadership. The lawsuit features emerging evidence including emails, texts, and tweets that will shape the ongoing legal battle over OpenAI's structural transformation.

🏢 OpenAI

AI × Crypto · Neutral · CoinDesk · May 1 · 7/10

🤖An AI agent named Manfred has established its own company with crypto wallet access and hiring credentials, positioning itself to begin cryptocurrency trading by the end of May. This development represents a significant milestone for autonomous AI systems operating within financial markets.

AI · Bearish · arXiv – CS AI · May 1 · 7/10

🧠Researchers present a formal framework proving that AI governance systems structurally fail when expressiveness boundaries (what AI can do) and governance boundaries (what's regulated) are defined independently, creating inevitable gaps. The paper proposes 'coterminous governance'—aligning these boundaries through architectural separation of computation from effects—as the only viable solution, with proofs mechanized in Coq.

AI · Neutral · arXiv – CS AI · May 1 · 7/10

🧠Researchers introduced Aymara AI, a programmatic platform for safety evaluation of large language models, and used it to test 20 commercially available LLMs across 10 safety domains. The study revealed significant performance disparities, with safety scores ranging from 52.4% to 86.2%, and exposed critical vulnerabilities in privacy and impersonation protection.

AI · Neutral · Wired – AI · Apr 30 · 7/10

🧠Elon Musk and Sam Altman's legal dispute extends far beyond personal rivalry, potentially reshaping OpenAI's governance and the broader AI industry's competitive landscape. The trial raises critical questions about corporate structure, intellectual property, and the direction of AI development that could influence how future AI companies operate.

🏢 OpenAI

AI · Bearish · crypto.news · Apr 20 · 7/10

🧠The NSA is reportedly using Anthropic's advanced Mythos Preview AI model even though the Department of Defense previously designated the startup a 'supply chain risk.' The development highlights tension between US national security agencies over AI procurement and vendor assessment, with implications for how government entities evaluate AI safety and security risks.

🏢 Anthropic

AI · Bullish · AI News · Apr 20 · 7/10

🧠Anthropic CEO Dario Amodei met with White House Chief of Staff Susie Wiles, marking a significant political engagement driven by the company's Mythos AI model. The meeting suggests growing government interest in Anthropic's AI capabilities, particularly related to cybersecurity applications and responsible AI development.

🏢 Anthropic

AI · Bullish · arXiv – CS AI · Apr 20 · 7/10

🧠Researchers present symbolic guardrails as a practical approach to enforce safety and security constraints on AI agents that use external tools. Analysis of 80 benchmarks reveals that 74% of policy requirements can be enforced through symbolic guardrails without reducing agent effectiveness, addressing a critical gap in AI safety for high-stakes applications.
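The summary does not describe the paper's guardrail language, so the following is only a minimal sketch of the general idea: deterministic rules checked against each proposed tool call before it executes. The `ToolCall` schema, the three rules (a tool allowlist, a sandbox path check, and a spending limit), and the `guarded_call` wrapper are all hypothetical illustrations, not the researchers' implementation.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Optional


@dataclass
class ToolCall:
    """A tool invocation proposed by the agent (hypothetical schema)."""
    tool: str
    args: dict[str, Any] = field(default_factory=dict)


# A rule inspects a proposed call and returns a violation message, or None if allowed.
Rule = Callable[[ToolCall], Optional[str]]

ALLOWED_TOOLS = {"search", "read_file", "write_file", "transfer_funds"}  # hypothetical


def tool_allowlisted(call: ToolCall) -> Optional[str]:
    if call.tool not in ALLOWED_TOOLS:
        return f"tool {call.tool!r} is not on the allowlist"
    return None


def writes_stay_in_sandbox(call: ToolCall) -> Optional[str]:
    if call.tool == "write_file":
        path = str(call.args.get("path", ""))
        # Reject paths outside /sandbox/ and any ".." segment that could escape it.
        if not path.startswith("/sandbox/") or ".." in path.split("/"):
            return f"write outside /sandbox/: {path!r}"
    return None


def transfer_under_limit(call: ToolCall, limit: float = 1000.0) -> Optional[str]:
    if call.tool == "transfer_funds" and float(call.args.get("amount", 0)) > limit:
        return f"transfer of {call.args['amount']} exceeds limit {limit}"
    return None


RULES: list[Rule] = [tool_allowlisted, writes_stay_in_sandbox, transfer_under_limit]


def violations(call: ToolCall, rules: list[Rule] = RULES) -> list[str]:
    """Evaluate every rule; an empty list means the call may proceed."""
    return [msg for rule in rules if (msg := rule(call)) is not None]


def guarded_call(call: ToolCall, execute: Callable[[ToolCall], Any]) -> Any:
    """Block the call before it reaches the tool if any rule is violated."""
    problems = violations(call)
    if problems:
        raise PermissionError("; ".join(problems))
    return execute(call)
```

Because the checks run outside the model, a benign call such as `ToolCall("search", {"q": "policy"})` passes through unchanged, while `ToolCall("write_file", {"path": "/etc/passwd"})` is rejected regardless of how the agent justified it. That separation is one plausible reason such guardrails need not reduce agent effectiveness on permitted tasks.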