AI × Crypto · Bullish · Bankless · 3d ago · 7/10

🤖Eigen Labs launched Darkbloom, a system that converts idle Apple Silicon Macs into a distributed private inference network for AI processing. This development addresses computational bottlenecks in AI inference while enabling hardware owners to monetize underutilized devices.

AI × Crypto · Bullish · Crypto Briefing · 4d ago · 7/10

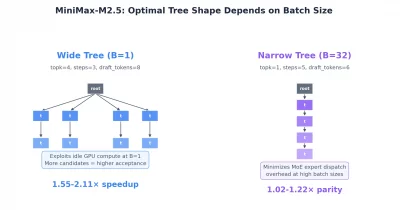

🤖MiniMax has announced its M3 model featuring a 15.6x faster decoding speed compared to previous versions, potentially reducing latency and operational costs for decentralized AI applications. This advancement could enhance scalability and efficiency across AI infrastructure, making decentralized AI systems more practical and cost-effective for broader adoption.

AI × Crypto · Bullish · Bankless · May 23 · 7/10

🤖Venice AI applies cypherpunk principles to artificial intelligence inference, building privacy protections into AI systems rather than treating them as an afterthought. The project draws philosophical parallels to the cypherpunk movement's core belief that privacy must be architecturally embedded, not granted by benevolent actors.

AI · Bullish · Hugging Face Blog · May 23 · 7/10

🧠NVIDIA's Nemotron-Labs team has developed diffusion-based language models that significantly accelerate text generation speeds, approaching real-time inference capabilities. This advancement combines diffusion model efficiency with language understanding, potentially reshaping how AI systems balance quality and computational cost.

AI × Crypto · Bullish · Bankless · May 15 · 7/10

🤖Cerebras' IPO signals a fundamental market shift from AI model training to inference optimization. Venice's ecosystem, featuring tokens like DIEM and POD, is positioned to capitalize on this transition as demand for efficient inference infrastructure grows.

AI · Bullish · arXiv – CS AI · Apr 14 · 7/10

🧠Researchers introduce Introspective Diffusion Language Models (I-DLM), a new approach that combines the parallel generation speed of diffusion models with the quality of autoregressive models by ensuring models verify their own outputs. I-DLM achieves performance matching conventional large language models while delivering 3x higher throughput, potentially reshaping how AI systems are deployed at scale.

AI · Bullish · arXiv – CS AI · Mar 17 · 7/10

🧠Researchers have discovered that large AI models develop decomposable internal structures during training, with many parameter dependencies remaining statistically unchanged from initialization. They propose a post-training method to identify and remove unsupported dependencies, enabling parallel inference without modifying model functionality.

AI · Bullish · IEEE Spectrum – AI · Mar 16 · 7/10

🧠Nvidia announced the Groq 3 LPU at GTC 2024, its first chip specifically designed for AI inference rather than training, incorporating technology licensed from startup Groq for $20 billion. The chip uses SRAM memory integrated within the processor to achieve 7x higher memory bandwidth than traditional GPUs, optimizing for the low latency required for real-time AI inference applications.

🏢 Nvidia

AI · Bullish · arXiv – CS AI · Mar 5 · 6/10

🧠Researchers developed NRR-Phi, a framework that prevents large language models from prematurely committing to single interpretations of ambiguous text. The system maintains multiple valid interpretations in a non-collapsing state space, achieving 1.087 bits of mean entropy compared to zero for traditional collapse-based models.

AI · Bullish · arXiv – CS AI · Mar 5 · 7/10

🧠Researchers introduce the Probability Navigation Architecture (PNA) framework, which trains State Space Models with thermodynamic principles, and find that SSMs develop 'architectural proprioception': the ability to predict when to stop computation based on internal state entropy. The authors report that SSMs can achieve this computational self-awareness while Transformers cannot, with significant implications for efficient AI inference systems.

AI · Bullish · arXiv – CS AI · Mar 4 · 6/10 · 3

🧠Researchers propose a heterogeneous computing framework for Mixture-of-Experts AI models that combines analog in-memory computing with digital processing to improve energy efficiency. The approach identifies noise-sensitive experts for digital computation while running the majority on analog hardware, eliminating the need for costly retraining of large models.

AI · Bullish · arXiv – CS AI · Mar 3 · 7/10 · 4

🧠Researchers have developed Hierarchical Speculative Decoding (HSD), a new method that significantly improves AI inference speed while maintaining accuracy by solving joint intractability problems in verification processes. The technique shows over 12% performance gains when integrated with existing frameworks like EAGLE-3, establishing new state-of-the-art efficiency standards.

AI · Bullish · arXiv – CS AI · May 11 · 6/10

🧠Researchers propose VecCISC, an optimization framework for weighted majority voting in large language models that reduces computational costs by 47% while maintaining accuracy. The method filters redundant or hallucinated reasoning traces using semantic similarity before evaluation, addressing the expensive overhead of confidence-scoring multiple candidate answers.

AI · Bullish · Decrypt – AI · May 7 · 6/10

🧠Google has developed Multi-Token Prediction drafters that accelerate Gemma 4 inference by up to 3x on local hardware without requiring cloud infrastructure or sacrificing output quality. This advancement makes efficient on-device AI more practical for developers and users seeking faster, privacy-preserving language model performance.

AI · Neutral · arXiv – CS AI · May 1 · 6/10

🧠Researchers present a framework for optimizing AI inference workload placement across geographically distributed data centers by treating computation as relocatable electricity demand. The model balances latency constraints against energy costs and carbon intensity, revealing that workload flexibility significantly expands execution geography but faces practical friction from migration costs, regulatory limits, and network constraints.

AI · Bullish · arXiv – CS AI · Apr 14 · 6/10

🧠Researchers propose CUTEv2, a unified matrix extension architecture for CPUs that decouples matrix units from the pipeline to enable efficient AI workload processing across diverse architectures. The design achieves significant speedups (1.57x-2.31x) on major AI models while occupying minimal silicon area (0.53 mm² in 14nm), demonstrating practical viability for open-source CPU development.

🧠 Llama

AI · Bullish · arXiv – CS AI · Mar 3 · 6/10 · 3

🧠MeanCache introduces a training-free caching framework that accelerates Flow Matching inference by using average velocities instead of instantaneous ones. The framework achieves 3.59X to 4.56X acceleration on major AI models like FLUX.1, Qwen-Image, and HunyuanVideo while maintaining superior generation quality compared to existing caching methods.

AI · Neutral · arXiv – CS AI · Mar 2 · 7/10 · 15

🧠Researchers tested distributed AI inference across device, edge, and cloud tiers in a 5G network, finding that sub-second AI response times required for embodied AI are challenging to achieve. On-device execution took multiple seconds, while RAN-edge deployment with quantized models could meet 0.5-second deadlines, and cloud deployment achieved 100% success for 1-second deadlines.

$NEAR

AI · Bullish · arXiv – CS AI · Mar 2 · 7/10 · 18

🧠Researchers propose Semantic Parallelism and a framework built on it, Sem-MoE, which improves the efficiency of large language model inference by optimizing how models distribute computational tasks across multiple devices. The system reduces communication overhead between devices by 'collocating' frequently used model components with their corresponding data, achieving superior throughput compared to existing solutions.

AI × Crypto · Neutral · CoinTelegraph – AI · Jan 30 · 6/10

🤖While AI training remains dominated by hyperscale data centers, decentralized GPU networks are finding opportunities in AI inference and everyday computational workloads. This shift suggests a potential niche market for distributed computing infrastructure in the broader AI ecosystem.

AI · Bullish · Hugging Face Blog · Jul 21 · 6/10 · 5

🧠NVIDIA has partnered with Hugging Face to integrate NIM (NVIDIA Inference Microservices) to accelerate large language model deployment and inference. This collaboration aims to make AI model deployment more efficient and accessible through optimized GPU acceleration on the Hugging Face platform.

AI · Bullish · Hugging Face Blog · Jul 29 · 6/10 · 5

🧠Hugging Face has partnered with NVIDIA to integrate NIM (NVIDIA Inference Microservices) for serverless AI model inference. This collaboration enables developers to deploy and scale AI models more efficiently using NVIDIA's optimized inference infrastructure through Hugging Face's platform.

AI · Bullish · Hugging Face Blog · Apr 16 · 6/10 · 4

🧠The article discusses methods for running privacy-preserving machine learning inference on Hugging Face endpoints. This technology allows users to perform AI model computations while protecting sensitive input data from being exposed to the service provider.

AI · Bullish · Hugging Face Blog · Mar 20 · 6/10 · 4

🧠The article discusses running Microsoft's Phi-2 chatbot model locally on Intel's Meteor Lake processors. This represents a significant advancement in bringing AI capabilities directly to consumer laptops without requiring cloud connectivity.

AI · Bullish · Hugging Face Blog · Feb 1 · 6/10 · 6

🧠Hugging Face has made its Text Generation Inference (TGI) service available on AWS Inferentia2 chips, enabling more cost-effective deployment of large language models. This integration allows developers to leverage AWS's custom AI inference chips for running text generation workloads with improved performance and reduced costs.