AI · Bullish · arXiv – CS AI · May 12 · 7/10

🧠Researchers introduce WorldSpeech, a multilingual speech corpus containing 65,000 hours of aligned audio-transcript data across 76 languages, addressing the shortage of ASR training data for low-resource languages. Fine-tuning existing ASR models on this dataset yields an average 63.5% relative reduction in word error rate (WER), improving speech recognition accuracy for underrepresented languages.
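
Several items in this feed report results as relative WER reductions, while others report absolute percentage-point changes. As a minimal sketch of the difference, the snippet below computes WER from a word-level edit distance and then a relative reduction between two WER values. The function names and the example numbers (a baseline WER of 0.40 and a fine-tuned WER of 0.146) are illustrative assumptions and do not come from the WorldSpeech paper.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = word-level edit distance / number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # Row-by-row Levenshtein distance over words
    # (substitutions, deletions, and insertions all cost 1).
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


def relative_wer_reduction(baseline_wer: float, new_wer: float) -> float:
    """Fraction of the baseline WER that was removed."""
    if baseline_wer <= 0:
        raise ValueError("baseline WER must be positive")
    return (baseline_wer - new_wer) / baseline_wer


if __name__ == "__main__":
    # Hypothetical numbers, chosen only to illustrate the arithmetic.
    baseline, finetuned = 0.40, 0.146
    print(f"relative reduction: {relative_wer_reduction(baseline, finetuned):.1%}")
    print(f"absolute reduction: {(baseline - finetuned) * 100:.1f} percentage points")
```

With these assumed values, the same improvement reads very differently depending on whether it is stated in relative terms or in percentage points, which is worth keeping in mind when comparing the figures quoted across items.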

AI · Bullish · arXiv – CS AI · 2d ago · 6/10

🧠Researchers introduce Agentic ASR, a multi-turn interactive speech recognition framework that enables iterative refinement of recognized speech through semantic correction and reasoning-based editing. The approach addresses limitations of single-pass ASR systems by aligning with human communication patterns, introducing a new semantic evaluation metric (S²ER) that better captures meaning-critical errors than traditional token-level metrics.

AI · Bullish · arXiv – CS AI · Apr 13 · 6/10

🧠Researchers propose Interactive ASR, a new framework that combines semantic-aware evaluation using LLM-as-a-Judge with multi-turn interactive correction to improve automatic speech recognition beyond traditional word error rate metrics. The approach simulates human-like interaction, enabling iterative refinement of recognition outputs across English, Chinese, and code-switching datasets.

AI · Bearish · arXiv – CS AI · Mar 27 · 6/10

🧠Researchers introduced WildASR, a multilingual diagnostic benchmark revealing that current ASR systems suffer severe performance degradation in real-world conditions despite achieving near-human accuracy on curated tests. The study found that ASR models often hallucinate plausible but unspoken content under degraded inputs, creating safety risks for voice agents.

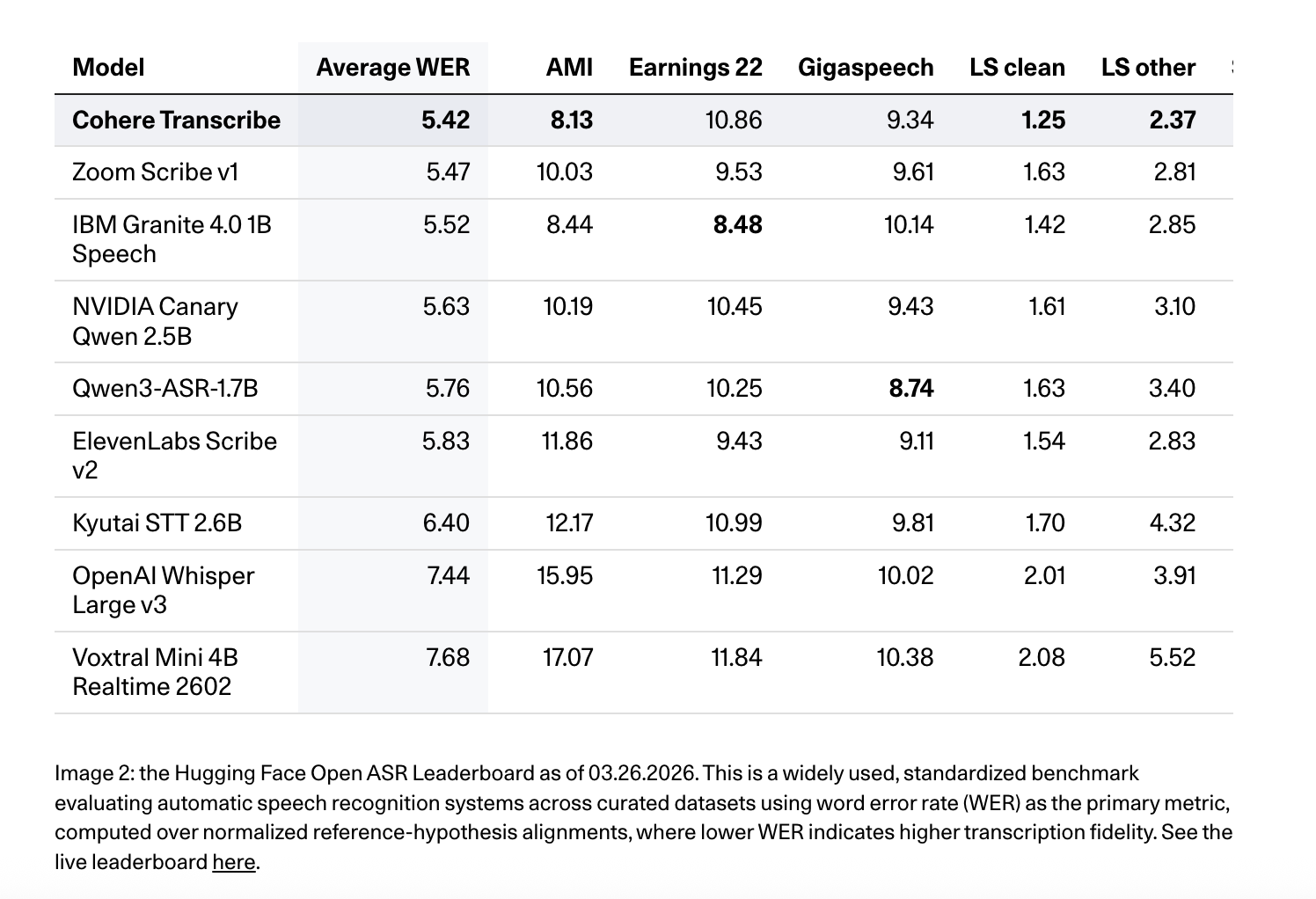

AI · Bullish · MarkTechPost · Mar 26 · 6/10

🧠Cohere AI has released Cohere Transcribe, a new state-of-the-art Automatic Speech Recognition (ASR) model designed for enterprise applications. This marks the company's expansion beyond text generation and embedding models into the speech recognition market, targeting enterprise speech intelligence solutions.

🏢 Cohere

AI · Bullish · MarkTechPost · Mar 17 · 6/10

🧠Google AI has released WAXAL, an open multilingual speech dataset covering 24 African languages to improve Automatic Speech Recognition and Text-to-Speech systems. This addresses the significant data distribution problem where African languages remain poorly represented in speech technology training corpora.

🏢 Google

AI · Bullish · MarkTechPost · Mar 16 · 6/10

🧠IBM has released Granite 4.0 1B Speech, a compact multilingual speech-language model optimized for automatic speech recognition and translation. The model is specifically designed for enterprise and edge deployments where memory efficiency, low latency, and compute optimization are critical alongside performance quality.

AI · Bullish · arXiv – CS AI · Mar 11 · 6/10

🧠DuplexCascade introduces a VAD-free cascaded streaming pipeline that enables full-duplex speech-to-speech dialogue while maintaining LLM intelligence. The system converts traditional long utterance turns into micro-turn interactions using special control tokens to coordinate turn-taking and response timing.

AI · Bullish · arXiv – CS AI · Mar 11 · 6/10

🧠Facebook Research introduces the Latent Speech-Text Transformer (LST), which aggregates speech tokens into higher-level patches to improve computational efficiency and cross-modal alignment. The model achieves an absolute gain of up to 6.5% on speech HellaSwag benchmarks while maintaining text performance and reducing inference costs for ASR and TTS tasks.

AI · Bearish · arXiv – CS AI · Mar 9 · 6/10

🧠Research finds that speech LLMs do not perform significantly better than traditional ASR→LLM pipelines in most deployed scenarios. The study shows that speech LLMs essentially function as expensive cascades that perform worse under noisy conditions, with their advantage reversing by up to 7.6% at 0 dB noise levels.


AI · Bullish · arXiv – CS AI · Mar 4 · 5/10 · 3

🧠Researchers developed GLoRIA, a parameter-efficient framework for automatic speech recognition that adapts to regional dialects using location metadata. The system achieves state-of-the-art performance while updating less than 10% of model parameters and demonstrates strong generalization to unseen dialects.

AI · Bullish · arXiv – CS AI · Mar 3 · 7/10 · 7

🧠Researchers introduce Whisper-MLA, a modified version of OpenAI's Whisper speech recognition model that uses Multi-Head Latent Attention to reduce GPU memory consumption by up to 87.5% while maintaining accuracy. The innovation addresses a key scalability issue with transformer-based ASR models when processing long-form audio.

AI · Bullish · arXiv – CS AI · Mar 2 · 6/10 · 15

🧠Researchers developed Whisper-LLaDA, a diffusion-based large language model for automatic speech recognition that achieves 12.3% relative improvement over baseline models. The study demonstrates that audio-conditioned embeddings are crucial for accuracy improvements, while plain-text processing without acoustic features fails to enhance performance.

AI · Bullish · arXiv – CS AI · Feb 27 · 6/10 · 7

🧠Researchers developed a new framework based on an RNN-T architecture to improve speech recognition for Taiwanese Hakka, an endangered low-resource language with high dialectal variability. The system achieved relative error rate reductions of 57% and 40% for two different writing systems, in what the summary describes as the first systematic investigation of Hakka dialect variation in ASR.

AI · Bullish · arXiv – CS AI · Mar 17 · 5/10

🧠Researchers have developed a Video-Guided Post-ASR Correction (VPC) framework that uses Video-Large Multimodal Models to improve speech recognition accuracy in complex environments like TV series. The system addresses challenges with multiple speakers, overlapping speech, and domain-specific terminology by leveraging video context to refine ASR outputs.

AI · Neutral · arXiv – CS AI · Mar 17 · 5/10

🧠Researchers developed a Bayesian low-rank adaptation method for personalizing automatic speech recognition systems to better understand impaired speech. The approach targets ASR systems such as Whisper, which struggle with non-normative speech patterns arising from conditions such as cerebral palsy, and uses data-efficient fine-tuning on English and German datasets.

AI · Neutral · arXiv – CS AI · Mar 5 · 4/10

🧠Researchers introduce ACES, a new method to analyze how automatic speech recognition systems perform differently across accents. The study finds that accent information is concentrated in early neural network layers and is deeply intertwined with speech recognition capabilities, making simple bias removal ineffective.

AI · Neutral · arXiv – CS AI · Mar 4 · 4/10 · 3

🧠Researchers introduce Whisper-RIR-Mega, a new benchmark dataset for testing automatic speech recognition robustness in reverberant acoustic environments. The study evaluates five Whisper models and finds that reverberation consistently degrades performance across all model sizes, with word error rates increasing by 0.12 to 1.07 percentage points.

AI · Bullish · arXiv – CS AI · Mar 4 · 4/10 · 2

🧠Researchers developed a multistage AI approach for Bengali speech transcription and speaker diarization, achieving significant improvements in processing long-form audio recordings. The system used fine-tuned Whisper models and custom segmentation techniques to address the low-resource nature of Bengali in speech technology applications.

AI · Neutral · arXiv – CS AI · Mar 3 · 4/10 · 4

🧠Researchers developed an optimized speech-to-text translation pipeline for Nepali-to-English that addresses punctuation loss issues in low-resource language processing. By implementing a Punctuation Restoration Module, they achieved a 4.90 BLEU point improvement over baseline systems, demonstrating significant quality gains for cascaded translation architectures.

AI · Neutral · arXiv – CS AI · Feb 27 · 4/10 · 2

🧠Researchers developed a robust framework for Bangla automatic speech recognition and speaker diarization that can handle long-form audio exceeding 30-60 seconds. The system uses Voice Activity Detection optimization and Connectionist Temporal Classification segmentation to maintain accuracy over extended durations in multi-speaker environments.

AI · Neutral · Hugging Face Blog · Nov 21 · 4/10 · 8

🧠Judging by its title, the article covers the Open ASR (Automatic Speech Recognition) Leaderboard, focusing on trends and insights from its new multilingual and long-form evaluation tracks. The article body was not available, so no specific details about ASR developments can be reported.

AI · Bullish · Hugging Face Blog · May 1 · 5/10 · 6

🧠The article appears to discuss speech processing capabilities available through Hugging Face Inference Endpoints, including Automatic Speech Recognition (ASR), speaker diarization, and speculative decoding. The article body was not available for detailed analysis.

AI · Neutral · Hugging Face Blog · Jan 19 · 4/10 · 4

🧠The article appears to cover fine-tuning W2V2-BERT (Wav2Vec2-BERT) for automatic speech recognition in low-resource languages using Hugging Face Transformers. The article body was not available, so the technical implementation and methodology cannot be described in detail.

AI · Neutral · Hugging Face Blog · Jun 19 · 4/10 · 6

🧠The article discusses fine-tuning MMS (Massively Multilingual Speech) adapter models for automatic speech recognition (ASR) in low-resource language scenarios. This approach aims to improve speech recognition performance for languages with limited training data by leveraging pre-trained multilingual models and adapter techniques.