#generative-ai News & Analysis

Recent coverage of #generative-ai spans 89 articles in the past month, with sentiment evenly split between bullish and neutral perspectives at 40.4% each, while bearish views account for 19.1%. The overall tone has softened compared to the previous quarter, with bullish sentiment declining 14.1 percentage points. Academic research dominates the discourse through arXiv submissions, while discussions frequently center on specific systems like Stable Diffusion, ChatGPT, and companies such as Anthropic.

The tag currently indexes 264 articles total, with coverage frequently intersecting with #machine-learning, #diffusion-models, and #ai-research. Scan the article list below to explore recent developments and perspectives on the topic.

Sentiment · last 30d (89 articles) · -14.1pp bullish vs prior 90d

Top sources: arXiv – CS AI · 150, TechCrunch – AI · 10, Blockonomi · 7, Crypto Briefing · 5, Fortune Crypto · 5

Most-discussed entities: Stable Diffusion · 6, ChatGPT · 6, Anthropic · 6, Nvidia · 5, Gemini · 5

AI · Bullish · Crypto Briefing · 3d ago · 7/10

🧠Fountain 0 premiered an AI-generated film titled Dreams of Violets at the Tribeca Festival, produced for just $2,000, demonstrating how artificial intelligence is democratizing filmmaking by enabling creators to produce high-quality narratives with minimal financial resources.

AI · Neutral · The Verge – AI · 3d ago · 7/10

🧠An AI-generated feature film titled Dreams of Violets will premiere at the Tribeca Festival next month, marking the first full-length live-action film created entirely by AI to be accepted by a major film festival. The 75-minute film cost just $2,000 to produce and dramatizes Iran's January mass killing of protestors using entirely AI-generated imagery and characters.

AI · Bearish · Crypto Briefing · 3d ago · 7/10

🧠CNN has filed a copyright infringement lawsuit against Perplexity, an AI company, over the use of its content in AI-generated responses. The case highlights growing legal tensions between content creators and AI firms, with potential industry-wide implications for how AI systems are trained and deployed.

🏢 Perplexity

AI · Bearish · The Verge – AI · 3d ago · 7/10

🧠CNN has sued Perplexity, alleging the AI startup's search engine generates verbatim copies of its news content and provides access to paywalled articles without permission. The lawsuit claims Perplexity ignored CNN's crawler-blocking efforts while profiting from human-created journalism without compensation.

🏢 Perplexity

AI · Bearish · arXiv – CS AI · 3d ago · 7/10

🧠Researchers introduce Deepfake-Eval-2024, a new benchmark dataset of real-world deepfakes collected from social media in 2024, revealing that state-of-the-art detection models experience dramatic performance drops of 45-50% compared to academic benchmarks. The findings underscore a critical gap between laboratory-validated deepfake detectors and their effectiveness against actual manipulated content in circulation.

AI · Neutral · arXiv – CS AI · 3d ago · 7/10

🧠A comprehensive academic resource presenting the unified mathematical foundations of diffusion models, explaining how three complementary perspectives—variational, score-based, and flow-based—emerge from shared principles. The work bridges theoretical understanding with practical applications including controllable generation and efficient sampling methods.

AI · Bullish · arXiv – CS AI · 3d ago · 7/10

🧠Researchers propose LIFT and PLACE, a knowledge distillation framework that enables stable training of extremely lightweight diffusion models by decomposing the teacher's complex denoising process into coarse and fine stages with spatially adaptive guidance. The method achieves stable convergence even at extreme compression ratios (1.6% of teacher size) where conventional distillation fails, with potential applications across image generation, latent diffusion, and flow-based models.

AI · Neutral · arXiv – CS AI · 3d ago · 7/10

🧠Researchers propose a steganographic method to trace the lineage of AI-generated content by embedding hidden traits in synthetic information, addressing the challenge of attribution in an era where AI models produce outputs with little apparent connection to their sources. The approach treats synthetic information inheritance analogously to biological evolution, enabling verification of parentage and maintaining accountability in AI-generated data.

AI · Bullish · Simon Willison Blog · 4d ago · 7/10

🧠An analyst argues that Anthropic and OpenAI have achieved product-market fit, indicating their AI assistants have reached sufficient user adoption and value delivery to sustain long-term growth. This assessment reflects the maturation of consumer-facing AI products and suggests the market has moved beyond experimental adoption to mainstream utility.

🏢 OpenAI · 🏢 Anthropic

AI · Neutral · Last Week in AI · 4d ago · 7/10

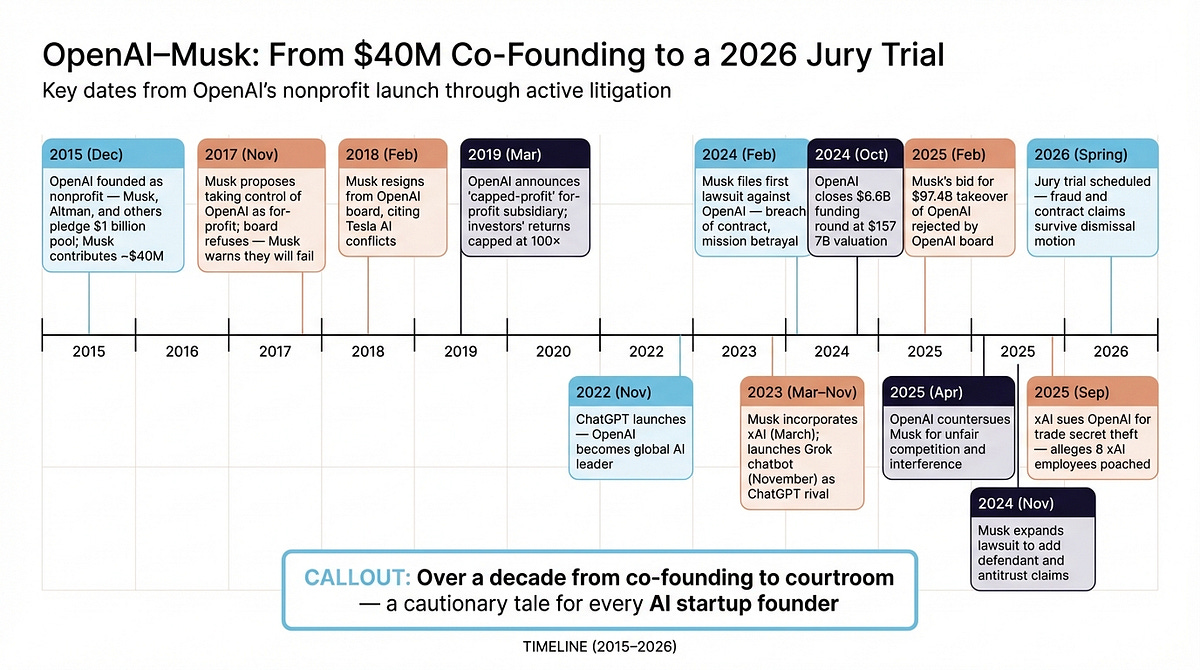

🧠Elon Musk's $150 billion lawsuit against OpenAI and Sam Altman was dismissed, marking a significant legal defeat for the Tesla CEO. Simultaneously, Google unveiled substantial updates to its Gemini app at I/O 2026 designed to compete directly with ChatGPT and Claude, while OpenAI achieved a notable breakthrough in solving the Erdős problem.

🏢 OpenAI · 🧠 ChatGPT · 🧠 Claude

AI · Bullish · arXiv – CS AI · 4d ago · 7/10

🧠Researchers introduce DIDR (Diff-Instruct with Diffused Reward), a reinforcement learning framework that improves one-step text-to-image generation by aligning reward optimization with diffusion dynamics. The method addresses a fundamental mismatch in existing approaches where optimizing for image-space rewards often degrades overall image fidelity, demonstrating superior results compared to current SDXL baselines.

AI · Bullish · arXiv – CS AI · 4d ago · 7/10

🧠Kandinsky 5.0 is a new family of open-source foundation models for image and video generation, featuring lightweight 2B-6B parameter variants for fast inference and a 19B professional model for superior quality. The release includes comprehensive data curation methods, architectural optimizations, and publicly available code designed to democratize access to state-of-the-art generative AI.

AI · Bullish · arXiv – CS AI · 4d ago · 7/10

🧠Researchers demonstrate that stochasticity in discrete diffusion models provides an error-correcting mechanism that improves the speed-quality tradeoff in generative AI. They propose Discrete Churn and Restart Sampling (DCRS), which achieves up to 10x faster sampling on images while maintaining quality by strategically injecting controlled randomness into the inference process.

AI · Bullish · arXiv – CS AI · 4d ago · 7/10

🧠Researchers have developed a bias correction technique for quantizing KV-cache memory in video diffusion models, addressing a fundamental problem where quantization noise causes inflated attention to cached data. The method recovers near-full quality video generation while using 50% less memory than standard approaches, enabling longer video synthesis without sacrificing output quality.

AI · Bullish · arXiv – CS AI · 4d ago · 7/10

🧠Researchers introduce Domain-Gated Latent Diffusion (DGLD), an AI method that discovered 12 novel energetic materials using generative diffusion models with quality-gated training and multi-task guidance. The breakthrough identified two lead compounds with performance metrics rivaling HMX-class materials for the first time in 15 years, validated through DFT simulations and released with open-source code.

AI · Bearish · arXiv – CS AI · 4d ago · 7/10

🧠Researchers have developed BEAP, a black-box adversarial attack that bypasses machine unlearning safeguards in text-to-image diffusion models by generating natural-language prompts that evade detection filters. The attack achieves 60% higher success rates than previous methods while remaining undetectable to safety systems, raising critical questions about the robustness of AI model safety mechanisms.

AI · Bearish · arXiv – CS AI · 4d ago · 7/10

🧠Researchers have developed SD-MIA, a black-box membership inference attack that can detect whether specific images were used in training diffusion-based image generation models by analyzing how the model denoises images and perturbed text instructions. This technique outperforms existing methods without requiring access to internal model features, raising significant privacy and copyright concerns for AI developers and users.

AI · Bullish · arXiv – CS AI · 4d ago · 7/10

🧠Researchers have developed a framework using behavioral geometry to predict which AI models are vulnerable to jailbreak attacks and efficiently transfer defensive measures across model populations. The approach achieves 94% detection accuracy while reducing evaluation probes by 98%, enabling practical security assessment across thousands of model configurations.

AI · Bullish · Hugging Face Blog · May 23 · 7/10

🧠NVIDIA's Nemotron-Labs team has developed diffusion-based language models that significantly accelerate text generation speeds, approaching real-time inference capabilities. This advancement combines diffusion model efficiency with language understanding, potentially reshaping how AI systems balance quality and computational cost.

AI · Bullish · Ars Technica – AI · May 19 · 7/10

🧠Google has released Gemini 3.5 Flash, a more efficient version of its language model designed to enable practical agentic AI applications. The company positions this faster, lighter model as essential infrastructure for making generative AI economically viable at scale.

🧠 Gemini

AI · Bullish · Google AI Blog · May 19 · 7/10

🧠A major technology company announced a significant advancement in search technology by integrating artificial intelligence capabilities with traditional search engine functionality. This development represents a strategic shift toward hybrid search solutions that combine AI's generative and analytical strengths with search engines' indexing and retrieval capabilities.

AI · Bullish · arXiv – CS AI · May 12 · 7/10

🧠HyperTransport is a new hypernetwork framework that dramatically accelerates activation steering for text-to-image models by amortizing optimization costs across multiple concepts. Rather than optimizing intervention parameters for each new concept (which takes minutes), the system learns to map CLIP embeddings directly to steering parameters in a single forward pass, achieving 3600-7000x speedup while matching per-concept baselines on unseen concepts.

AI · Bullish · arXiv – CS AI · May 12 · 7/10

🧠SWIFT is a new training-free framework for generating long videos with multiple prompt changes, addressing the challenge of maintaining visual coherence while rapidly adapting to semantic shifts. The system achieves 22.6 FPS on single H100 GPUs by using adaptive memory management and selective attention updates, rather than rebuilding cached memory at each prompt boundary.

AI · Bullish · arXiv – CS AI · May 12 · 7/10

🧠SynerDiff is a new continuous batching system for diffusion model inference that addresses resource contention issues between UNet and VAE components. The system achieves 1.6× throughput improvement and up to 78.7% latency reduction through intra-level and inter-level optimization strategies, enabling faster AI-generated content services.

AI · Bullish · arXiv – CS AI · May 12 · 7/10

🧠Researchers introduce Auto-Rubric as Reward (ARR), a framework that replaces opaque scalar reward signals in multimodal AI alignment with explicit, structured criteria-based evaluation. By externalizing a model's implicit preferences into interpretable rubrics before comparison, ARR reduces evaluation bias and enables more reliable human-preference alignment in generative models.