#scalability News & Analysis

Recent coverage tagged #scalability spans 16 articles in the past month, with 56.3% expressing bullish sentiment, though this represents a 17.8 percentage point decline from the prior 90 days. The topic draws heavily from technical research, with arXiv Computer Science and AI dominating source distribution, alongside crypto-focused outlets like U.Today and Crypto Briefing. Discussions frequently intersect with machine learning, blockchain infrastructure, and Ethereum development, with notable mentions of OpenAI and ChatGPT.

The sentiment softening suggests growing scrutiny of scalability claims across both AI and distributed systems domains. Browse the indexed articles below to explore the full range of recent perspectives on this tag.

Sentiment · last 30d (16 articles) · -17.8pp bullish vs prior 90d

Top sources: arXiv – CS AI · 28, U.Today · 5, Crypto Briefing · 4, Bankless · 4, OpenAI News · 2

Most-discussed entities: OpenAI · 3, ChatGPT · 1, Perplexity · 1
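The headline sentiment figures above can be cross-checked with a little arithmetic. The sketch below is illustrative only: the count of 9 bullish articles is an assumption inferred from the reported 56.3% of 16 articles, and the function names are hypothetical. It also separates a change in percentage points from a relative percentage change, which are easy to confuse.

```python
def bullish_share(bullish_count: int, total: int) -> float:
    """Percentage of articles labeled bullish."""
    if total <= 0:
        raise ValueError("total must be positive")
    return 100.0 * bullish_count / total


def prior_share(current_pct: float, change_pp: float) -> float:
    """Recover the earlier share from the current share and a change in percentage points."""
    return current_pct - change_pp


# Assumption: 9 of the 16 articles are bullish (9/16 = 56.25%, reported on the page as 56.3%).
current = bullish_share(9, 16)

# The page reports a change of -17.8 percentage points against the prior 90 days.
previous = prior_share(56.3, -17.8)

# Relative decline, expressed as a percentage of the earlier share.
relative_drop = 100.0 * 17.8 / previous

print(f"current share: {current:.2f}%")
print(f"implied prior share: {previous:.1f}%")
print(f"relative decline: {relative_drop:.1f}%")
```

Under these assumptions the implied earlier bullish share is about 74%, so a 17.8 percentage point drop corresponds to a relative decline of roughly a quarter.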

Crypto · Bullish · crypto.news · 2d ago · 7/10

⛓️Coinbase's Layer 2 solution Base has launched Base Azul on mainnet, introducing Trusted Execution Environment (TEE) technology, zero-knowledge proofs, and increased transaction throughput to 5,000 TPS while targeting one-day withdrawal times. The upgrade represents a significant step toward improving Base's scalability and user experience with faster settlement and enhanced security features.

AI · Bullish · arXiv – CS AI · 3d ago · 7/10

🧠Researchers introduce LoRe, a training-free optimization method that dynamically routes computational resources to high-priority interactions in iterative graph solvers, achieving 8× speedup and 12× memory reduction on combinatorial optimization problems while maintaining solution quality.

AI · Bullish · arXiv – CS AI · 3d ago · 7/10

🧠Researchers propose EELMA, an algorithm that uses information-theoretic empowerment to evaluate language model agents at scale without manual benchmarking. The method measures an agent's ability to influence future states through its actions and demonstrates strong correlation with task performance across text-based, web, and tool-use environments.

AI · Bullish · arXiv – CS AI · 3d ago · 7/10

🧠PhoneWorld introduces a scalable pipeline that automatically converts real mobile app interactions into controllable environments, tasks, and training data for phone-use AI agents. The system demonstrates significant performance improvements across multiple benchmarks by leveraging real GUI trajectories rather than hand-built environments, addressing a critical bottleneck in mobile agent development.

AI · Bullish · TechCrunch – AI · 3d ago · 7/10

🧠Major cloud infrastructure providers including AWS and Cloudflare are restructuring their platforms to accommodate AI agents moving from experimental phases into production environments. This shift reflects a fundamental change in internet traffic patterns, where machine-generated interactions are increasingly replacing human-centric usage, requiring new architectural approaches to handle different performance and scalability requirements.

Crypto · Bullish · Crypto Briefing · 3d ago · 7/10

⛓️Major cryptocurrency platforms including Fireblocks, Robinhood, and MetaMask have joined forces to launch the Open Transaction Layer (OTL), a standardized protocol designed to improve scalability and interoperability across blockchain networks. The initiative aims to reduce operational complexity and enhance global accessibility in on-chain finance.

AI × Crypto · Bullish · Crypto Briefing · 4d ago · 7/10

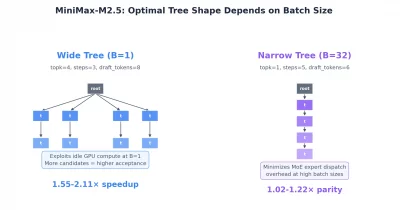

🤖MiniMax has announced its M3 model featuring a 15.6x faster decoding speed compared to previous versions, potentially reducing latency and operational costs for decentralized AI applications. This advancement could enhance scalability and efficiency across AI infrastructure, making decentralized AI systems more practical and cost-effective for broader adoption.

AI × Crypto · Neutral · arXiv – CS AI · May 12 · 7/10

🤖Researchers present the first comprehensive framework for token economics in LLM agents, unifying computer science and economics to address the exponential consumption of tokens that creates computational and security bottlenecks. The study proposes a four-dimensional taxonomy spanning micro-level agent optimization, multi-agent collaboration, ecosystem-wide pricing mechanisms, and security considerations, establishing theoretical foundations for scalable agentic AI systems.

AI · Bullish · arXiv – CS AI · May 12 · 7/10

🧠Researchers introduce G-Zero, a verifier-free framework that enables large language models to improve autonomously through self-play without relying on external judges or proxy models. The approach uses an intrinsic reward mechanism called Hint-δ to identify and address the Generator model's blind spots, achieving scalable self-evolution across unverifiable domains.

AI · Bullish · arXiv – CS AI · May 11 · 7/10

🧠Researchers introduce Weblica, a framework for creating reproducible and scalable web environments to train visual web agents at scale. The system uses HTTP-level caching and LLM-based synthesis to generate thousands of diverse training environments, with the resulting Weblica-8B model achieving competitive performance against larger API-based models on web navigation benchmarks.

AI · Bearish · arXiv – CS AI · May 11 · 7/10

🧠Researchers have published a comprehensive benchmark for Graph Anomaly Detection (GAD) models that exposes critical gaps between academic performance and real-world deployment. The study reveals that leading GAD methods fail to scale to million-node graphs, collapse under realistic anomaly scarcity (0.1%), and struggle with missing data—challenges absent from typical laboratory benchmarks.

AI · Bullish · arXiv – CS AI · May 11 · 7/10

🧠Researchers introduce a novel training strategy for neural posterior estimation that decouples representation learning from posterior modeling, enabling amortized inference on large observation sets by training only on pairs of examples. The approach dramatically reduces computational requirements while maintaining or improving performance across diverse benchmarks, making scalable Bayesian inference practical for real-world applications.

Crypto · Bullish · Crypto Briefing · May 9 · 7/10

⛓️Anza has achieved the first successful Alpenswitch implementation on its Alpenglow cluster, resulting in a 100x improvement in Solana's finalization time. This advancement positions Solana as a competitive challenger to traditional payment systems and could reshape blockchain transaction standards across the industry.

$SOL

Crypto · Bullish · Crypto Briefing · May 4 · 7/10

⛓️Ethereum's Glamsterdam upgrade increased the network's gas limit by 3.3x, enhancing transaction throughput and scalability. This technical improvement is expected to reduce congestion, lower transaction costs, and potentially strengthen investor confidence in Ethereum's long-term viability.

$ETH

AI · Bullish · OpenAI News · May 4 · 7/10

🧠OpenAI has rebuilt its WebRTC infrastructure to enable real-time voice AI conversations with minimal latency and global scalability. The technical achievement demonstrates a significant advancement in conversational AI systems that can maintain natural turn-taking dynamics while serving users worldwide.

🏢 OpenAI

Crypto · Bullish · U.Today · May 3 · 7/10

⛓️Ethereum is preparing to increase its gas limit to 200 million following a major upgrade, potentially tripling network capacity. This expansion aims to reduce transaction fees and improve throughput, addressing long-standing scalability concerns that have plagued the network during periods of high demand.

$ETH

Crypto · Bullish · Crypto Briefing · May 3 · 7/10

⛓️Ethereum has implemented the Glamsterdam upgrade, which triples the network's gas limit to enhance scalability. This improvement is expected to increase transaction throughput, reduce congestion, and potentially boost market sentiment by addressing one of Ethereum's primary limitations.

$ETH

Crypto · Bullish · U.Today · Apr 20 · 7/10

⛓️An Ethereum developer highlights that recent cryptocurrency security breaches underscore the network's need to adopt validity proofs to maintain competitiveness. This technical upgrade is positioned as critical for Ethereum's long-term scalability and security architecture as the blockchain ecosystem faces mounting exploitation risks.

$ETH

AI · Bullish · arXiv – CS AI · Apr 13 · 7/10

🧠TensorHub introduces Reference-Oriented Storage (ROS), a novel weight transfer system that enables efficient reinforcement learning training across distributed GPU clusters without physically copying model weights. The production-deployed system achieves significant performance improvements, reducing GPU stall time by up to 6.7x for rollout operations and improving cross-datacenter transfers by 19x.

AI · Bullish · arXiv – CS AI · Apr 13 · 7/10

🧠Researchers introduced Webscale-RL, a data pipeline that converts large-scale pre-training documents into 1.2 million diverse question-answer pairs for reinforcement learning training. The approach enables RL models to achieve pre-training-level performance with up to 100x fewer tokens, addressing a critical bottleneck in scaling RL data and potentially advancing more efficient language model development.

Crypto · Bullish · The Block · Apr 7 · 7/10

⛓️Polygon is set to activate the Giugliano hardfork on April 8, 2024, which will improve transaction finality and integrate fee parameters directly into block headers. This upgrade aims to enhance the network's performance and efficiency for users and developers.

$MATIC

AI · Bullish · arXiv – CS AI · Apr 7 · 7/10

🧠Researchers developed PALM (Portfolio of Aligned LLMs), a method to create a small collection of language models that can serve diverse user preferences without requiring individual models per user. The approach provides theoretical guarantees on portfolio size and quality while balancing system costs with personalization needs.

AI · Bullish · arXiv – CS AI · Apr 7 · 7/10

🧠Researchers introduce k-Maximum Inner Product (k-MIP) attention for graph transformers, enabling linear memory complexity and up to 10x speedups while maintaining full expressive power. The innovation allows processing of graphs with over 500k nodes on a single GPU and demonstrates top performance on benchmark datasets.
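As a rough illustration of the idea named in this summary, the sketch below restricts each query to the k keys with the largest inner product before applying softmax. It is a minimal pure-Python sketch under stated assumptions, not the paper's implementation: the function name `k_mip_attention` and its signature are hypothetical, and this naive version still scores every key per query, so it does not reproduce the reported linear memory complexity or speedups.

```python
import math


def k_mip_attention(queries, keys, values, k):
    """Attend each query only to the k keys with the largest inner product.

    Hypothetical illustration; inputs are lists of equal-length float vectors.
    """
    if not 1 <= k <= len(keys):
        raise ValueError("k must be between 1 and the number of keys")
    dim = len(values[0])
    outputs = []
    for q in queries:
        # Inner product of the query with every key (naive full scan).
        scores = [sum(qi * ki for qi, ki in zip(q, key)) for key in keys]
        # Indices of the k highest-scoring keys.
        top = sorted(range(len(keys)), key=lambda j: scores[j], reverse=True)[:k]
        # Numerically stable softmax over the selected scores only.
        peak = max(scores[j] for j in top)
        weights = [math.exp(scores[j] - peak) for j in top]
        total = sum(weights)
        # Weighted average of the selected value vectors.
        outputs.append(
            [sum(w * values[j][d] for w, j in zip(weights, top)) / total
             for d in range(dim)]
        )
    return outputs


queries = [[1.0, 0.0]]
keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
values = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
print(k_mip_attention(queries, keys, values, k=1))
```

With `k=1` each query simply returns the value of its best-matching key, and with `k` equal to the number of keys the function reduces to ordinary softmax attention without scaling.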

AI · Bullish · arXiv – CS AI · Apr 7 · 7/10

🧠Researchers have developed Combee, a new framework that enables parallel prompt learning for AI language model agents, achieving up to 17x speedup over existing methods. The system allows multiple AI agents to learn simultaneously from their collective experiences without quality degradation, addressing scalability limitations in current single-agent approaches.

AI · Bullish · arXiv – CS AI · Apr 6 · 7/10

🧠Researchers introduce Textual Equilibrium Propagation (TEP), a new method to optimize large language model compound AI systems that addresses performance degradation in deep, multi-module workflows. TEP uses local learning principles to avoid exploding and vanishing gradient problems that plague existing global feedback methods like TextGrad.