#robotics News & Analysis

The #robotics tag covers 249 indexed articles, 35 of them published in the last 30 days. Recent coverage leans bullish at 57.1%, with 40% neutral and 2.9% bearish, though the bullish share has fallen 15.8 percentage points compared to the prior 90 days. arXiv's computer science and AI sections dominate the source list, alongside coverage from AI News and TechCrunch's AI beat. Nvidia and OpenAI appear most frequently in related discussions.
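The sentiment split can be reproduced with simple arithmetic. The sketch below assumes the 35 recent articles break down as 20 bullish, 14 neutral, and 1 bearish. These counts are inferred from the published percentages rather than stated on the page, and the function name `sentiment_shares` is hypothetical.

```python
def sentiment_shares(counts):
    """Return each label's share of the total as a percentage, rounded to one decimal."""
    total = sum(counts.values())
    return {label: round(100 * n / total, 1) for label, n in counts.items()}


# Assumed counts, inferred from the published percentages (not given on the page).
recent = {"bullish": 20, "neutral": 14, "bearish": 1}
shares = sentiment_shares(recent)
print(shares)

# A 15.8-point drop in the bullish share implies the prior-period bullish share.
prior_bullish = round(shares["bullish"] + 15.8, 1)
print(prior_bullish)
```

Under these assumed counts the shares match the figures quoted above, and the implied bullish share for the prior 90 days comes out at roughly 72.9%.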

#robotics content intersects regularly with #machine-learning, #reinforcement-learning, #computer-vision, and #ai-research. Scan the articles below for the latest developments and perspectives in the field.

Sentiment · last 30d (35 articles) · -15.8pp bullish vs prior 90d

Top sources: arXiv – CS AI · 167, AI News · 7, TechCrunch – AI · 6, Crypto Briefing · 4, Blockonomi · 3

Most-discussed entities: Nvidia · 5, OpenAI · 4, Haiku · 1, Gemini · 1, Hugging Face · 1

AI · Bullish · arXiv – CS AI · May 9 · 7/10

🧠Researchers introduce EA-WM, an event-aware generative world model that bridges kinematic control and visual perception for robotic systems. By projecting robot actions directly into camera views as structured kinematic-to-visual action fields rather than abstract tokens, the model achieves state-of-the-art performance on the WorldArena benchmark, significantly advancing robot learning and simulation capabilities.

AI · Bullish · arXiv – CS AI · May 9 · 7/10

🧠Researchers introduce Stellar VLA, a continual learning framework for vision-language-action models that improves knowledge accumulation without adding network parameters. The approach uses knowledge-guided expert routing and hierarchical task structures, achieving strong performance on robotics benchmarks with minimal data replay and validated real-world transfer capabilities.

AI · Bullish · arXiv – CS AI · May 7 · 7/10

🧠Researchers introduce LAWS, a self-certifying caching architecture for neural inference that builds a library of expert functions with formal error bounds, enabling efficient deployment across LLMs, robotics, and edge devices. The system generalizes both Mixture-of-Experts and KV prefix caching while providing mathematically verifiable performance guarantees without requiring ground truth validation.

AI · Bullish · arXiv – CS AI · May 7 · 7/10

🧠Researchers introduce Q2RL, a novel algorithm that combines behavior cloning with reinforcement learning to enable robots to improve their policies through online interaction. The method uses Q-value estimation and gating mechanisms to prevent policy degradation from distribution mismatch, achieving 100% success rates on complex manipulation tasks in 1-2 hours of real robot learning.

AI · Bullish · arXiv – CS AI · May 4 · 7/10

🧠Researchers introduce Interleaved Vision-Language Reasoning (IVLR), a new AI framework that combines text and visual planning for robotic manipulation tasks. The system generates explicit reasoning traces alternating between textual subgoals and visual keyframes, achieving 95.5% success on LIBERO benchmarks and demonstrating that multimodal reasoning significantly outperforms text-only or vision-only approaches.

AI · Bullish · TechCrunch – AI · May 1 · 7/10

🧠Meta has acquired humanoid robotics startup Assured Robot Intelligence to strengthen its AI capabilities for robotic systems. The acquisition signals Meta's commitment to advancing artificial intelligence applications beyond software, positioning the company in the competitive robotics sector alongside tech giants pursuing embodied AI.

AI · Bullish · crypto.news · May 1 · 7/10

🧠137 Ventures has closed $700 million across two new funds, bringing its assets under management above $15 billion. The firm is concentrating its investment strategy on AI agents, robotics, advanced manufacturing, and maintaining its substantial $10 billion-plus stake in SpaceX.

AI · Bullish · arXiv – CS AI · May 1 · 7/10

🧠Researchers introduce PRTS, a Vision-Language-Action foundation model that reformulates robotic learning through goal-conditioned reinforcement learning rather than traditional behavior cloning. The system learns to assess goal reachability by embedding state-action pairs and language instructions in a unified space, achieving state-of-the-art performance on multiple robotic benchmarks and real-world tasks.

AI · Bearish · arXiv – CS AI · May 1 · 7/10

🧠Researchers present the first comprehensive threat modeling of LLM-enabled robotic systems, mapping three categories of attacks (cyber, adversarial, and conversational) across the perception-planning-actuation pipeline. The analysis reveals critical architectural vulnerabilities where compromised inputs or unsafe model outputs can propagate to unsafe physical actions without proper validation boundaries.

AI · Bullish · arXiv – CS AI · Apr 20 · 7/10

🧠Researchers present a generative framework that converts real-world panoramic images into high-fidelity simulation scenes for robot training, using semantic and geometric editing to create diverse training variants. The approach demonstrates strong sim-to-real correlation and enables robots to generalize better to unseen environments and objects through scaled synthetic data generation.

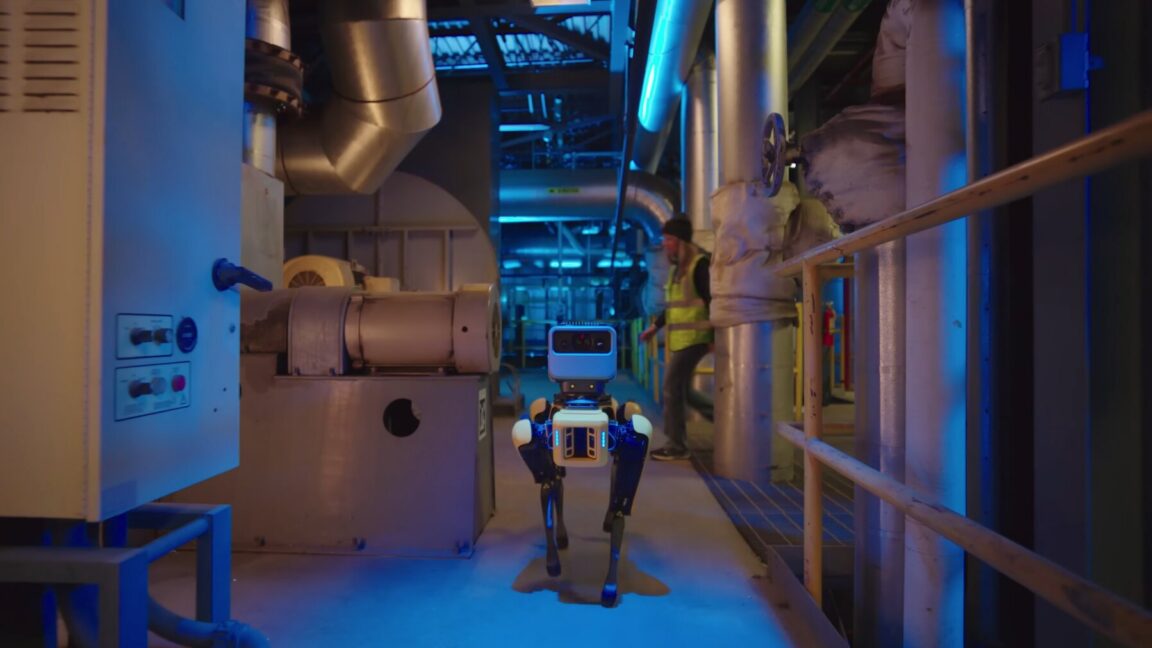

AI · Bullish · Ars Technica – AI · Apr 15 · 7/10

🧠Google has integrated its Gemini AI model into robotic systems that can autonomously read industrial gauges and thermometers during facility inspections. This advancement combines computer vision with large language models to enable robots to interpret analog instruments, improving automation capabilities in industrial monitoring and maintenance operations.

🧠 Gemini

AI · Bullish · arXiv – CS AI · Apr 14 · 7/10

🧠Researchers propose Grounded World Model (GWM), a novel approach to visuomotor planning that aligns world models with vision-language embeddings rather than requiring explicit goal images. The method achieves 87% success on unseen tasks versus 22% for traditional vision-language action models, demonstrating superior semantic generalization in robotics and embodied AI applications.

AI · Bullish · arXiv – CS AI · Apr 14 · 7/10

🧠Researchers demonstrate that robots equipped with minimal embodied sensorimotor capabilities learn numerical concepts significantly faster than vision-only systems, achieving 96.8% counting accuracy with 10% of training data. The embodied neural network spontaneously develops biologically plausible number representations matching human cognitive development, suggesting embodiment acts as a structural learning prior rather than merely an information source.

AI · Bullish · arXiv – CS AI · Apr 14 · 7/10

🧠TimeRewarder is a new machine learning method that learns dense reward signals from passive videos to improve reinforcement learning in robotics. By modeling temporal distances between video frames, the approach achieves 90% success rates on Meta-World tasks using significantly fewer environment interactions than prior methods, while also leveraging human videos for scalable reward learning.

AI · Bullish · Decrypt – AI · Apr 13 · 7/10

🧠Japan's largest tech companies (SoftBank, Sony, Honda, and NEC) have jointly established a new venture focused on developing trillion-parameter AI systems designed specifically for robotics and physical automation. The venture has secured $6.7 billion in Japanese government backing. This represents a strategic pivot away from conversational AI toward practical, embodied AI applications.

AI · Bullish · arXiv – CS AI · Apr 10 · 7/10

🧠Researchers propose a shift from deterministic to probabilistic safety verification for embodied AI systems, arguing that provable probabilistic guarantees offer a more practical path to large-scale deployment in safety-critical applications like autonomous vehicles and robotics than the infeasible goal of absolute safety across all scenarios.

AI · Bullish · arXiv – CS AI · Apr 7 · 7/10

🧠Researchers introduce ROSClaw, a new AI framework that integrates large language models with robotic systems to improve multi-agent collaboration and long-horizon task execution. The framework addresses critical gaps between semantic understanding and physical execution by using unified vision-language models and enabling real-time coordination between simulated and real-world robots.

AI · Bullish · arXiv – CS AI · Apr 7 · 7/10

🧠Researchers have developed a neuro-symbolic framework that enables robots to learn complex manipulation tasks from as few as one demonstration, without requiring manual programming or large datasets. The system uses Vision-Language Models to automatically construct symbolic planning domains and has been validated on real industrial equipment including forklifts and robotic arms.

AI · Bullish · arXiv – CS AI · Apr 7 · 7/10

🧠Researchers developed GRIT, a two-stage AI framework that learns dexterous robotic grasping from sparse taxonomy guidance, achieving an 87.9% success rate. The system first predicts grasp specifications from scene context, then generates finger motions while preserving the intended grasp structure, improving generalization to novel objects.

AI · Bullish · Crypto Briefing · Apr 7 · 7/10

🧠OpenAI co-founder Greg Brockman predicts AGI will emerge within the next few years and states that OpenAI is pivoting toward real-world applications. He emphasizes that AI integration will significantly transform robotics and that AGI could revolutionize intellectual tasks under a unified AI framework.

🏢 OpenAI

AI · Bullish · arXiv – CS AI · Mar 26 · 7/10

🧠Researchers introduce E0, a new AI framework using Tweedie discrete diffusion to improve Vision-Language-Action (VLA) models for robotic manipulation. The system addresses key limitations in existing VLA models by generating more precise actions through iterative denoising over quantized action tokens, achieving 10.7% better performance on average across 14 diverse robotic environments.

AI · Neutral · arXiv – CS AI · Mar 26 · 7/10

🧠Researchers propose a method to identify 'self-awareness' in AI systems by analyzing invariant cognitive structures that remain stable during continual learning. Their study found that robots subjected to continual learning developed significantly more stable subnetworks compared to control groups, suggesting this could be evidence of an emergent 'self' concept.

AI · Bullish · Blockonomi · Mar 17 · 7/10

🧠YZi Labs led a $52M funding round for RoboForce, which develops industrial AI robots including the TITAN model with 1mm precision for harsh environments. NVIDIA's CEO Jensen Huang featured RoboForce's TITAN robot at GTC 2025, providing significant validation for the company's Physical AI technology in industrial applications.

🏢 Nvidia

AI · Bullish · arXiv – CS AI · Mar 17 · 7/10

🧠Researchers introduce PRIMO R1, a 7B-parameter AI framework that transforms video MLLMs from passive observers into active critics for robotic manipulation tasks. The system uses reinforcement learning to achieve 50% better accuracy than specialized baselines and outperforms 72B-scale models, establishing state-of-the-art performance on the RoboFail benchmark.

🏢 OpenAI · 🧠 o1

AI · Neutral · arXiv – CS AI · Mar 17 · 7/10

🧠Researchers introduced Eva-VLA, the first unified framework to systematically evaluate the robustness of Vision-Language-Action models for robotic manipulation under real-world physical variations. Testing revealed OpenVLA exhibits over 90% failure rates across three physical variations, exposing critical weaknesses in current VLA models when deployed outside laboratory conditions.